http://blogs.plos.org/plos/2018/04/plos-update/

“Much of this is related to a strategic decision to shift from developing a proprietary platform for submissions”

A part of my personal history is a platform called Aperta which was previously called Tahi. It was a PLoS project and I was hired to design and build it. I quit when the PLoS Board decided to close the repositories, effectively making it a closed project. The repos remained closed, and as far as I know, are still closed today. Ironically after I left, they renamed the project ‘Aperta’ – Italian for ‘open’. A really silly marketing move to reassure everyone that despite what they may have heard, the project was still open…that was perhaps true, albeit (ironically and literally) in name only.

Now, it seems, the platform dev has been halted. Feels good to me. From what I heard (and I didn’t hear much), PLoS didn’t take the project in a good technical direction and generated a significant amount of bad faith and market confusion while trying to develop it behind closed doors.

To quote the new CEO Alison Mudditt (whom I respect very much; Coko worked with Alison when she was at UCP):

Part of this initiative will involve changes around the workflow system – Aperta™ – we set out to develop several years ago with the goal to streamline manuscript submission and handling. At the time we began, there was very little available that would create the end-to-end workflow we envisioned as the key to opening research on multiple fronts. But the development process has proved more challenging than expected and as a result, we’ve made the difficult decision to halt development of Aperta. This will enable us to more sharply focus on internal processes that can have more immediate benefit for the communities we serve and the authors who choose to publish with us. The progress made with Aperta will not be wasted effort: we are currently exploring how to best leverage its unique strengths and capabilities to support core PLOS priorities like preprints and innovation in peer review. This will be part of our planning for 2018.

I hope that PLoS releases the technologies that have been developed for Aperta (there was a lot more than just the submission system) into the open… with both open repositories and open licenses AND, more importantly, an open heart. Collaboration and openness have more to do with how people are than with what open license they choose. Several of the practices, including asking potential collaborators to sign Non-Disclosure Agreements (NDAs) before getting a demo of the system, were ridiculous and ungenerous.

Having said that, it would be awesome to see all that work released into the open, in open repos with open licenses, and no more blurring of the word ‘open’. After all, the systems developed, Tahi included, were all paid for by researchers. The PLoS Article Processing Charges fuel PLoS and they committed some of this revenue to the development of Tahi. When I was there, no external funding was secured for developing the system. Pedro Mendes made a good point in response to the announcement:

There is some merit to this, but I do applaud PLoS for being adventurous. If it had worked, APCs could have been lowered, not just for PLoS, but for any journal out there reliant on expensive and dysfunctional Manuscript Submission Systems. Alison also notes this in a discussion below the post mentioned above:

…the original idea was that Aperta would allow us to eliminate or speed up the slowest steps between a finished work and its publication in order to reduce the cost of our publishing services

That is true, and it was an admirable goal. However, whatever the journey was between then and now, the project should always have been out in the open as a public asset. Open for science, open for access, open for source, open for all. The fact that researchers paid for it but it was turned into a closed project mid-flight is reprehensible, and in the end it worked against PLoS. In particular, it severely weakened PLoS claims to supporting all things open. What a mess.

But, it can’t be ignored that Tahi is about 5 years old now, which is old in software years. An entire generation of technologies that are better suited to solving these problems has arisen in that time. The system is now not much more than a still (just) relevant but outdated approach. That is the risk you take when you develop things behind closed doors. By the time you release it (or don’t in this case), it is out of date. That said, it would still be good to release it, but there are better technologies and approaches out there now.

So I look on with interest to see what will happen next. I sincerely hope PLoS can return to cutting a path through publishing and exploring and enabling a viable Open Access model that others can follow. With Alison at the helm I am betting things are going to take a much needed turn for the better, not just with this project, but on all counts.

As for me, I learned a lot from designing Aperta (I prefer to call it Tahi). The design process was an introduction to scientific journal publishing for me. I learned a great deal. Tahi gave me, at the time, an unencumbered dream time to imagine something new. It had a lot of interesting innovative approaches and if I had stayed with the project it would have ended up close to where PubSweet is now as I wanted to completely decouple the ‘spaces’ (a concept important to Tahi). It would not have been as good as PubSweet at doing this as a complete ‘decouple’ really has to be imagined from the start, and isn’t as clean if retrofitted. Still, the system would have been a lot more flexible and reusable.

But that wasn’t to be. Don’t get me wrong – I don’t think Tahi was the perfect platform, but it was a pretty good starting point with some significant innovations. At the time, I was looking forward to shaping Tahi with use and maturing it into an excellent system. The good news is, the next platform you design is always better. I took a lot of what I learned (I have now been involved in instigating around a dozen publishing systems) to my next development, and worked hard to re-conceptualise a new system that avoided some of the mistakes I made with Tahi, and took some of the good parts a whole lot further. That new project is PubSweet and it is looking awesome, and leverages modern technologies and approaches to the max – mainly thanks to the bunch of amazing folks working on it within the Coko team (particularly Jure Triglav) and also now, increasingly, from the collaborators we work with (at this stage mainly eLife, YLD, Hindawi and ThinSlices). Also a huge thanks to the Shuttleworth Foundation for backing me, especially because it was at a time (I had just quit PLoS) when it was very much needed. Their backing meant Coko was possible, and consequently, PubSweet and everything else we have done.

Anyways… it was past time PLoS moved on from Aperta too, and congratulations to Alison for making the right call, especially since it would have been a difficult one given the cultural forces at play inside PLoS.

We are pretty close to our PubSweet 1.0 with the RFC now out for PubSweet 2.0, and a PubSweet dev site release next week.

It has been an amazing effort, particularly by Jure Triglav, the lead dev for PubSweet at Coko, but also fantastic work from Richard Smith-Unna, Alf Eaton, Yannis Barlas, and Christos Kokosias. Also more recently some great contribution from Alex Georgantas.

So, we are pretty much there and I’m presenting in San Francisco this week as part of a small Coko event to reflect on the future of the framework and discuss the RFC. For this purpose I thought I’d write a post to help me think through the thinking that got us here.

So…the thinking behind PubSweet started when I came back from Antarctica around 2007 or so (I was there setting up an autonomous base for artist-scientist collaborations).

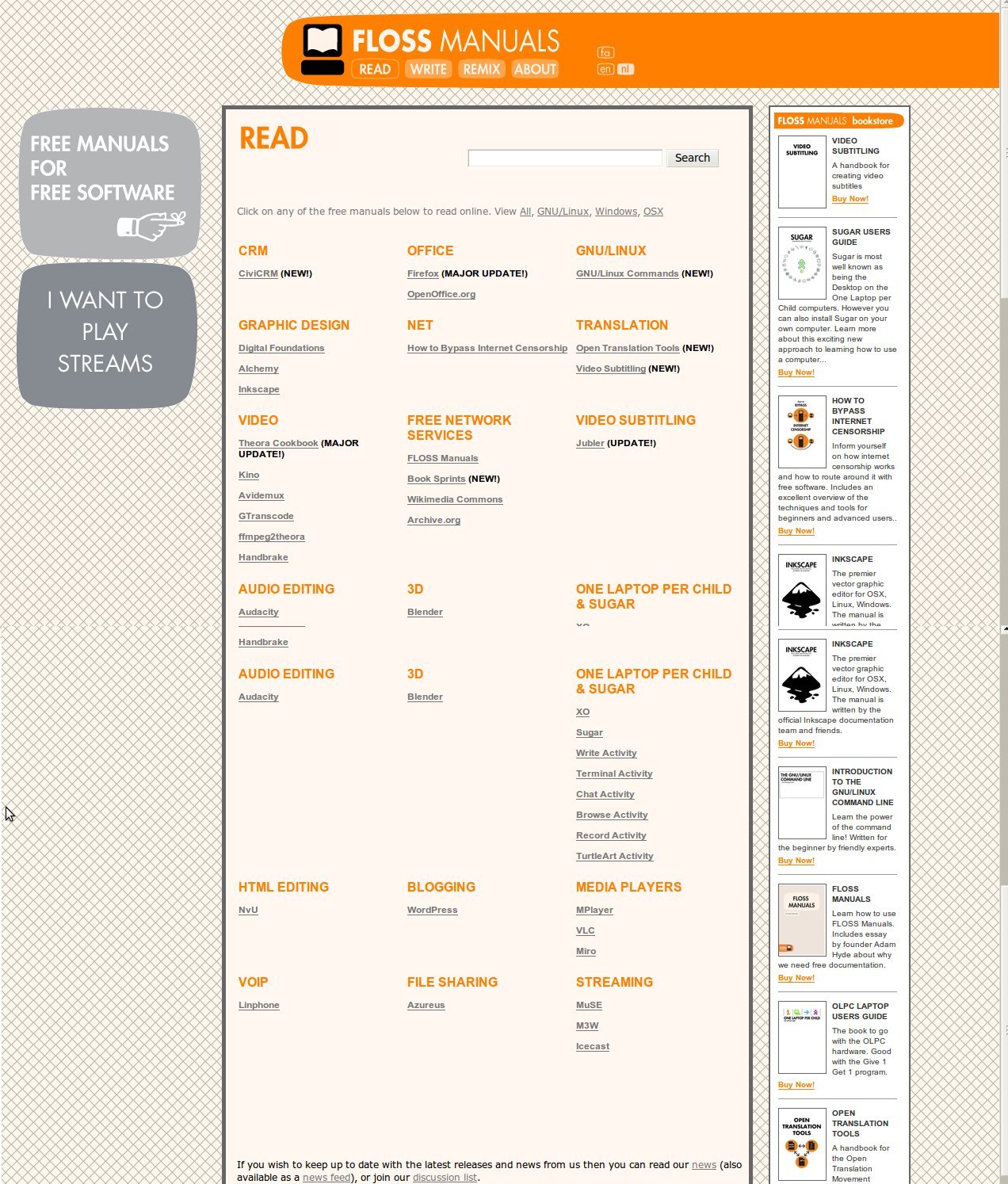

I decided I wanted to give up the art world and try something new. The something new turned out to be FLOSS Manuals – a community writing free manuals about free software. I started it when I was living in Amsterdam somewhere around 2007. In order to execute on this mission I needed to get a couple of things sorted. Namely, learn how to build community, work out processes for rapid book production, and work out the tooling.

The tooling started with me scratching around with TWiki, a wiki written in Perl that happened to have the best plugins for rendering PDF. I scratched around, writing some Perl and cutting and pasting a whole lot more, and added some crazy .htaccess URL rewriting to produce a basic system for producing books. It was pretty scratchy but it actually worked. Later a buddy helped extend the system and later still I was able to pay him and others to extend it.

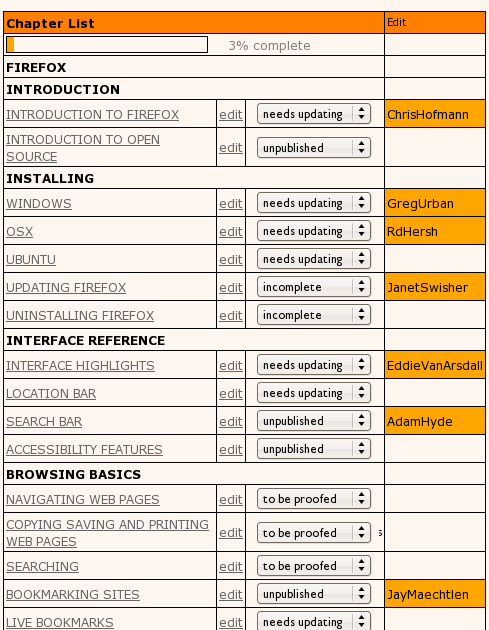

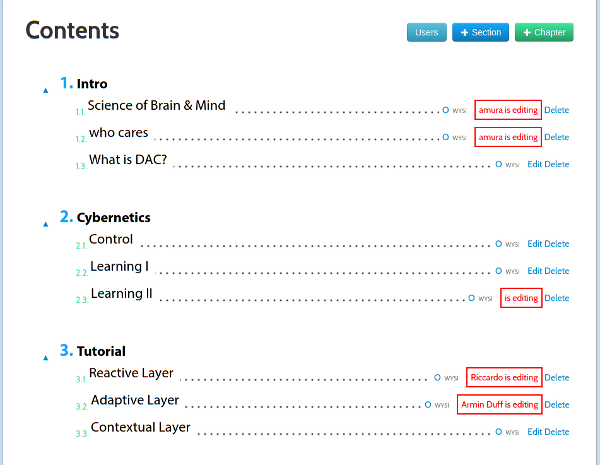

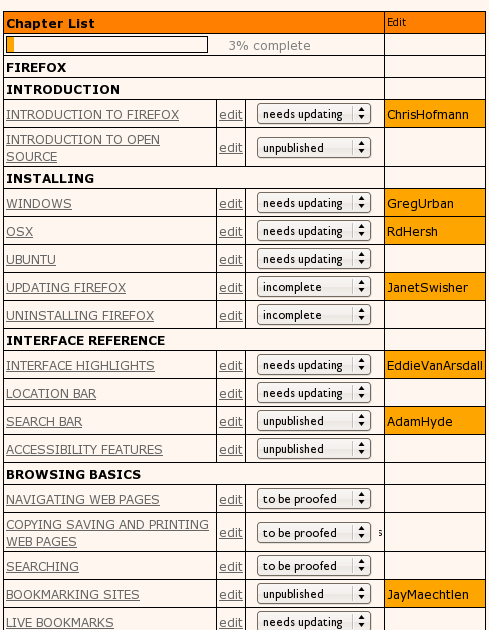

At the time it pretty much comprised a page (per book) for creating a table of contents.

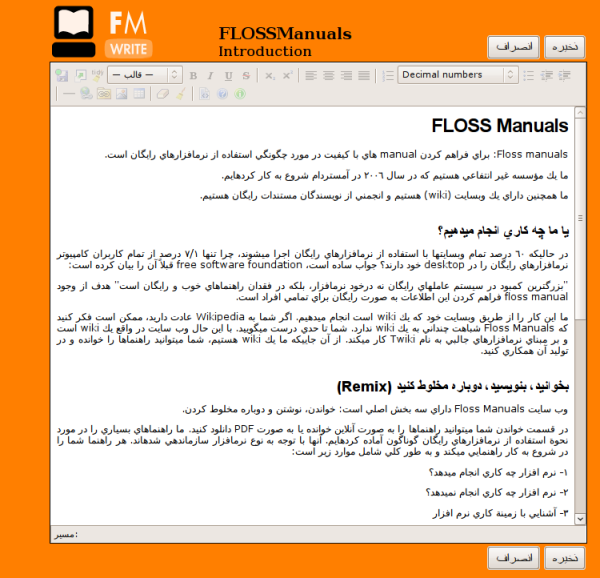

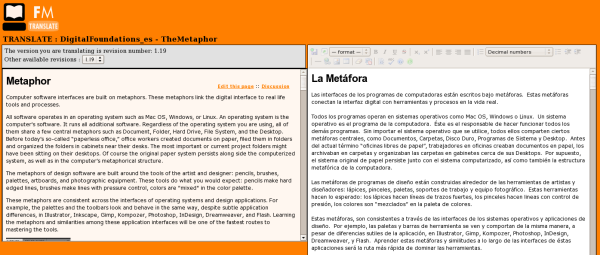

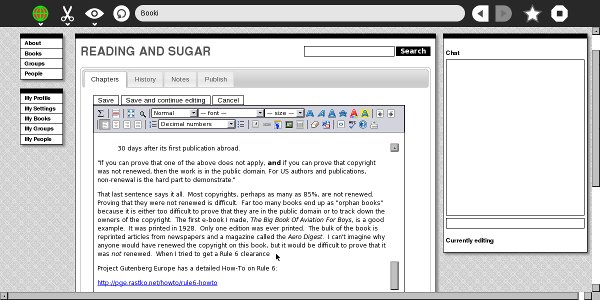

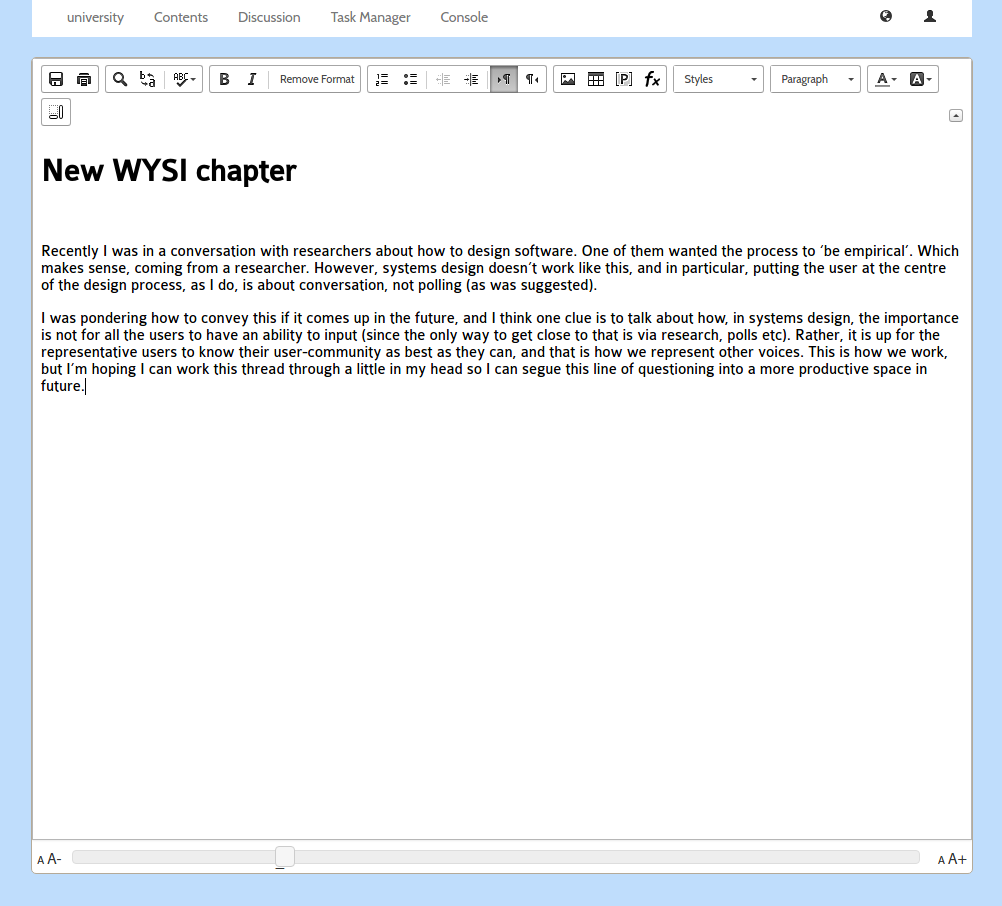

and an interface to edit the content (chapters). I ripped out the native wiki markup editor and replaced it with a WYSIWYG editor, I think it was TinyMCE…

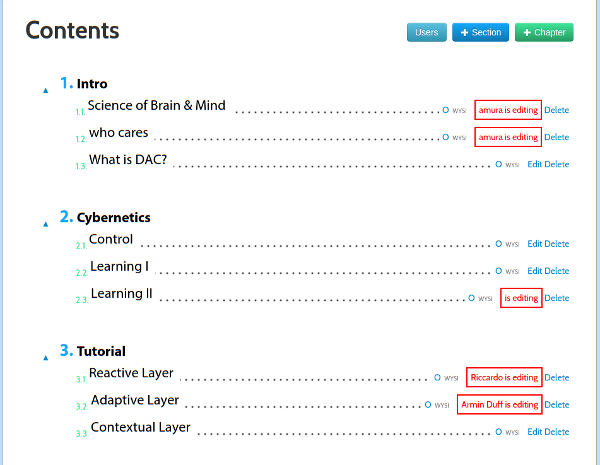

As you can see Right-to-Left content (in this case, Farsi) was also supported. There were also some basic things in place for keeping track of the status of a chapter, the version number, side by side diffs, side by side translation interfaces, and, later, dynamic table of contents organisation and edit locks.

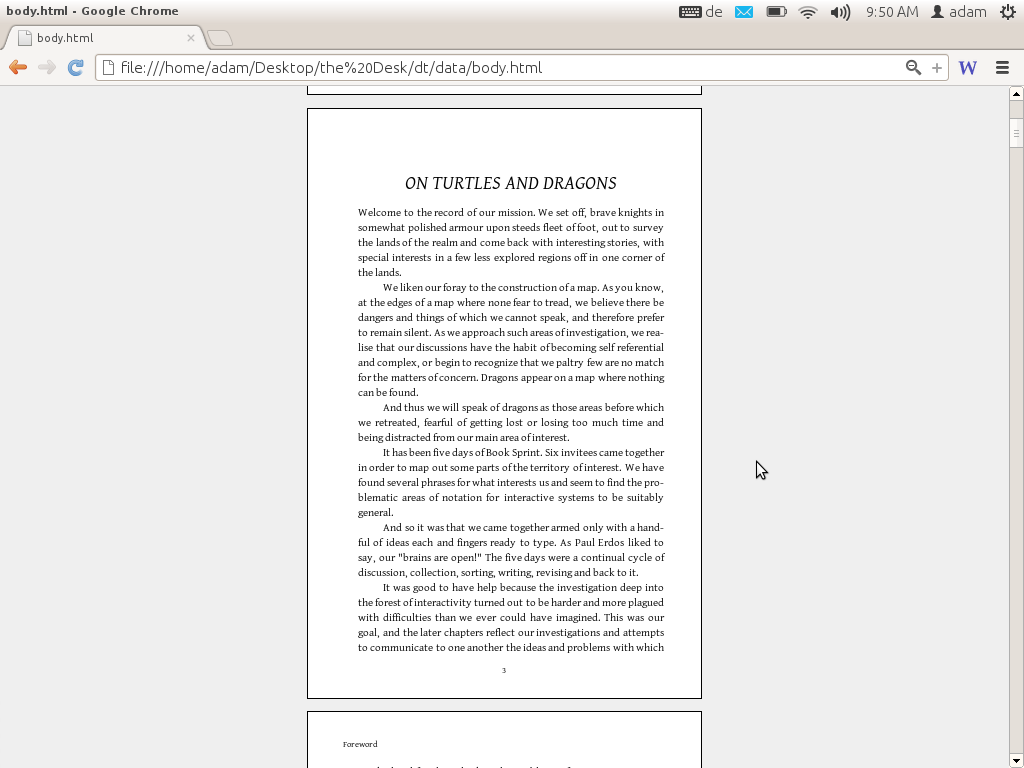

Coupled with some basic PDF rendering stuff and a way to push the content from the ‘draft’ to the publishing front end and we were away.

It actually had some other pretty cool stuff, such as side by side translation interfaces…

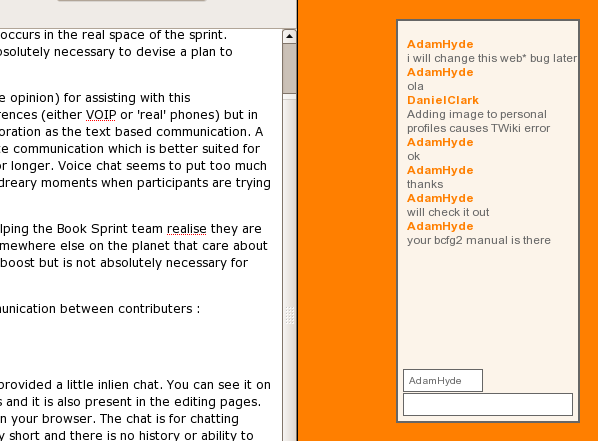

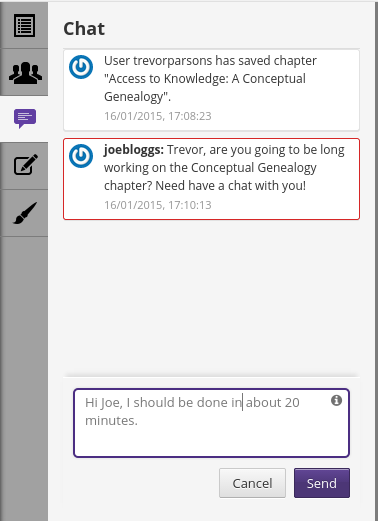

..a built in live chat for talking with collaborators…

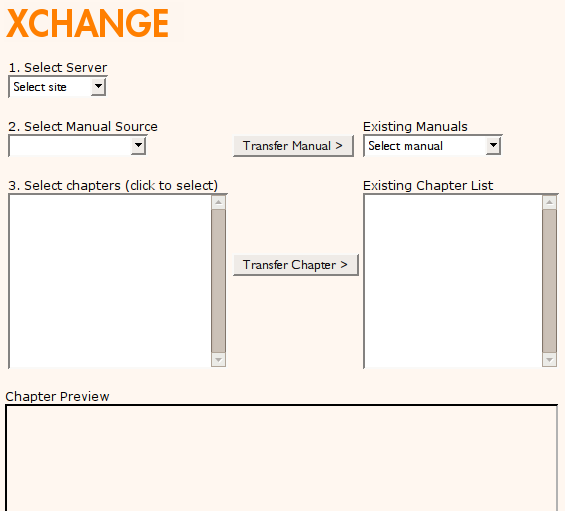

and even a way to send books between different instances (eg for sending a book from the FLOSS Manuals French community site to FLOSS Manuals Finnish for translation)….

We could even render book formatted PDF and push to the lulu.com print on demand services. I just now checked and some of the books are still there!

Not bad for a Perl-based system, built on top of a wiki that wasn’t supposed to do this kind of thing, and built with very few resources. The TWiki extensions were contributed back upstream to the TWiki repo and it was all open source, but it was pretty hard to rebuild and no one I knew actually had a similar use case.

After this, I embarked on a journey to replace the system with a custom built solution specifically for book production. I can’t remember exactly when this started, maybe 2008 or 2009 or something. It was originally called Booki…

…which later became Booktype. Booki (and later Booktype) replaced the FLOSS Manuals tooling, although you can still see the working old tool here. That ole Perl code is still functional with no maintenance after 10 years, I can hardly believe it. The docs on how to use it also still exist.

Booki was built with Django (python) and pretty much had all the same stuff. Although the look and feel was changed quite a bit in the transition. There aren’t too many images around of Booki although I did find these screenshots of Booki taken by someone using it on the OLPC XO! (FLOSS Manuals did all the docs for OLPC/Sugar OS etc).

It was hard to get financial support for it. Internet Archive gave us $25,000, which at the time seemed like a fortune. The evolution of Booki to Booktype represented me taking the project to a buddy’s Berlin-based org, Sourcefabric (I was living in Berlin at the time), and parking it there so I could get more resources to build it out.

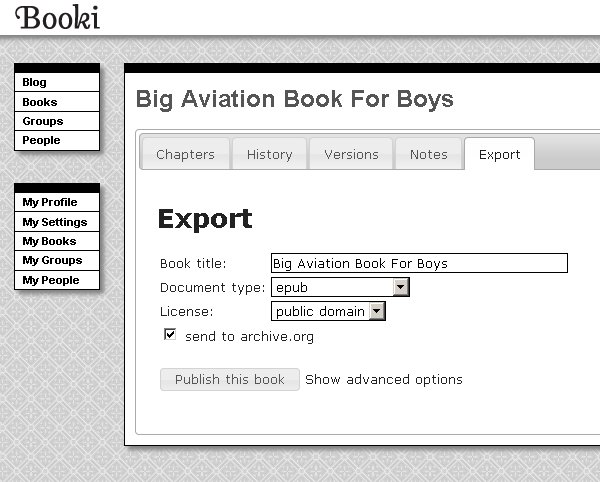

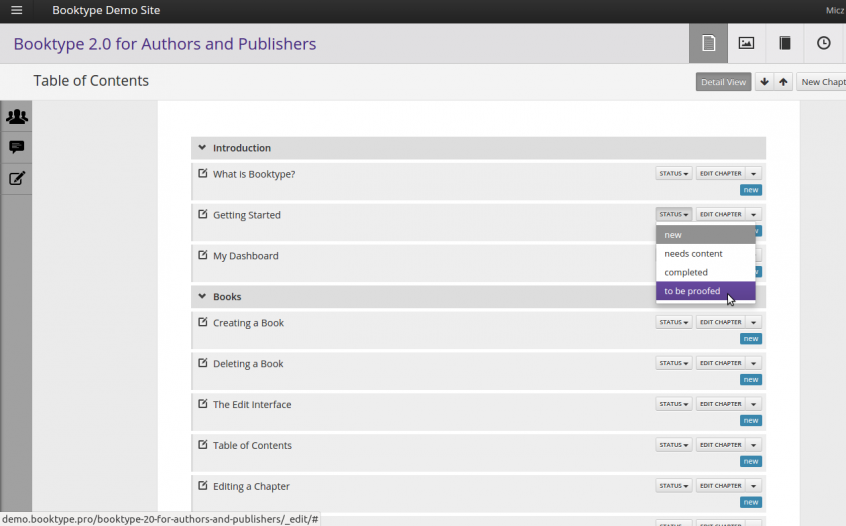

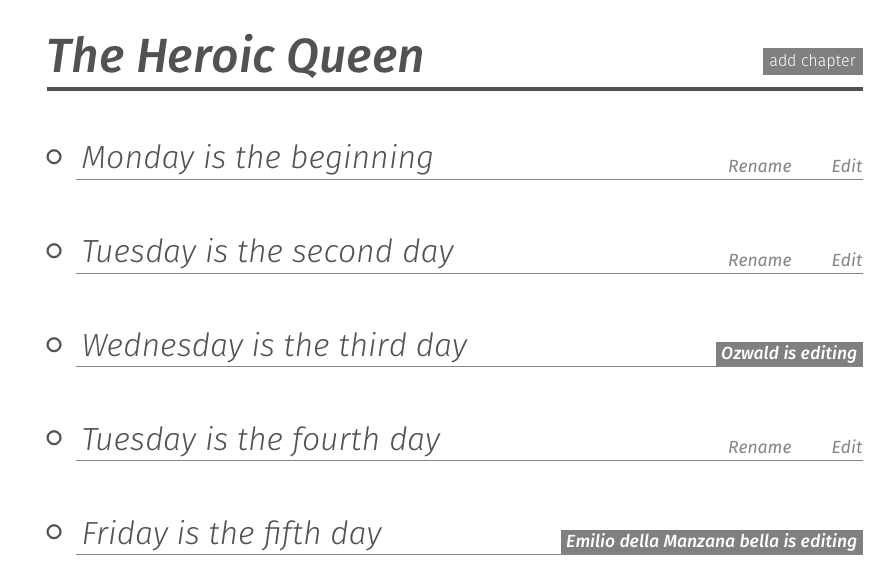

Booki/Booktype pretty much had, and has, the same stuff as the FLOSS Manuals system, just purpose built. So it had a table of contents manager

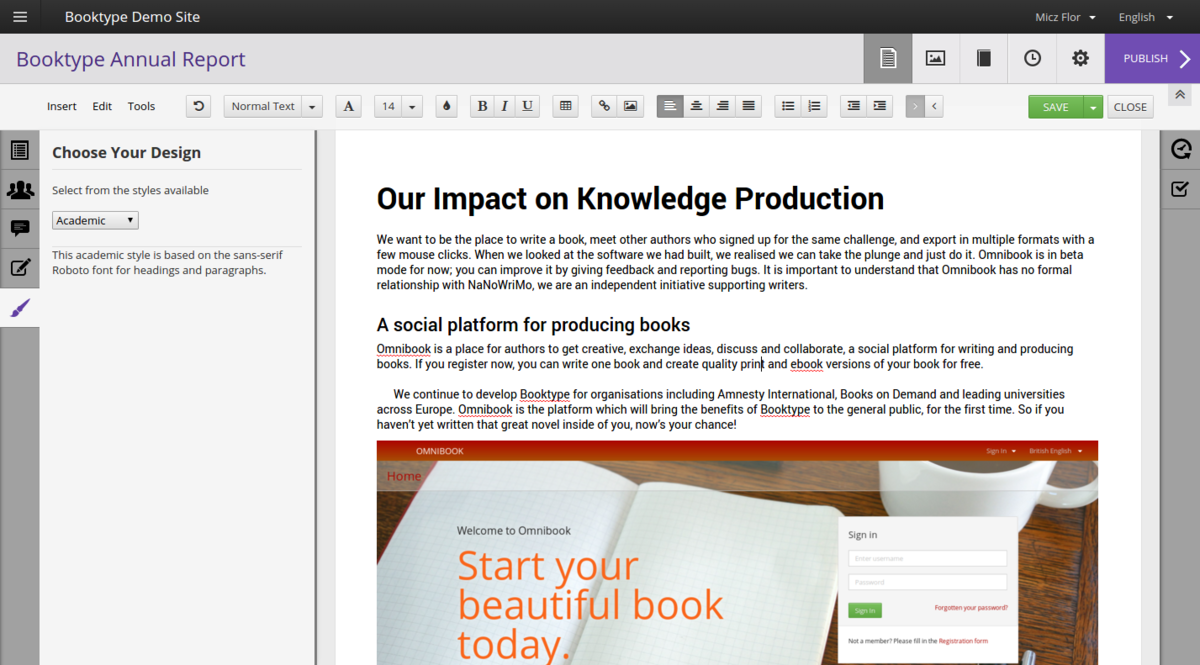

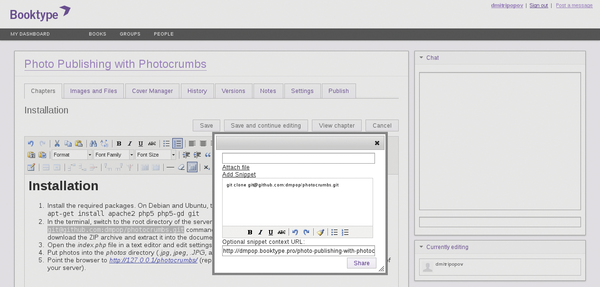

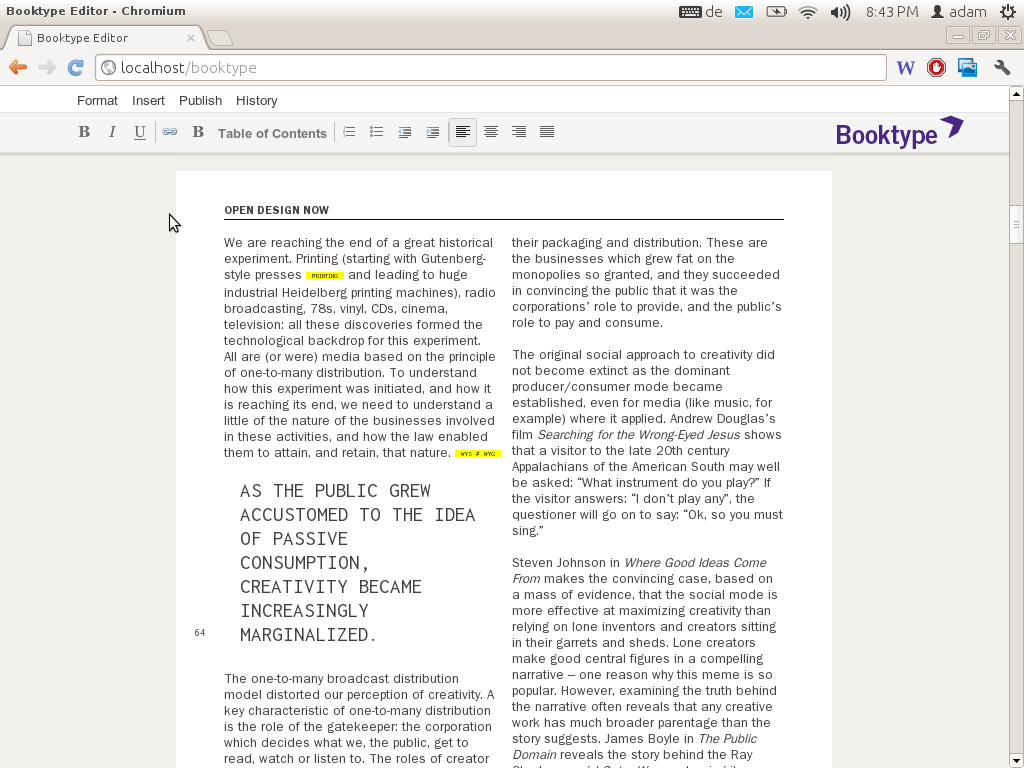

And a book (chapter) editor…

…chat…

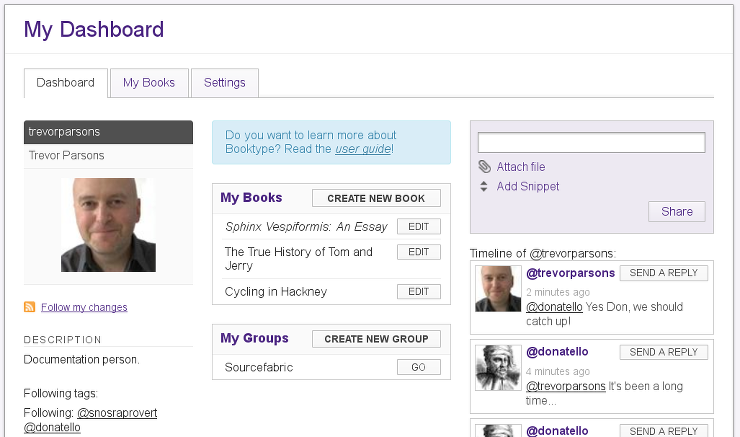

And the other stuff. Perhaps the only new features (compared to the FLOSS Manuals system) were a dashboard…

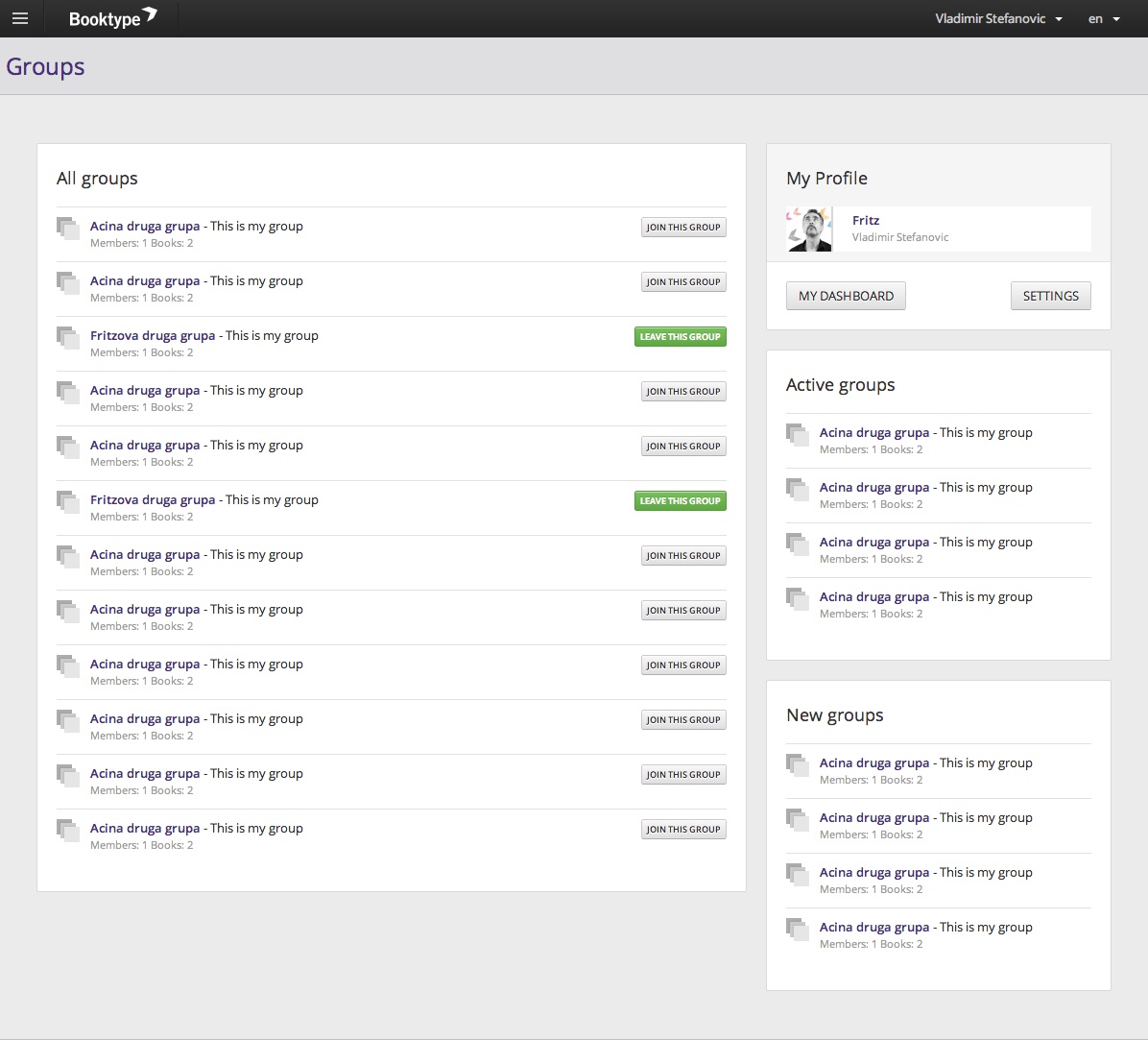

…groups…

and an interesting way to have Twitter-like messaging to pass snippets from chapters to other users.

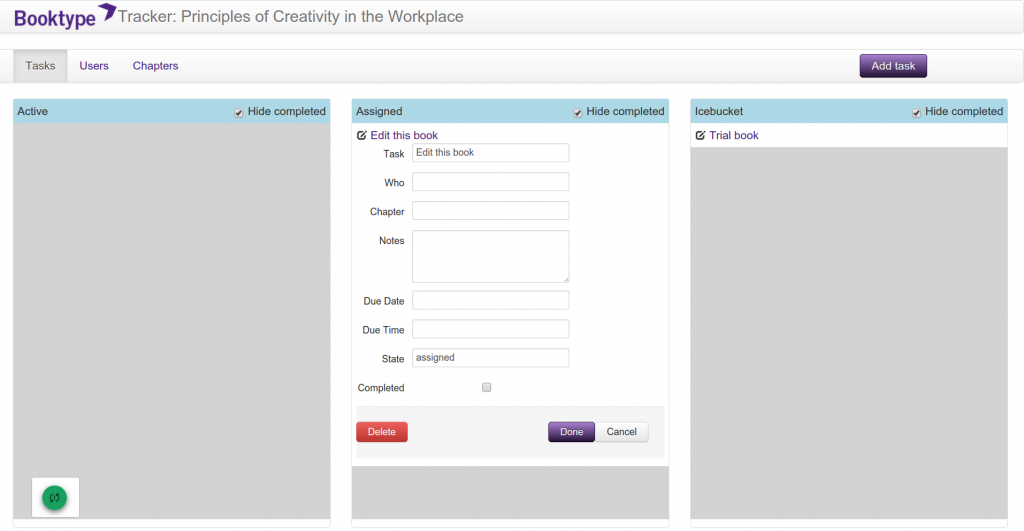

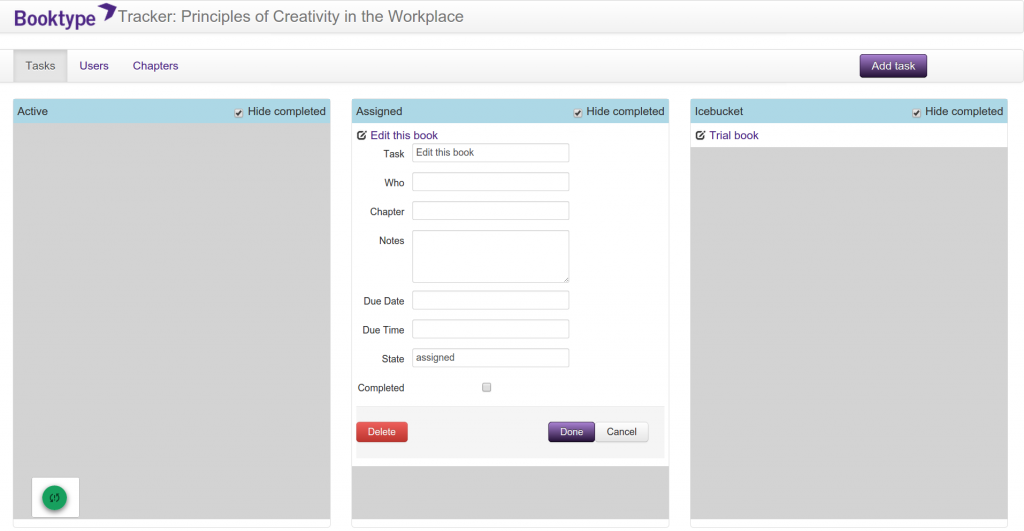

Before I left Sourcefabric I wanted to get some other innovations built but didn’t get there. I did build some prototypes though. There was a task editor…

…and live in-browser book design…

Booktype is still going strong, now it is its own company (based in Berlin) and they also run the Omnibook commercial service using the software.

I left because John Chodacki and Kristen Ratan from PLoS invited me to come work for PLoS to design a new web-based journal submission system. I agreed…

But, before I leave the book story behind for a bit..I had set up Book Sprints as a company and put a small amount of my own money into building two new book production systems somewhere between leaving Sourcefabric and starting at PLoS. These two systems were PHP-based and Juan Gutierrez built them over some months.

I wanted to do this because I was a little frustrated by Booktype not moving forward and also the platform was becoming more difficult to use. We were using it for Book Sprints but after I left the product took a new UI direction and I was finding Book Sprints participants were not enjoying using the system. So I built a Book Sprints specific system called… PubSweet… the namesake of the current Coko system which has eventually turned into something of a prototype for the new PubSweet… this new system was a lot simpler and easier to use than Booktype. It was initially meant to be modular but I think we lost that somewhere along the way. Cleanly modular systems take a lot of extra effort and time to produce so we gave in for speed of development’s sake.

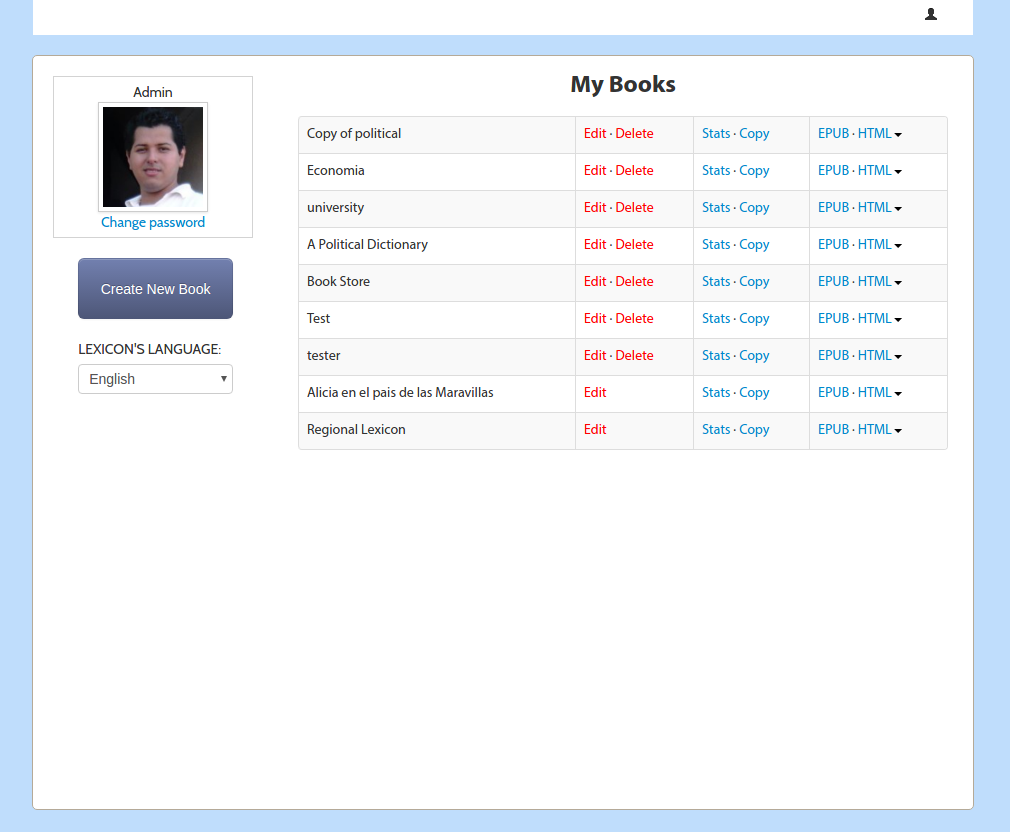

The old PubSweet had a dashboard….

..table of contents manager…

and editor. Just like before!

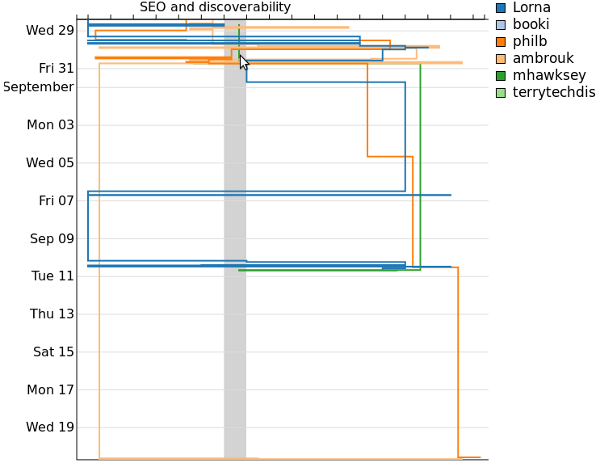

We also introduced some new innovations including visualisations of the book production process…

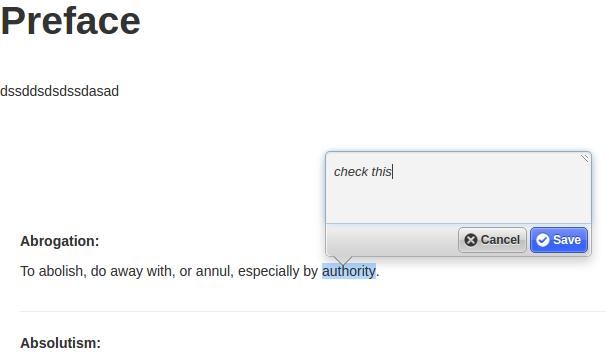

Plus annotation (using Nick Stenning’s Annotator software)…

and other stuff…I think threaded discussions, outline views, review page, an in-browser book renderer, book stats and I can’t remember what.

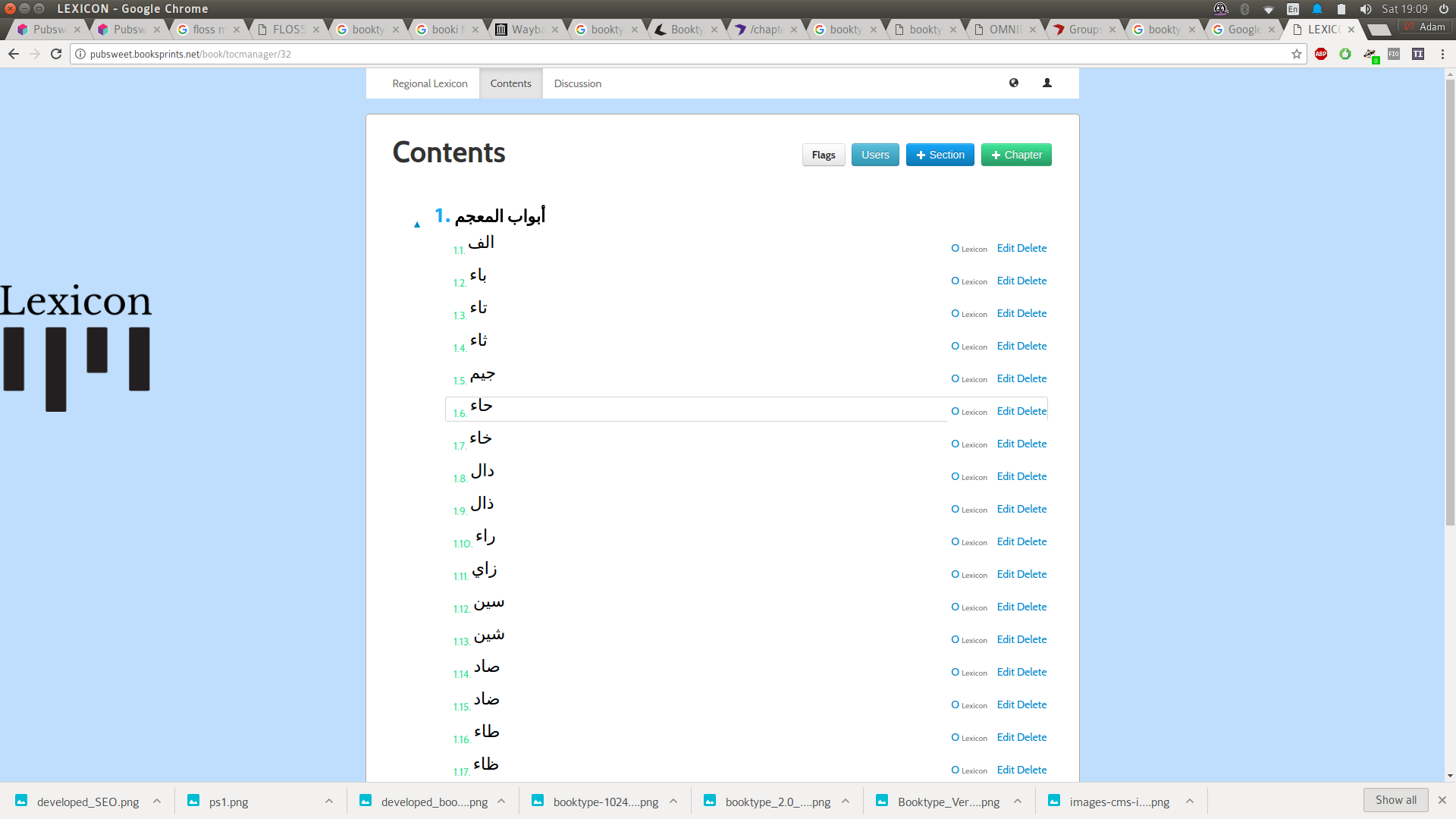

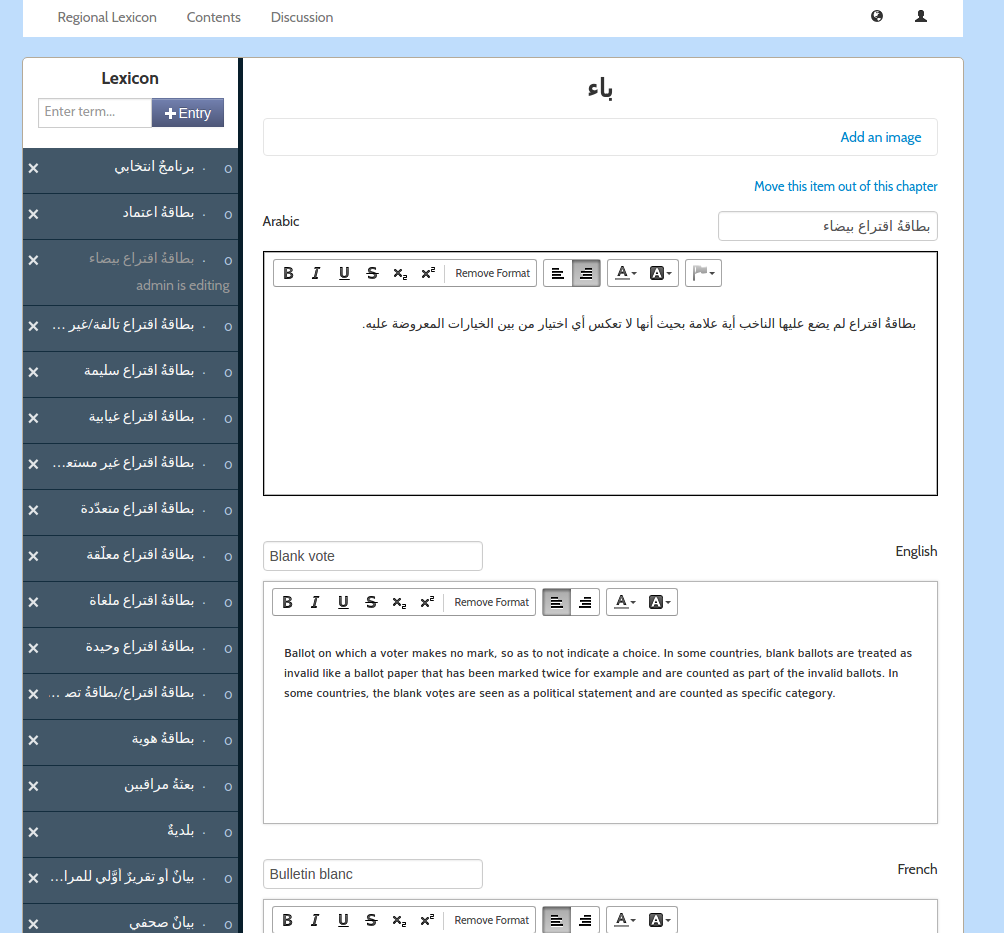

Anyway …I also built a platform on top of this old PubSweet for the United Nations Development Programme. It was called Lexicon. Lexicon was pretty interesting as it opened my mind for the first time to the idea that an editor is not an editor is not an editor. Different content types (in a book) may require different editors or production environments.

Lexicon was produced to collaboratively produce a tri-lingual (Arabic, French, English) lexicon of electoral terms for distribution in Arabic regions.

Lexicon had all the same stuff as the old PubSweet but with one major innovation, you could create chapters that were WYSIWYG based, or you could create a chapter which enabled you to add and sort individual terms and provide translations.

It was a pretty interesting idea and we were able to make a really cool book which the UNDP printed and distributed across many Arabic-speaking countries. I still have the book on my bookshelf.

The other interesting thing was that the total cost for building this on top of the old PubSweet was $10,000 USD. This was mostly because we could leverage all the existing stuff and just build the difference…interesting idea!

Ok, so then I dropped book production systems around 2013 or so for a while and went to work for PLoS on a system that was called Tahi and then became Aperta. The name Tahi came from the name of the street I was living on in New Zealand before I had a US work visa and was designing the system – Reotahi Road (cool road). Reotahi means ‘one voice’ and ‘tahi’ means ‘one’ in Maori. It was built on Rails with Ember. Essentially the front end and backend were decoupled although it was really pushing the technology at the time to do this. I designed the system and moved to San Francisco to manage the team to build it.

Tahi (Aperta) had a dashboard (surprise!) and editor, just like the book production systems but I introduced two major innovations – Cards, and card-based workflow management interfaces. Unfortunately, while I was asked to come and build an open source system, things went a little weird at PLoS and they closed the repos, effectively making it a closed platform. So I quit. That also means I don’t have any screenshots to show you. Pity. If you sign an NDA with PLoS I believe they might show it to you.

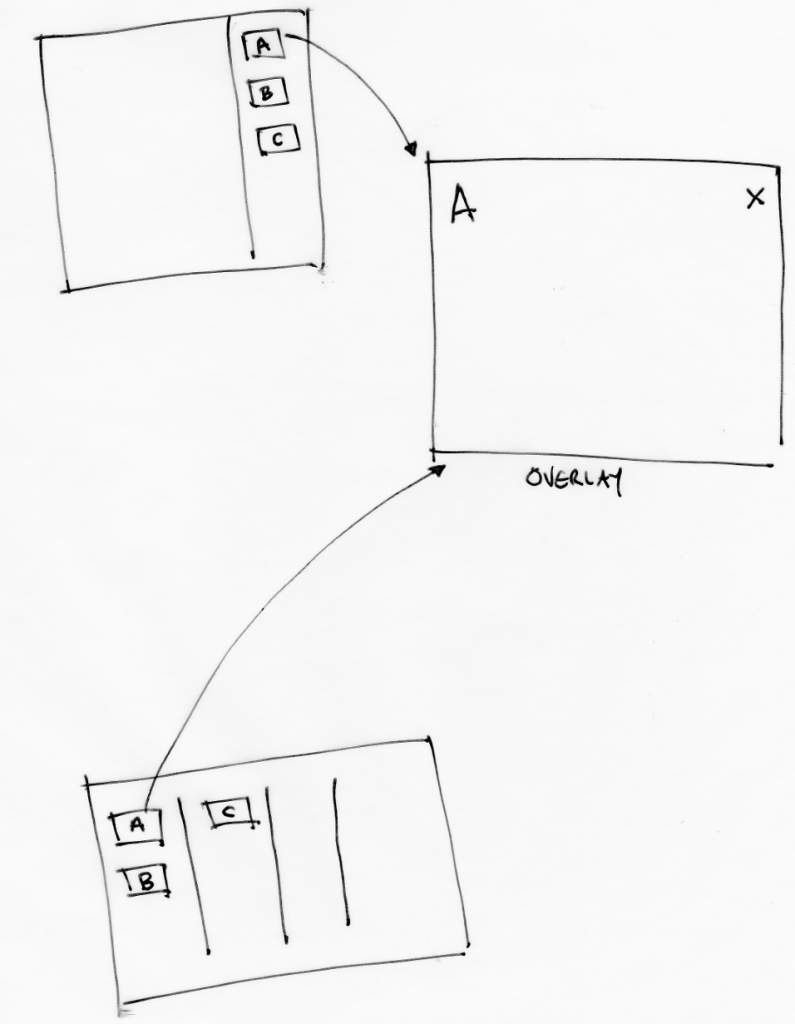

However, you can picture it a little – imagine something like Trello, or Wekan – these are card based kanban systems. But imagine if you could custom make cards to do anything. Effectively cards were first class citizens of the platform and could access the db, perform system operations, make external calls, do validations, whatever you wanted. In hindsight, I think they were as close to an idea of an ‘app’ that you could have in a browser platform, although that wasn’t the way I thought about them at the time. Additionally, cards were imported into the system since each card was actually a gem file. This meant any publisher could custom make their own cards to do whatever they wanted and place them within the kanban-like workflow space (task manager). Pretty neat.

So, cards could be surfaced and used anywhere in the system. We used them for authors to enter submission data, but also for production staff to perform operations, for reviewers etc etc etc. They could also be placed on a kanban board to make a workflow. Cards could be moved around the workflow and deleted or new ones added at any time.
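To make the card idea a bit more concrete, here is a minimal sketch in Python. It is not Tahi code (Tahi was Rails and Ember, and cards were packaged as gems); the names `register_card`, `Workflow`, `title_check` and `word_count` are all made up for illustration. It only shows the general shape: cards are self-contained units registered by name, and a kanban-like workflow is just columns of card names that can be added to or moved around at any time.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical model: a card is a named handler that receives a manuscript
# record (a plain dict here) and returns a result dict.
CardHandler = Callable[[dict], dict]

CARD_REGISTRY: Dict[str, CardHandler] = {}


def register_card(name: str):
    """Decorator that makes a card available to any workflow by name."""
    def decorator(handler: CardHandler) -> CardHandler:
        CARD_REGISTRY[name] = handler
        return handler
    return decorator


@register_card("title_check")
def title_check(manuscript: dict) -> dict:
    # A validation card: reports whether the submission has a non-empty title.
    return {"ok": bool(manuscript.get("title", "").strip())}


@register_card("word_count")
def word_count(manuscript: dict) -> dict:
    # A production card: counts whitespace-separated words in the body.
    return {"words": len(manuscript.get("body", "").split())}


@dataclass
class Workflow:
    # Columns of a kanban-like board; each column holds card names in order.
    columns: Dict[str, List[str]] = field(default_factory=dict)

    def add_card(self, column: str, card_name: str) -> None:
        if card_name not in CARD_REGISTRY:
            raise KeyError(f"unknown card: {card_name}")
        self.columns.setdefault(column, []).append(card_name)

    def move_card(self, card_name: str, source: str, target: str) -> None:
        self.columns[source].remove(card_name)
        self.columns.setdefault(target, []).append(card_name)

    def run_column(self, column: str, manuscript: dict) -> Dict[str, dict]:
        # Run every card in the column against the manuscript.
        return {
            name: CARD_REGISTRY[name](manuscript)
            for name in self.columns.get(column, [])
        }


manuscript = {"title": "On cards", "body": "a b c"}
workflow = Workflow()
workflow.add_card("submission", "title_check")
workflow.add_card("submission", "word_count")
workflow.move_card("word_count", "submission", "production")
results = workflow.run_column("submission", manuscript)
```

The point of the registry is that a publisher could add their own card without touching the workflow code, which is roughly what packaging cards as separate gems made possible.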

To manage all this my other idea was to let these cards flow through a TweetDeck-like interface. So you could sort cards, per role, per user, at volume.

Tahi essentially had four spaces – a dashboard, a submission page (which displayed the manuscript in an editor, and submission data could be entered through cards), a task manager (workflow for the article, using cards as tasks), and a ‘flow manager’ (the TweetDeck-like interface for sorting all your cards across all your articles). While the FLOSS Manuals, Booki and Booktype platforms were pretty much monolithic systems, the old PubSweet was sort of modular. Tahi went further and decoupled the front end and back end, but I also wanted to break these four spaces into discrete components. That would have given the system enormous flexibility but unfortunately I wasn’t able to do this before I left.
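Here is a rough sketch of what breaking spaces into independent components could look like. Again this is Python for illustration only, not how Tahi or PubSweet actually do it; `Space` and `build_platform` are hypothetical names. Each space knows its own route and how to render itself, and a platform is just whichever spaces you choose to include.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable


@dataclass(frozen=True)
class Space:
    # Hypothetical component: a name, the route it mounts at, and a render
    # function that turns shared context into some output (a string here).
    name: str
    route: str
    render: Callable[[dict], str]


def build_platform(spaces: Iterable[Space]) -> Dict[str, Space]:
    """Assemble a platform from whichever spaces are passed in."""
    routes: Dict[str, Space] = {}
    for space in spaces:
        if space.route in routes:
            raise ValueError(f"route already taken: {space.route}")
        routes[space.route] = space
    return routes


dashboard = Space(
    "dashboard", "/", lambda ctx: f"{len(ctx['articles'])} articles"
)
task_manager = Space(
    "task_manager", "/tasks", lambda ctx: f"{len(ctx['tasks'])} open tasks"
)

# Two different platforms built from the same parts.
journal = build_platform([dashboard, task_manager])
book_tool = build_platform([dashboard])

context = {"articles": ["a1", "a2"], "tasks": ["review"]}
```

The same dashboard is reused by both platforms without being rebuilt, which is the flexibility I was after.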

Anyways, Tahi/Aperta is a little old now but it was pretty cool. I don’t know what happened to Aperta but I believe it is now being used for PLoS Biology.

After I left PLoS I was offered a Fellowship by the Shuttleworth Foundation to continue on the mission to reform publishing. So I started Coko with Kristen Ratan (who was the publisher at PLoS)….

So there are some themes from building the past 7 or 8 publishing systems (depending on how you count it… there were also some other interesting experiments in between). First, the next system you build is always better. That is for sure. It’s an important thing to realise because when I developed the FLOSS Manuals system I thought that was it. Nothing could be better! But I was wrong. Then Booki/Booktype and I felt the same thing. I was so proud of it and nothing could be better! haha… you get the picture. The reason why it’s important to understand this is because I think it gives someone like me a bit of freedom. I can take some risks with systems knowing you get some stuff right, you get some stuff wrong. But the next system will get that bit you got wrong, right. Taking this attitude also takes the pressure off and you can have more fun which is good for your health, the team you are working with, and the system.

As far as technical lessons learned… well… after looking back at all these systems when we started Coko, I realised that the idea of independent ‘spaces’ for publishing workflows had a heap of currency. How many systems did I have to build with baked in dashboards, task managers, editors, table of content managers, etc etc etc before I could realise it doesn’t make sense to do this over and over. I wanted to take the idea of these kinds of spaces forward and not have to build them again and again… so some kind of system where you could include whatever spaces/components you wanted would be ideal… This would have two very important side benefits:

That was a lot of the thinking behind the new PubSweet – PubSweet 1.0. But there is some other stuff too. Through my time at PLoS, I came to understand just how many variables affect workflow choices in journal publishing and that each publisher has slightly different conditions and roles that affect this. That means that the access control is complex. We might think there are various roles – author, editor, reviewer etc that shepherd an article through a process but it’s not that simple. Any number of conditions can affect who gets to see or do what and when. So we need to have a very sophisticated way to set and manage this.
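As a small illustration of why a simple list of roles isn’t enough, here is a sketch of condition-based access checks in Python. It is an assumption-laden toy, not PubSweet’s actual authorization code; the `Request` fields, the statuses and the rules themselves are invented for the example. The idea is that each rule looks at the role together with other conditions (what state the article is in, whether the person is assigned to it) before allowing an action.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class Request:
    # Hypothetical attributes a publisher might care about.
    role: str
    action: str
    article_status: str
    is_assigned: bool


Rule = Callable[[Request], bool]

# Each rule combines a role with conditions; a request is allowed if any
# rule matches. A real publisher would have many more of these.
RULES: List[Rule] = [
    # Authors may edit only while the article is still a draft.
    lambda r: r.role == "author"
    and r.action == "edit"
    and r.article_status == "draft",
    # Reviewers may read only articles they are assigned to, during review.
    lambda r: r.role == "reviewer"
    and r.action == "read"
    and r.is_assigned
    and r.article_status == "in_review",
    # Editors may always read and assign reviewers.
    lambda r: r.role == "editor" and r.action in {"read", "assign_reviewer"},
]


def is_allowed(request: Request) -> bool:
    return any(rule(request) for rule in RULES)


author_drafting = Request("author", "edit", "draft", False)
author_in_review = Request("author", "edit", "in_review", False)
unassigned_reviewer = Request("reviewer", "read", "in_review", False)
```

Notice that the same author is allowed to edit in one case and not in the other; the role alone doesn’t decide it.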

There was a lot of other stuff to take into account too, but I mention these two specifically because recently, when I was talking to Jure (lead PubSweet dev) about PubSweet 1.0 and reflecting on how far we have come, he nailed it. He identified the two major innovations of the system as being:

I agree entirely. I think I might add another:

It is pretty easy, and getting easier, for developers to develop publishing platforms/workflows (call them what you will) with PubSweet. I think it is pretty astonishing, and I think these 3 characteristics put together enable us to build multiple publishing systems fast and in parallel (with small teams), as well as opening the door for others to do the same and creating huge opportunities for innovation.

If we are successful at building community this will be a huge contribution to the publishing sector.

In a future post, I’ll break PubSweet spaces / components down in more detail. There were also a lot of other similar stories regarding technical innovations on the way (eg Objavi->iHat->INK), but I’ll break them down into posts on another day.

I meant to also talk about Editoria here, the monograph production system built on top of PubSweet, and xpub – the PubSweet-based Journal system.

They are both pretty amazing and leverage so much more than the previous systems identified above.

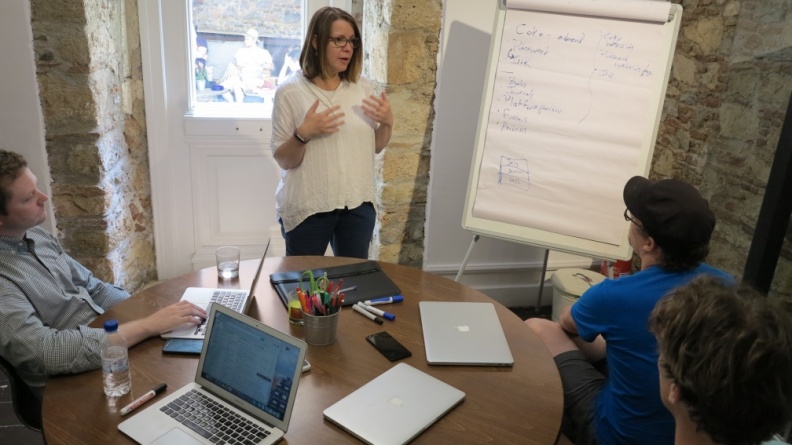

I think the main thing with them is that we are working extremely closely with publishers using the method I developed – the Cabbage Tree Method.

This means that I am no longer involved in building, what I would call, naive publishing systems. Naive in the sense that publishers could use, for example, Booktype, but it’s not really built for publishers. It’s a general book production system built by someone who didn’t know much about publishing at the time. That’s great of course, there is a place for it. However, Editoria is not a naive system. It is designed by publishers for publishers and the difference is enormous.

But I will leave a longer rant about this for another post.

I do however, want to say that I didn’t, of course, build any of the above systems by myself. There were many people involved and I have credited them elsewhere in this blog. I’m not going to do another roll call here except for Jure Triglav.

Jure and I sat down just over 18 months ago to discuss some of the lessons I learned as explained above. We jammed it out over post-its, whiteboards, coffee, and food in Slovenia and you can read a little more about that process in the PubSweet 2.0 RFC. But Jure trusted me, and I trusted him, and he took these ideas and, with a small team in very good speed, made them a reality. As a result, I think PubSweet is an exciting system and will only get better. Congratulations Jure, you deserve special thanks and recognition for the absolutely amazing job you have done.

Some of my projects. As you can see, partially complete. Will add more and then make this a static page.

Current

The Collaborative Knowledge Foundation’s mission is to evolve how scholarship is created, produced and reported. CKF is building open source solutions in scholarly knowledge production that foster collaboration, integrity and speed.

CKF envisions a new research communication ecosystem that gives rise to wholly unique channels for research output.

CKF was founded in October 2015 with support from the Shuttleworth Foundation.

Current

I have just been awarded a Shuttleworth Foundation Fellowship. I’m deeply honoured to have been selected. I was awarded a second year of Shuttleworth Fellowship for my work on reformulating how knowledge is produced.

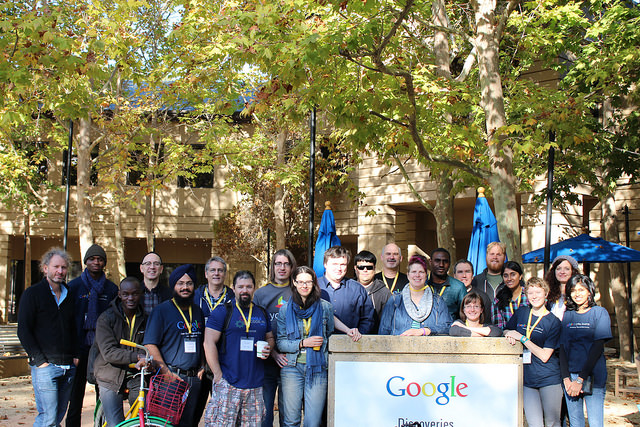

Google Headquarters in Silicon Valley, Aug 2016

http://www.thefutureoftext.org/

Organised by friends and followers of Douglas Engelbart, Adam was invited to present on collaboration and book production.

London, Sept 2016

http://www.alpsp.org/2016-Programme

Adam was invited to present at the annual ALPSP conference about ways that Open Source could change publishing.

Brussels, Nov 2016

http://www.ismte.org/page/2016EuroConference

http://www.slides.com/eset/ismte

Adam was invited to present on Open Source tools for publishers at the Brussels edition of the 2016 ISMTE series of conferences.

Riga, Latvia, Oct 2016

http://rixc.org/en/festival/Open%20Fields%20Konference/

I was invited to speak about the intersection of art, science, and publishing at the cutting edge Open Fields festival.

Berlin, Oct 2016

http://www.supermarkt-berlin.net/event/un-learning-networked-collaboration/

Adam was invited to facilitate a one day conference on collaboration and facilitation.

2016 – present

http://www.pagedmedia.org

Founded the blog about paged media.

2016 – present

http://substance.io/consortium/

Foundational member of the Substance Consortium.

2008 – present, New Zealand

http://www.booksprints.net/

Book Sprints is a methodology and a company I founded to rapidly produce books.

Nov 2016: transitioned from founder and CEO to the board. I appointed Barbara Rühling as CEO.

A Book Sprint is a collaborative process where a book is produced from the ground up in just five days. But even more important, this collaborative process captures the knowledge of a group of subject-matter experts in a manner that would be nearly impossible using traditional methods. The result at the end of the Book Sprint is a high-quality finished book in digital and print-ready formats, ready for distribution.

Book Sprints Ltd, is a team of facilitators, book-production professionals, and illustrators specialised in Book Sprint facilitation and rapid book production. Our organisation developed the original methodology and has refined it since 2008 through the facilitation of more than 100 Book Sprints. Topics have ranged from corporate documentation to industry guides, government policies, technical documentation, white papers, academic research papers, and activist manuals.

Book Sprints clients include Cisco, PLOS, F5, the World Bank, USAID, African Development Bank, Open Oil, Liturgical Press, Augsburg Fortress, Cryptoparty, OpenStack, European Commission, JISC CETIS, UNECA, Mozilla, IDEA, Engine Room, Heidy Collective, Transmediale, Google… to name a few.

"If Book Sprints did not exist, we would be forced to invent them, so powerful is the knowledge production paradigm." --Allen Gunn, Aspiration

"Book Sprints get more brilliant work out of bright people in 1 week than most project can evoke across many months." --Loy Evans, Cisco

"Writing a book through a Book Sprint turned out to be efficient, thorough and enjoyable; I can’t imagine a better outcome." --Phil Barker, JISC CETIS

2013 – July 2015. Public Library of Science, San Francisco

In 2013, I designed a platform for the Public Library of Science (PLOS), originally called Tahi but later renamed Aperta. In 2014 I was asked to lead a team to build the platform. I led the 15-strong team to the production-ready 1.0 release of this multi-million dollar project, on time and under budget, in June 2015.

Aperta is an entire submission and peer review platform for multiple scientific journals housed within a single instance. The entire system is designed to be highly collaborative and concurrent. The platform includes a manuscript production interface, HTML and LaTeX document editing support, Word ingestion, a workflow management system, task management interfaces, admin interfaces, reports, and user dashboards. The platform was built in Ember-CLI and Rails, implements a highly customised version of the Wikimedia Foundation’s VisualEditor, and uses Slanger for concurrency. It is an HTML-first system, has many innovative new approaches to journal systems, and solved many long-standing problems in this space. The project also involved a separate codebase named iHat that provides Aperta with an API service for queue-managed file conversions.

"At its core, this new PLOS editorial environment brings simplicity to the submission and peer review process by providing advanced task-management technology and a vastly improved user interface, which will enhance the publishing experience for our community of authors, editors, and reviewers." http://blogs.plos.org/plos/2015/07/publishing-initiatives-at-plos-a-look-back-and-a-look-ahead/

NB: I only work on Open Source systems. The sources are not yet available for this project.

2012 – present

PubSweet is a platform designed to assist the rapid production of books in Book Sprints. The platform is very simple to use, with very little overhead for new users. The system provides dashboards, publishing consoles, card-based workflow management (task manager), discussions, data visualisations of contributions, a dynamic table of content management, and support for multiple chapter types. PubSweet can produce EPUB and leverages book.js (see below) to produce print-ready PDF (paginated in the browser). PubSweet is written in PHP, using Node on the backend, and CKEditor as the content editor.

2012

Lexicon is a platform produced for the United Nations Development Programme to collaboratively produce a tri-lingual (Arabic, French, English) lexicon of electoral terms for distribution in Arabic regions. Lexicon provided concurrent editing for chapters with multiple terms, sorting by language, discussion forums and voting. Lexicon was written quickly in PHP with Node.

"The Lexicon was created with the aid of an innovative collaborative writing tool customized to suit the needs of this project. This web-based software allowed the authors, reviewers, translators and editors to simultaneously input their contributions to successive drafts from their various countries." http://www.undp.org/content/undp/en/home/presscenter/pressreleases/2014/11/19/undp-launches-first-lexicon-of-electoral-terminology-in-three-languages.html

2012

book.js is a JavaScript library that you can use to turn a web page into a PDF formatted for printing as a book. Take a web page, add the JavaScript, and you will see the page transformed into a paginated book complete with page breaks, margins, page numbers, table of contents, front matter, headers etc. When you print that page you have a book-formatted PDF ready to print.

book.js has given inspiration to a number of other JS pagination engines. See Vivliostyle, bookJS Polyfil, Pagination.js, simplePagination.js, and CaSSiuS.

2010 / 2012

Booktype is a book production platform. I brought this platform to Sourcefabric (Berlin) as ‘Booki’ in 2012. Booki was started in 2010. Booktype is written in Python (Django).

“Booktype resolves challenging issues in collaborative knowledge production resulting in high quality print and ebooks.” – Erik Möller, deputy director, Wikimedia Foundation

2011, 2012, 2013

The GSoC Doc Camp was an annual event over three years. It was a place for documenters to meet, work on documentation, and share their documentation experiences. The camp improved free documentation materials and skills in GSoC projects and helped form the identity of the emergent free-documentation sector.

The Doc Camp consisted of 2 major components – an unconference and 3-5 short form Book Sprints to produce ‘Quick Start’ guides for specific GSoC projects.

Each Quick Start Sprint brought together 5-8 individuals to produce a book on a specific GSoC project. The Quick Start books were launched at the opening party for the GSoC Mentors’ Summit immediately following the event.

2011

The Bookimobile was a mobile print lab in a van – essentially a van that contained all the equipment necessary to create perfect bound books. It was designed to take the ideas of Booki to people and make real books that had been created in Booki. The first Bookimobile was based on the Internet Archive’s Book Mobile and we took it to several book fairs and events throughout Europe. It was sponsored by Mozilla, CiviCRM, Archive.org, Francophonie.org, Google Summer of Code, and iCommons.

2008

Objavi is an API-based software service originally written for TWiki Book (see below), but it also served Booki and later Booktype. Objavi converts books from their native HTML into PDF for printing. It also handled other file conversions (e.g. HTML to ODT, HTML to EPUB etc). I later produced similar API-based conversion software for PLOS known as iHat. Objavi is written in Python.

2006

TWiki Book didn’t have a real project name at the time. The project was the first publishing system I built. TWiki Book was created solely to meet the needs of FLOSS Manuals (see below) and it was built on top of TWiki, a Perl-based wiki. TWiki Book included book remixing features, side by side translation, table of contents building, publishing interfaces (I actually wrote a separate PHP-based system to manage this), edit notifications, versioning, diffs, live chats and many other features. It was a good system but reasonably difficult to extend and maintain since it re-purposed existing wiki software (hence my approach to building purpose-built book production systems after this point).

2006

FLOSS Manuals was the project I founded in 2006 that got me started on this whole publishing thing. FM was, and is still, an active community of volunteers that creates free manuals about free software. There is now a foundation and several language communities (notably French and English). The contributors include designers, readers, writers, illustrators, free software fans, editors, artists, software developers, activists, and many others. Anyone can contribute to a manual – to fix a spelling mistake, add a more detailed explanation, write a new chapter, or start a whole new manual on a topic. The aim was to produce high quality free works and we succeeded – creating many fantastic manuals in over 30 languages (and still growing).

“‘Introduction to the Command Line’ is at least as clear, complete, and accurate as any I’ve read or written. But while there are countless correct reference works on the subject, FLOSS’s book speaks to an audience of absolute beginners more effectively, and is ultimately more useful, than any other I have seen.” – Benjamin Mako Hill, Wikimedia Foundation Advisory Board, Free Software Foundation Board

I am asked to talk about publishing from time to time. The following are some links to some of those presentations.

Choosing a document network

August 2015, Vancouver, Public Knowledge Project

Open Access and Open Standards

Oct 2014, San Francisco, Books in Browsers

May 2013, San Francisco, I Annotate

Oct 2013, San Francisco, Books in Browsers

I have been writing about publishing here and there. Since last year these efforts have been focused on this site. The following are some links to some of my other works:

Interview with me about the future models for publishing, published by k-verlag (Berlin).

When Paper Fails

What happens when books, ownership, authority and authors are all challenged by a network.

Visualizing Book Production

Why is no one visualising data on how we make books?

Zero to Book in 3 Days

A little bit about Book Sprints.

Forking the Book

When books are forked.

Over Thinking EPUB

Commentary on why EPUB might be confusing the issue.

Changing the Culture of Production

How to change the way we produce knowledge.

WYSIWYG vs WYSI

The evolution of the editor.

Math Typesetting

The sorry state of math typesetting.

InDesign vs CSS

Why HTML wins versus Desktop Apps.

The Blanche Dubois Economy

Why ‘Open API’ is really a silly idea.

Gutenberg Regions

Paginating in the browser.

Browser as Typesetting Engine

More commentary on the browser as a typeset engine.

The New New Typography

The evolution of Javascript Typesetting.

2008, Quarantine Island, New Zealand

This project was actually called ‘Intertidal’ but I like the name Seaweed better. Douglas Bagnall and I created a one-day community project to discover a new species of seaweed. We hosted this on Quarantine Island in the Dunedin Harbour and invited anyone to come work with us and a marine biologist from the local research center to search for a new species. The project was a community project and a reflection on the notions of species as an out-moded idea, and on taxonomy as a dying art. About 50 or 60 people – individuals, groups, and families – came out on the free boat (provided by the local sea scouts) and hiked across the island to participate on a coldish Dunedin day to search for and document seaweed. We possibly discovered a new species.

throwing into focus the ever-present potential for new knowledge. Drawing upon 19th century methods of species discovery, involving collecting, looking and drawing, their work formed questions around what we don't know.

2008, Christchurch, New Zealand

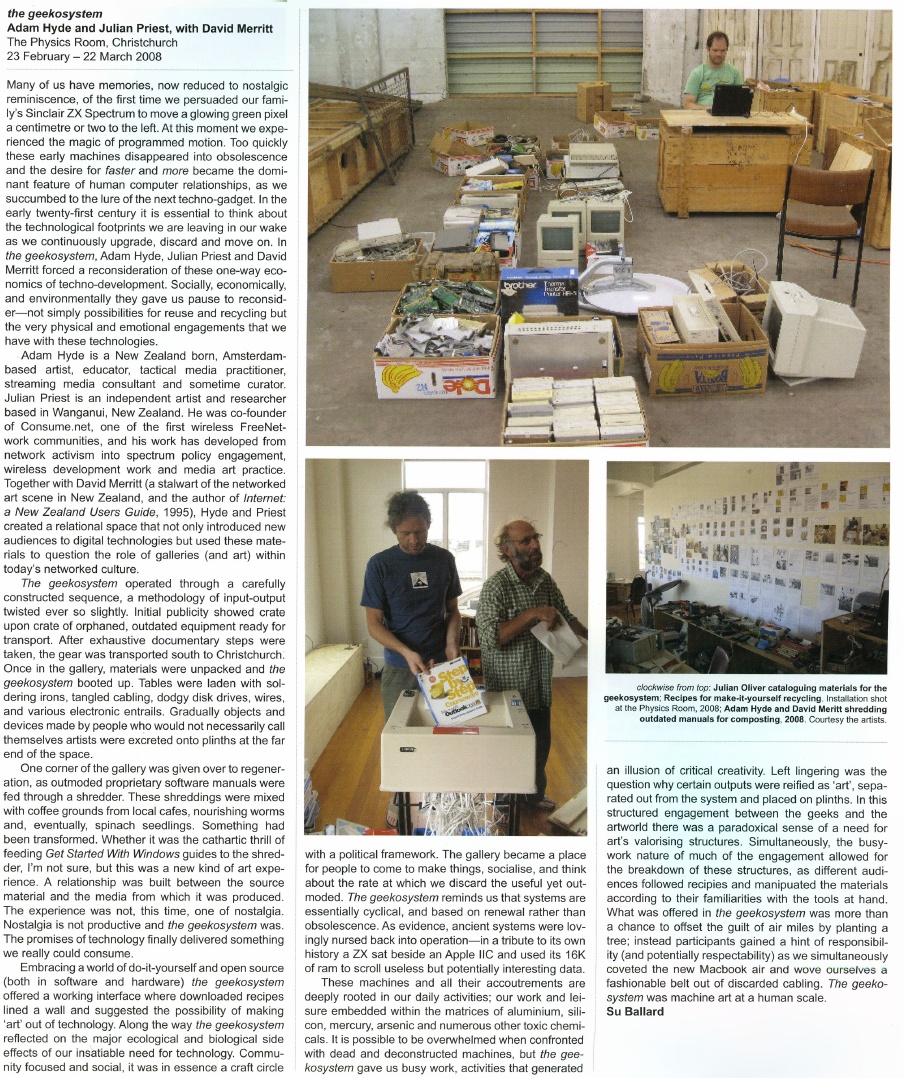

Julian Priest, Dave Merritt and I drove about a tonne of old electronics 700km or so in an old Landrover (top speed 35km/hr) from Dave’s warehouse in Wanganui to an art gallery in Christchurch, New Zealand. In the gallery, we served up the old electronics as a participatory art project and invited anyone to come and build new objects from the old. It took 3 days to get there. It was an adventure.

A Geekosystem was a participatory workshop based on a redundant technology collection created by David Merritt. Items were selected from the collection and packed into a Landrover and driven to The Physics Room in Christchurch. A call for participation was issued by The Physics Room and a group of geeks gathered to re-configure the technology into artworks. A workshop space was created and plinths were placed at one end of the gallery and populated with artworks made from the e-waste. The workshop was open to the public and continually added to for the duration of the show. Old technology books were formed into a library. Proprietary software manuals were shredded and mixed with coffee grounds. This was mulched into soil and silver beet seedlings were successfully germinated in floppy disk trays.

A Geekosystem was shown first at the Physics Room in Christchurch in 2008 and then at The Green Bench during the Whanganui Open studio week in 2008. The Geekosystem garden was transferred to a permanent location and produced vegetables for a number of years.

2006, Exhibited at the Exploratorium in San Francisco, Zero One Festival in San Jose, and many other venues.

This was a project I made with Matthew Biederman and Lotte Meijer. The Paper Cup Telephone Network (PCTN) was a free communication system and a comment on how ‘simplicity’ in technical systems is trickery. It also problematised the corporatisation and ever-increasing individualisation of modern communications.

The PCTN was a network of paper cup telephones. Just like the games played by children, anyone could put a PCTN cup to their ear to listen, or to their mouth to speak. However, the difference between the PCTN and the original game is that the “string” is connected to the World Wide Web where your voice is streamed to all the cups on the network carrying it, blocks or even miles or a continent away. We built the entire system from free software telephony systems (Asterisk and SIP phones), open and standards-based telephony protocols, cups, and string.

As simple as it was, it remains the most difficult technical project I have ever undertaken.

2006, exhibited at the Waves exhibition in Riga, Latvia

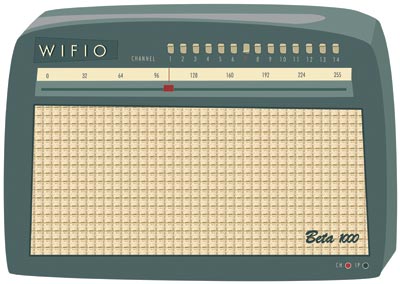

Wifio was a project I did with Lotte Meijer and Aleksandar Erkalovic. Wifio was a comment on the naivety with which we broadcast our personal information. It was a hardware UI and software that allowed anyone to tune into the World Wide Web wifi traffic. If someone near you was browsing the web on a wifi network, you could simply tune in with Wifio by selecting the right channel and tuning into their IP address.

…but don’t worry, you don’t need to know what their “IP Address” is, in fact you don’t even need to know what an IP address is! Just move the dial until you hear their emails or what they are saying in chatrooms.

I was proud when Julian Oliver (an old buddy from NZ) referenced this project as inspiration for one of his works.

2007

r a d i o q u a l i a (see below) were commissioned to make a new work for Forte di Bard in Valle d’Aosta in Italy for “Cima alle stelle (Stars)”, a large exhibition showing historical works by major masters like Dürer, Tintoretto and Guercino; contemporary artists such as Pierre Huyghe, Olafur Eliasson and others; and astronomical instruments and writings by Copernicus, Galileo, Kepler, Newton and Einstein.

We made a new site-specific sound installation inside two of the glass elevators which take visitors from the arrival area of Forte di Bard to the gallery levels. Much of the elevator travel is external to the Forte (pictured below). Sound Elevator consisted of two linked sound environments inside the elevators. As the elevators ascended to the exhibition halls, visitors experienced an auditory journey from the local celestial environment to the edges of the Universe. In the first elevator, visitors sonically travelled through the Earth’s ionosphere and magnetosphere, hearing our closest star, the Sun, interacting with our planetary atmosphere. The upward and outward journey continued in the second elevator, with sounds from our planetary neighbours, the sonic echo of distant stars, and finally the sound of the Big Bang itself.

2006/2007 Antarctica

I did a 2-month residency in Antarctica at SANAE (South African Research Base) as part of I-TASC, ultimately a failed network of individuals and organisations working collaboratively in the fields of art, engineering, science and technology on the interdisciplinary development and tactical deployment of renewable energy, waste recycling systems, sustainable architecture and open-format, open-source media. But it was still a great experience.

The coolest thing about it was the 2 weeks each way on the beautiful icebreaker the SA Agulhas (now decommissioned). I kept some diary pages on the Interpolar site. I also did a few other projects while there including Polar Radio (see below).

The worst thing about it was that we shouldn’t have been there. There is no need for anyone to be in Antarctica. Most of the ‘science’ projects are strategic positioning for a land grab when the time comes. Some science might be justifiable… but arts projects?

Leaving Antarctica I cried my eyes out. It was just too much for me to deal with. Too amazing. After Antarctica, I gave up the art world. I couldn’t think of anything else the art world could do for me.

It was a conflicted but beautiful experience.

2006/2007, Antarctica

Polar Radio was a community radio project initiated by I-TASC and r a d i o q u a l i a. The first prototype station began FM broadcasts on 29 December 2006 in the Dronning Maud Land sector of Antarctica, where South Africa maintains its base, SANAE IV. It was Antarctica’s first artist-run radio station. It was the first step towards establishing a permanent polar radio presence in Antarctica, which may eventually broadcast in between geographically dispersed Antarctic bases.

But y’know…I wish I hadn’t done it. When I first got to Antarctica I turned on a radio and went through many many frequencies… and I heard nothing… that was amazing. Where else in the world can you not hear anything on your radio? I then went ahead and polluted the spectrum. Darn. I regret it.

Polar Radio was part of a series of projects run by I-TASC – the Interpolar Transnational Art Science Constellation.

2005, Trans-Siberian Express

Capturing the Moving Mind was a conference on board the Trans-Siberian train. It was about new forms of movement and control, war and economy, in the current situation. 50 international researchers, artists and activists participating in the mobile conference formed a mobile production unit aboard the train. For the audiovisual streams, Luka Princic and I developed a free software ‘mobicasting’ platform which enabled mobile transmission of material on the web from mobile phones on the train. Mobicast was initially developed during a residency I had at MAMA Media Lab (Zagreb, Croatia).

It was a great project but really really fragile. The tech of the time was not up to it. Mostly it ran on Puredata and some obscure bits of code from here and there. Still it worked. Best moments were hanging out on the train laughing at people trying to be ‘artists’ in real time… huh? …and getting sardonic with Dr Gillian Fuller – the world’s best queue hacker. Watching the train wind around the Gobi desert… also kinda cool.

mobicast was initially developed to overcome the problem of delivering live video from a moving train to the internet. Traditionally this is the domain of OB (Outside Broadcast) technologies or expensive vehicular satellite uplink hardware. However, mobile phones are now very capable remote broadcast environments. Many modern phones record images, video and audio, and allow the editing and transfer of these media through wireless data networks (e.g. GPRS) with almost global coverage. The quality of these recorded media has generally been considered 'low-fi' but fidelity is increasing and, importantly, the expectations of networked media are becoming more appropriate. Once upon a time there was a mythic "broadcast quality" threshold all media had to pass before being accepted by broadcast organisations and (theoretically) audiences. Now, however, large scale media organisations actively call for content generated by "on the spot" accidental observers. The tide and scale of remote media is changing. The nature of experimental media on this type of platform is the intentional playground of mobicasting. With this emerging new type of media witness, cultural forms are also emerging. Multiple networked media phones are in themselves a platform for collaborative cultural development and open interesting doors for experimental media.

2001-2004, International

For many years I had a wonderful mentor – Tetsuo Kogawa. He is the father of MiniFM. I saw Tetsuo build a mini FM transmitter at the Next Five Minutes festival in Amsterdam. Sometime after that, I asked him if he would teach me how to make them too, and he very generously spent a good deal of time making sure I understood the ins-and-outs of the process. Together we designed a workshop and Tetsuo worked out even simpler ways to build the transmitters. For many years I travelled the world leading transmitter-building workshops and often Tetsuo would stream in from his studio in Tokyo to talk about the idea and give a quick demonstration before we started building.

Later Tetsuo and I created a project called SilentTV which was the same idea but using simple elements to broadcast TV.

I’m forever grateful to Tetsuo for his kindness and mentorship.

November 2003, South Africa

re:Play explored the world of the computer game. It featured an exhibition of artists’ computer games by Andy Deck, Josh On + Futurefarmers, Mongrel, Natalie Bookchin, the escapefromwoomera collective and Max Barry, and a programme of workshops and lectures. re:Play was a collaboration between the Institute for Contemporary Art, Cape Town and r a d i o q u a l i a. It launched at L/B’s – The Lounge at Jo’Burg Bar in central Cape Town, South Africa, and went on to be exhibited at Artspace and the Physics Room in New Zealand.

The games in the exhibition were not typical computer games. While all of them encouraged play, and involved a gaming objective, unlike regular computer games, they had a strong political dimension and explored how play, interaction and competition can be utilised in an artistic context.

The re:Play education programme included talks and workshops led by Graham Harwood of Mongrel and r a d i o q u a l i a at Cape Town High School; Fezeka Senior Secondary School in Gugulethu; the Alexandra Renewal Project, Johannesburg; and Wits School of Arts, University of the Witwatersrand, Johannesburg.

2002, The World

Another project that got a lot of attention for r a d i o q u a l i a, most notably through being exhibited at the New Museum in NYC, but also at Banff and other places. I loved this project because it brought together several threads.

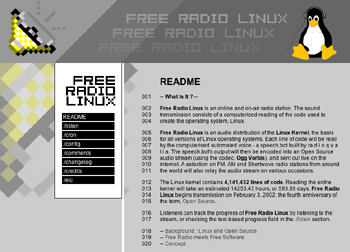

Formally, Free Radio Linux was an online and on-air radio station. The sound transmission was a computerised reading of the entire source code used to create the Linux Kernel, the basis of all distributions of Linux.

Each line of code was read by an automated computer voice – a speech.bot utility I built for the work. The speech.bot’s output was encoded into an audio stream, using the early open source audio codec, Ogg Vorbis, and was broadcast live on the internet. FM, AM and Shortwave radio stations from around the world also relayed the audio stream on various occasions.
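To give a feel for what reading code aloud involves, here is a minimal sketch of a character-by-character reader. It is not the original speech.bot: the `SPOKEN_NAMES` table and the `spell_out` function are hypothetical, and the sketch stops at producing words, leaving out speech synthesis and stream encoding entirely.

```python
# Hypothetical lookup table: how a handful of characters might be spoken.
# The vocabulary of the real speech.bot is not documented here.
SPOKEN_NAMES = {
    "$": "dollar sign",
    ",": "comma",
    "#": "hatch",
    "\n": "new line",
    "0": "zero", "1": "one", "2": "two", "3": "three", "4": "four",
    "5": "five", "6": "six", "7": "seven", "8": "eight", "9": "nine",
}


def spell_out(source):
    """Turn source text into the words a speech synthesiser would read."""
    words = []
    for char in source:
        if char == " ":
            continue  # plain spaces are skipped in this sketch
        # Characters without an entry (letters, for example) pass through as-is.
        words.append(SPOKEN_NAMES.get(char, char))
    return " ".join(words)


print(spell_out("1$$,#4\n72$$"))
```

Feeding the resulting word stream to any text-to-speech engine, and the audio to an encoder, would complete the chain described above.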

The Linux kernel at that time had 4,141,432 lines of code. Reading the entire kernel took an estimated 14253.43 hours, or 593.89 days.
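As a rough sanity check, the arithmetic behind those figures can be reproduced in a few lines. The average reading time per line is derived here from the two quoted numbers; it is not a figure from the original project.

```python
# Figures quoted in the text.
LINES_OF_CODE = 4_141_432
ESTIMATED_HOURS = 14_253.43

# Hours of continuous, round-the-clock reading expressed as days.
estimated_days = ESTIMATED_HOURS / 24

# Derived value (an inference, not a documented figure): the average
# number of seconds of speech per line implied by the numbers above.
seconds_per_line = ESTIMATED_HOURS * 3600 / LINES_OF_CODE

print(f"{estimated_days:.2f} days")
print(f"{seconds_per_line:.2f} seconds per line")
```

So the estimate works out to a little over twelve seconds of speech per line of code, which seems plausible for a synthetic voice spelling out punctuation.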

Listeners tracked the progress of Free Radio Linux by listening to the audio stream, or checking the text-based progress field in the ./listen section of the website (which is no longer up)…

Essentially this was all about how free radio and free software were weirdly the same. If you ever worked with free radio geeks, you will know they are nerdy technophiles who believe in the purity of what they are doing. Very much the same as Open Source geeks at the time. Most were interested in the tech and the political ideal of the respective mediums (radio and software). So, FRL was a comment on this. It was also a comment on how free radio was, ironically, very difficult to achieve on the internet unless serious attention was paid to developing free codecs. But also FRL had two other elements going for it. The first was to (again) poke fun at the ridiculous hyperbole that surrounded the open source movement. People were expounding this ‘amazing new phenomenon’ and extrapolating how it would change the world (much as they did about wikis a short time after) when they had never come into contact with code or geeks. So this was an attempt to expose those people to the code…or what it sounded like. But also, at the time there was a lot of early talk about how to preserve digital media (a problem still not solved) and radio waves apparently never die… so by broadcasting the Linux kernel into space we were preserving it on the oldest medium ever, forever. Hehe…

I did, however, feel very sorry for the attendants at the New Museum who had to work 8-hour shifts listening to “one dollar sign dollar sign comma hatch four new line seven two dollar sign dollar sign…”

2002, New York City

This was, in theory, a radio network but in reality, just a few transmitters got installed. Still, it was fun. Thing FM was based in NYC and built during a residency I did at the Thing in NYC, the same week that the Yes Men came into the office to film their ‘shit burger’ stunt. They came into the Thing and asked who wanted to go and I didn’t go! doh! Anyway, we built the network using internet audio (via wireless and wired connections) and miniFM. Each of the transmitters had about 0.1 W output and sourced its audio live from the internet using the Frequency Clock scheduling system I had built earlier.

This partly adopts the ethic of micro-radio as founded by Tetsuo Kogawa, where many low powered FM transmitters are coupled to create an effective broadcasting entity that ‘falls beneath the radar’ of the communication authorities. fm.thing.net combined this ethic with that of net.radio which was a relatively new phenomenon focusing on the use of the internet as a carrier signal, best illustrated by the practices of the Xchange network. By combining the net.radio and micro-radio we hoped to build an efficient radio network in New York that used the internet as a primary carrier of the audio for re-broadcasting on legal or almost legal microFM broadcasts.

Hanging with Ted Byfield and Jan Gerber was a highlight of this experience. Wolfgang Strauss was also pretty fun but I was so intimidated by him. He was just so cool. Also sharing a tenement apartment in Ludlow Street with 3 people (bath in the kitchen) was pretty fun.

2001

Radio Astronomy was an art and science project which broadcast sounds intercepted from space, live on the internet and on the airwaves. The project was a collaboration between r a d i o q u a l i a and radio telescopes located throughout the world. Together we were creating ‘radio astronomy’ in the literal sense – a radio station devoted to broadcasting audio from our cosmos.

Radio Astronomy had three parts:

Listeners heard the acoustic output of radio telescopes live. The content of the live transmission depended on the objects being observed by partner telescopes. On any given occasion, listeners may have heard the planet Jupiter and its interaction with its moons, radiation from the Sun, activity from far-off pulsars or other astronomical phenomena. Honor from rQ later made a TED Talk about it.

Dino, drummer from HDU, did the website design…that’s gotta rate…

2001, Latvia

In 2001 I had the good fortune to be part of the Acoustic.Space.Lab project which started a long love affair with the RT32 radio telescope. Formerly a cold war device, this telescope was liberated when the Russian Army pulled out of Latvia. I worked with this telescope as an artistic device and with the generous scientists for many years after. The doco clip below introduces the explorations of the international Acoustic Space Lab Symposium which took place on the site of RT-32 in 2001.

Highlights of this period in Latvia included being evicted by Russian builders, getting a hernia, and being amazed Marc Tuters survived eating so many dodgy looking mushrooms he found in the forest.

May 2001, Scotland and also later…

I have come to realise there is just too much stuff I have tinkered with to comment on. Open Sauces falls into that bucket. Google tells me this was 2001. Essentially I got sick of all the Open Source blah blah of the time.. everything was suffixed by OPEN and it got very tiring (Open Gov, Open Hardware, Open Society…). No critical reflection on the fact that geek methods are geek methods and they are not transportable – AND – OPEN processes, methods etc existed well before geeks came along and inherited the word. No geek invented openness. I’m still tired of this I have to say…still…. I created Open Sauces which was an open database of recipes… anyone that did a residency could add their favourite recipe and you could just tick all the ingredients you have in your fridge and get a recipe to suit… doesn’t sound too revolutionary but at the time this sort of thing didn’t exist. It was a comment on this abuse of the use of the word ‘open’ and how cooking way preceded sharing of ‘code’ / ‘instructions’ etc… and also how food is probably the most important part of any collaborative project, whereas unsocial nerdy talk is optional. Later Fo.am in Brussels were inspired by the idea and started an Open Sauces theme.
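The “tick what is in your fridge” idea can be sketched as a simple subset match. This is an illustrative reconstruction, not the original Open Sauces code: the `RECIPES` data and the `recipes_for` function are made up for the example.

```python
# Hypothetical recipe database: recipe name -> set of required ingredients.
RECIPES = {
    "pancakes": {"flour", "egg", "milk"},
    "omelette": {"egg", "butter"},
    "tomato pasta": {"pasta", "tomato", "garlic"},
}


def recipes_for(fridge):
    """Return the recipes whose ingredients are all in the fridge."""
    # `needed <= fridge` is Python's subset test for sets.
    return sorted(name for name, needed in RECIPES.items() if needed <= fridge)


print(recipes_for({"egg", "butter", "milk", "flour"}))
```

With eggs, butter, milk and flour ticked, the sketch suggests the omelette and the pancakes but not the pasta.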

2000, Amsterdam

I’m particularly proud of this project. It came about when I was a very naive newly arrived resident of Amsterdam. I suggested to Geert Lovink this idea for a festival and he said to speak to Eric Kluitenberg. Both huge legends in my mind you understand… I mustered up the courage to suggest it to Eric who was a cultural curator at De Balie at the time. He said he would think about it and I thought I wasn’t very convincing. A week later he called me up and said let’s do it! Whoot!

The festival was held in Amsterdam in October 2000. Net.congestion was an intensive three-day celebration and critique of the new cultures that have arisen from all forms of micro-, narrow- and broad- casting via the internet, now collectively known as streaming media.

The event covered most of the interesting ground of the time for streaming media, from the transformation of issues surrounding intellectual property to the uses of streaming as a mobilisation tool for global resistance through to the more rarefied questions of aesthetics and how narratives are transformed when embedded in networks. The overwhelming experience of many visitors to Net.congestion was a sense of tools, networks and sensibilities being re-purposed, returning us, again and again, to a primary experience of the net as a social space.

Net.congestion occurred just months before the dot.com bubble burst, exploding the ‘new economy’ and ‘the long boom’ with its fantasies of a world in which the economic laws of gravity had been repealed. There is no doubt that if the same event were to be held now, the atmosphere would be markedly different. It is not that Net.congestion was an industry event which depended on the hype for its existence, as the very title indicates that we mixed a healthy dose of skepticism with our festivities. But none of us, however critical, can entirely escape the zeitgeist and there is no doubt that in those brief heady days Warhol’s aphorism was re-written; we could all dream of becoming billionaires, if only for 15 minutes. A strange historical phase when (particularly for anyone involved in streaming media) the normally fixed boundaries between business, art, technology, science fantasy and just plain bullshit temporarily blurred to create a moment of unique cultural hysteria. In that sense our timing was perfect.

2000

In an attempt to make theorists a little more funky, I made some software they could use to put their brainy thoughts to glitchy syncopation. It was mainly used by Eric Kluitenberg, including one memorable performance at Club Otok in Dubrovnik.

1999, Amsterdam

I helped found an organisation during the NATO bombing of Serbia and Kosovo in 1999. It was the international support campaign for independent media in Yugoslavia, including the famous Radio B92 media centre, and was in operation between March and July 1999. We did some pretty cool things but mostly I was very happy to be involved in what must have been one of the web’s early large-scale activist campaigns. It was also the start of my longish relationship with Amsterdam as XS4ALL offered me a job and I stayed for a few years. I still have a bike there somewhere.

What was tricky, though, is that I agreed to go to Skopje to assist an Albanian refugee radio station (Radio 21). It was kinda nerve-wracking. There were literally bombs set to explode to take out as many Albanians as possible. Some kids lost their legs across the street from where I was working. I was a milk and cookies boy from NZ… what was I doing here? Still, I stuck it out and we managed to set up quite an innovative way of getting radio transmissions out of the refugee camps to Radio Netherlands Shortwave… I’ll write that up when I get time.

1998 – 2004 or so, The World

This was one of the earliest r a d i o q u a l i a projects and how I learned to program. To understand this project you have to understand the dark ages of the internet when video and audio hardly existed. Essentially we built a media scheduling system that allowed you to build archives of live and pre-recorded content, tag them, and then schedule them. All built in JavaScript… remember, these were the days when JavaScript was very rudimentary.

The Frequency Clock was originally conceived as a mechanism to control FM transmitters over the internet. In essence it was a networked timetabling system, connecting globally dispersed FM transmitters so they could broadcast the same internet audio simultaneously. The original player was a popup window but we also built desktop apps to do the same thing using VisualBasic (Win) and RealBasic (Mac). All open source.
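The networked timetable idea can be sketched as a shared schedule that every transmitter consults to find out which stream it should be relaying at a given moment. The original was written in JavaScript; this Python sketch is only an illustration, and the `Slot` structure, the example.org URLs and the `stream_at` function are assumptions rather than the Frequency Clock’s actual design.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Slot:
    start: datetime   # UTC start time (inclusive)
    end: datetime     # UTC end time (exclusive)
    stream_url: str   # live or archived stream to relay
    tags: tuple = ()  # free-form labels for the content


# Hypothetical shared timetable; every transmitter reads the same one.
TIMETABLE = [
    Slot(datetime(2000, 1, 1, 20, 0, tzinfo=timezone.utc),
         datetime(2000, 1, 1, 21, 0, tzinfo=timezone.utc),
         "http://example.org/live", ("live",)),
    Slot(datetime(2000, 1, 1, 21, 0, tzinfo=timezone.utc),
         datetime(2000, 1, 1, 22, 0, tzinfo=timezone.utc),
         "http://example.org/archive/show-1", ("archive",)),
]


def stream_at(now: datetime, timetable=TIMETABLE) -> Optional[str]:
    """Return the stream scheduled for `now`, or None if nothing is scheduled."""
    for slot in timetable:
        if slot.start <= now < slot.end:
            return slot.stream_url
    return None


now = datetime(2000, 1, 1, 21, 30, tzinfo=timezone.utc)
print(stream_at(now))
```

Because each transmitter resolves the same UTC time against the same timetable, globally dispersed transmitters end up relaying the same stream at the same moment.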

However… then we realised that video could also work… and we used it to control community TV channels in Amsterdam and Linz and we also controlled giant video billboards in Estonia and a whole lot of other things. It was exhibited a lot, most notably at the Walker when Steve Dietz was still there. We even installed a transmitter in the roof of De Waag! It was a remarkable experiment for its time. Yes, yes, pre-Napster and YouTube and all those other toys… while writing this I found some kind of prototype online.

1996 – 2008, the world.

Performing solo as ‘eset’ and with Honor Harger as r a d i o q u a l i a I did a lot of sound performances, most using sounds from space and either live performances in real space or on radio. Some stuff still exists online:

https://soundcloud.com/radioqualia


This was the project that liberated me from the south and the reason I moved to Europe with no money and no return ticket. My plan was to make coffee and do some arty stuff in London. Thankfully, NATO bombed Serbia (hoho) and everything changed.

What I really loved about this time was that I felt part of a lovely international community of artists. We used to travel around and bump into each other in various crazy places. This group included people like Marko Pelijhan, Heath Bunting, Rachel Baker, James Stevens, Luka Frelih, the Mama crew, Lev Manovich, Steven Kovats, Matthew Biederman, Giovanni D’Angelo, Zita Joyce, Adam Willetts, Rasa Smits, Raitis Smits and so many many others…it was an awesome time.

r a d i o q u a l i a was an artist project that consisted of myself and Honor Harger. I have described some of our exhibitions and performance projects above, and listed some below. There were many more.

In August 2004, r a d i o q u a l i a was awarded a UNESCO Digital Art Prize for the project Radio Astronomy. In September 2003, we were awarded the Leonardo-@rt Outsiders 2003 New Horizons Prize together with the participants of the Open Sky installation at the @rt Outsiders exhibition at the Museum of European Photography in Paris.

Selected r a d i o q u a l i a exhibitions and performances:

Lecture & performance at the Centre Pompidou, Paris, France

Work: Sonifying Space, as part of the Space Art conference

Exhibition at the New Museum of Contemporary Art, New York, USA

Work: Free Radio Linux, as part of the OpenSourceArt_Hack exhibition

Exhibition at the NTT InterCommunication Centre, Tokyo, Japan

Work: Radio Astronomy, as part of open nature, a show curated by Yukiko Shikata

Online exhibition / commission / installation at Gallery 9, Walker Art Centre, USA

Work: Free Radio Linux

Exhibition at Arsenals Exhibition Hall, Riga, Latvia

Work: solar listening_stations, part of WAVES

Exhibition at HMKV, Dortmund, Germany

Work: solar listening_stations, part of Solar Radio Station

Exhibition at the Walter Philips Gallery, Banff, Canada

Work: Free Radio Linux, as part of The Art Formerly Known As New Media

Exhibition at Centre d’Art Santa Monica, Barcelona, Spain

Work: Radio Astronomy, as part of Sonar 2005

Performance at Tesla, Berlin, Germany

Work: from polar radio to solar wind

Performance, La Batie Festival, Geneva, Switzerland

Work: signals as part of signal-sever

Exhibition at Ars Electronica, Linz

Work: Radio Astronomy

Exhibition at ISEA 2004, Helsinki, Finland

Work: Radio Astronomy

Symposium & Performance, Ventspils International Radio Astronomy Centre, Latvia

Work: Acoustic Space: RT32: Orchestrating the Solar System

Broadcast on Radio New Zealand

Work: Revolutions Per Minute 1: Frequency Shifting Paradigms in Broadcast Audio

Broadcast on Radio New Zealand

Work: Revolutions Per Minute 2: Little Star

Exhibition at Small Gallery, Los Angeles, USA

Work: comma.data.space: 11 Ghz

Performance at the Moving Image Centre in Auckland, New Zealand

Work: comma.data.return :: 56:30 – 21:1

Performance at Version festival, Auckland, New Zealand

Work: listening_stations v0.3: langmuir waves

Exhibition at the Physics Room, Christchurch, New Zealand

Work: re:Play

Exhibition at Artspace in Auckland, New Zealand

Work: re:Play

Exhibition & education programme, South Africa

Work: re:Play

Exhibition at the Reg Vardy Gallery, Sunderland, UK

Work: Free Radio Linux, as part of the Art for Networks exhibition

Exhibition at Museum of European Photography / Maison Européenne de la Photographie, Paris, France

Work: listening_stations, as part of @rt Outsiders exhibition

Exhibition at the Physics Room, Christchurch, New Zealand

Work: data.spac.ereturn, as part of the Audible New Frontiers exhibition

Locative media Residency at K2, Karosta, Latvia

Work: Locative Media

Exhibition at Turnpike Galleries, Leigh, UK

Work: Free Radio Linux, as part of the Art for Networks exhibition

Exhibition at Fruitmarket Galleries, Edinburgh, UK

Work: Free Radio Linux, as part of the Art for Networks exhibition

Radio show on Resonance 104.4FM, London, UK

Work: r a d i o q u a l i a on resonanceFM

Exhibition at the Generali Foundation, Vienna, Austria

Work: listening_stations as part of the Geography and the Politics of Mobility exhibition

Exhibition at Chapter, Cardiff, UK

Work: Free Radio Linux, as part of the Art for Networks exhibition

Performance & Broadcast, Ars Electronica, Linz Austria

Work: Radiotopia @ Ars Electronica

Radio Broadcasts on Austrian National Radio, Vienna, Austria

Work: i s o l

Exhibition at CCCB, Barcelona, Spain

Work: frequency clock – gallery installation – [sNr v.0.1]

Sonar 2001, Barcelona, Spain

Action & Broadcast, Ars Electronica, Linz, Austria

Work: Take Over Cultural Channel

Performance, Residency & Symposium at Ventspils International Radio Astronomy Centre, Latvia

Work: Acoustic Space-Lab

Exhibition at Video Positive, Liverpool, UK

Work: Frequency Clock – gallery installation – [ vp00 v.0.0.3 ]

Workshop & Performance, Adelaide Festival of the Arts, Adelaide, Australia

Work: Closing the Loop 2000

Seminar & Performance at Lux Centre, London, UK

Work: Tuning the Net

Performance at the Stockton Festival, Stockton, UK

Work: transitions & undercurrents part of live-stock

Exhibition & Performance at OK Centrum, Ars Electronica, Linz, Austria

Work: pso.Net, as part of Sound Drifting

Exhibition at Experimental Art Foundation, Adelaide, Australia

Work: Frequency Clock – gallery installation – [ eaf v.0.0.2 ]

Exhibition at Experimental Art Foundation, Adelaide, Australia

Work: Illata

Exhibition at Contemporary Art Centre of South Australia, Adelaide, Australia

Work: e Q

Performance at LADA98 Festival, Rimini, Italy

Work: we are alive and well but terribly uncommunicative

Exhibition, Ars Electronica, Linz, Austria

Work: Frequency Clock – gallery installation – [ aec98 v.0.0.1 beta ]

Performance, Ars Electronica, Linz, Austria

Work: 56h LIVE!: Acoustic Space

Exhibition at Bregenz Festival, Bregenz, Austria

Work: gl^tch.bot

Performance and presentation at net.radio.days 98, Berlin, Germany

Work: self.e x t r a c t i n g.radio (.ser)

Online Project

Work: self.e x t r a c t i n g.radio (.ser)

Exhibition at the Machida City Museum of Graphic Arts, Tokyo, Japan

Work: The Qualia Dial

Exhibition at Fabrica New Media Art Institution, Italy

Work: Balance

Streaming Suitcase

PliegOS

Re:mote

Low Res

Skint Stream

Open Source Streaming Alliance

Open Channels for Kosovo

self.extracting.radio

ovalmaschina

Gema

simpel

Much more to come.

Most of the book production platforms in circulation have very few workflow tools to speak of. This is not necessarily a bad thing. A platform that is ‘just an editing environment’ is still pretty powerful. If you do need tools to assist with workflow, then in situations where a small group know each other well they can use email or, if in real space, Post-it notes or paper to track what needs to be done next. In many cases, a live chat in the interface, or an integrated topic-based forum, will be enough to satisfy many workflow needs, and in other cases the platform can be augmented by external systems such as wikis, online spreadsheets, content management systems and other tools to meet particular requirements.

However, there are a number of situations where these ‘solutions’ become unsatisfactory. This is especially true for organisations which have a large number of people involved in processing content, or which have sophisticated content-processing needs (such as book publishers).

Before going too much further, let me clarify what “workflow tools” are. In the broadest sense, they are tools that help you to know what needs to be done, and when it needs to be done by. Using this very broad definition, we can see that mechanisms such as discussion forums and live chats are workflow tools. By chatting with colleagues through a live chat or forum, you can work out what needs to be done next, or get a ‘notification’ (a shout out) that it needs to be done now… From there, systems can evolve into complex technical environments which are either relatively open-ended (such as Trello) or relatively closed, such as hard-coded workflow pipelines.

The first book production system I built for FLOSS Manuals was ‘built’ on top of TWiki in 2006-2007 and had some basic workflow tools, namely:

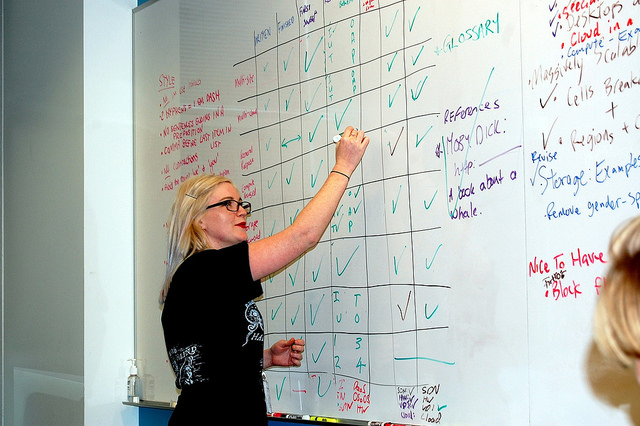

These tools were simple and effective and served us well for a number of years. I also incorporated similar mechanisms into Booktype and PubSweet. In addition, when we used these platforms for Book Sprints, lots of whiteboard scribbles and Post-its were utilised.

In a Book Sprint, notably, the facilitator is the main coordinating workflow mechanism. I point that out because it is important to understand that workflow tools can include humans – often the easiest way to know what needs to be done, and when it needs to be done by, is to get someone else to tell you.

And let’s not forget that human factor! We are living at a time when we tend to want to programmatically solve problems with overly prescriptive technical systems. But sometimes underdetermining the technical systems is the right way to go.

I first tried pushing past these basic software workflow tools with Booktype – a book production system I founded, now housed with Sourcefabric. I leveraged the kanban idea of multiple columns (phases) populated by ‘todo’ items to build the equivalent of a digital kanban system, making the first simple prototype in a demo for the Frankfurt Book Fair in 2012. The inspiration came from Pivotal Tracker and the Open Source Fulcrum.

Most often the technology used to set up a kanban system is a whiteboard, with marker pens to draw and label the columns, and Post-it notes as a marker of the tasks. This kind of system is popular in unconferences, and also often used by software development houses. We also use this type of kanban approach a lot in Book Sprints.

The task manager (as I called it) and the production system were linked to each book and worked nicely. Although this system didn’t make it into the core code of Booktype, this version got the idea across, and later Juan Gutierrez made an integrated version for PubSweet. (During 2014, I also built this idea into a system for PLOS.)

The task manager had a whiteboard-like interface that the user could use to create columns (phases). Cards could be added to each phase and simple notes kept on each card. It was simple but effective.

In time I discovered Trello, and Why Cards are the Future of the Web by Paul Adams – these examples placed cards nicely within evolving design paradigms of the Internet, and I started to think about this model in more detail.

There are many advantages to cards, not the least being that cards can ‘follow the user’ – think of them as powerful work-unit-applications that can be accessed by a user within any context where they are needed.

Additionally, when thinking of digital cards within the digital workflow-kanban paradigm, the nice thing is that it is a very simple model. There are essentially just two elements – cards and columns. You can create as many of each as you like. Further, you can name the columns and cards anything you like. That means these two devices can be used to represent any number of simple or complex workflows. You can start from the kanban default – three columns marked ‘to do’, ‘doing’ and ‘done,’ and add cards for each task – progressing them from left to right as tasks progress from ‘to do’ to ‘done.’ This is the default configuration when creating a new Trello board.
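To make the cards-and-columns model concrete, here is a minimal sketch in Python. It is not the Booktype, PubSweet or Trello code; the names `Card`, `Board`, `move` and `default_board` are invented for illustration, and it assumes card titles are unique on a board.

```python
from dataclasses import dataclass, field


@dataclass
class Card:
    """One unit of work, with free-form notes (hypothetical structure)."""
    title: str
    notes: list[str] = field(default_factory=list)


@dataclass
class Board:
    """Column name -> cards. Dicts keep insertion order, so columns stay left to right."""
    columns: dict[str, list[Card]] = field(default_factory=dict)

    def add_column(self, name: str) -> None:
        self.columns.setdefault(name, [])

    def add_card(self, column: str, card: Card) -> None:
        self.columns[column].append(card)

    def move(self, title: str, target: str) -> None:
        """Move the card with this title into the target column."""
        destination = self.columns[target]  # fail early if the column is unknown
        for cards in self.columns.values():
            for card in cards:
                if card.title == title:
                    cards.remove(card)
                    destination.append(card)
                    return
        raise KeyError(title)


def default_board() -> Board:
    """The kanban default: 'to do', 'doing' and 'done'."""
    board = Board()
    for name in ("to do", "doing", "done"):
        board.add_column(name)
    return board


board = default_board()
board.add_card("to do", Card("Write chapter 1"))
board.move("Write chapter 1", "doing")
```

Because columns and cards are just named containers, the same two classes could model a three-step to-do list or a longer editorial pipeline simply by adding and renaming columns.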

Replicating this system in an application is pretty easy to do. Trello is an excellent example. While Trello is not easily integrated into another technical system (such as an in-house publishing system), it is interesting in that the designers, while surely tempted by all that a web application could offer, have endeavoured to keep the Trello system true to the kanban ideology of useful but simple. With Trello, therefore, you can add columns, and cards to columns, naming each as required. When you open a card, however, you have some nice widgets for making lists, comments, discussions, attaching files etc. This is something paper cannot easily do, at least not with the small real estate afforded by Post-it notes.

Trello is a lovely application precisely because these systems, like the paper kanban, have been designed to be simple to use and serve as many generic use cases as possible.

However, while digital kanban systems like this are useful as standalone ‘context agnostic’ systems, they could be much more powerful for publishers (or anyone) if this simplicity and flexibility could be preserved while the system also served their specific use case. The trick is to preserve the simplicity and flexibility to allow publishers to model existing and future workflows in an easily ‘grok-able’ drag and drop manner (similar to Trello), while building cards that reflect the publisher’s specific needs (to invite editors, push content to external vendor services, perform peer review etc).

Building cards like this means pushing cards away from the Trello/kanban generic-use paper metaphor towards a more sophisticated specific-use digital and networked paradigm. This means embracing the idea that cards are networked applications and building cards that precisely serve the publisher’s needs and integrate into their existing internal and external systems.

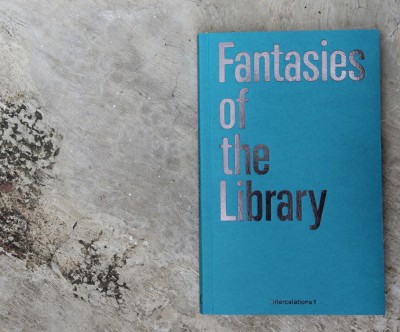

Fantasies of the Library is a book released last week by Berlin publisher k-verlag. There is an interview in it with me about the future of book publishing beyond the proprietary model. I also talk about my current work for the Public Library of Science and the relationship between Open Access and Open Source.

The full interview is also online and can be read here.

My favourite passage is this:

Charles Stankievech: “But why should one value open source and open access? What are the political ramifications of such a philosophy and practice?”

Adam Hyde: “Because both provide more value to humanity. Political ramifications are vast and complex. I like to think about the personal aspects of this choice, however. Living a life of open source and open access forces you to peel away layer by layer the proprietary way of thinking, doing, and being that we have all grown up with. It can be a very painful process, but it’s also extremely liberating and healthy. Largely, it actually means learning to live without fear and paranoia of people ‘stealing your ideas’. That’s quite a freedom in itself.”

Note: this is an early version. It has been cleaned up some, but still needs links and screenshots… Apologies if the rawness offends you 🙂

This series is skipping around the toolchain, depending on what’s most in my mind at the moment. Today it’s file conversion, otherwise known as ‘rendering’. This is the process of converting one file type to another, for example, HTML-to-EPUB or Word-to-HTML, and so on.

File conversion matters in the book production world for two reasons. We often want to convert HTML to a book format, such as book-formatted PDF, EPUB or mobi. And we often want to import existing content from a file, such as an MS Word document, into a new document.

It is, of course, quite possible to do all your file conversion manually.

Should you wish to convert HTML into a nice book-formatted PDF, one possible strategy is to go out to InDesign or Scribus and lay it all out like our ancestors did as recently as 2014. Or, if you want to convert MS Word, for example, to HTML, you can just save it as HTML in Word… Yes, Word copies across a lot of formatting junk, but you can clean it up using purpose-built freely available software (such as HTMLTidy and CleanUp HTML), online services (like DirtyMarkup), or a handy app (such as Word HTML Cleaner)…
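To give a feel for what such a clean-up involves, here is a rough sketch using only Python's standard library. It is not how HTMLTidy or any of the tools above work. The set of attributes treated as junk, and the names `WordHtmlCleaner` and `clean_word_html`, are assumptions made for illustration; real Word output needs far more care.

```python
from html import escape
from html.parser import HTMLParser

# Assumption: these attributes carry Word formatting junk and can be dropped.
DROP_ATTRS = {"class", "style", "lang"}


class WordHtmlCleaner(HTMLParser):
    """Re-emit HTML without junk attributes, <style> blocks, comments or Office tags."""

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self.out = []
        self._in_style = False

    def handle_starttag(self, tag, attrs):
        if tag == "style":
            self._in_style = True
            return
        if ":" in tag:  # Office-namespaced tags such as <o:p>
            return
        kept = [(k, v) for k, v in attrs if k not in DROP_ATTRS]
        rendered = "".join(
            f" {k}" if v is None else f' {k}="{escape(v, quote=True)}"'
            for k, v in kept
        )
        self.out.append(f"<{tag}{rendered}>")

    def handle_startendtag(self, tag, attrs):
        # Treat <br/> like <br>: emit the opening tag only.
        self.handle_starttag(tag, attrs)

    def handle_endtag(self, tag):
        if tag == "style":
            self._in_style = False
            return
        if ":" not in tag:
            self.out.append(f"</{tag}>")

    def handle_data(self, data):
        if not self._in_style:
            self.out.append(escape(data, quote=False))

    # Comments (including Word's conditional comments) are dropped because
    # HTMLParser.handle_comment does nothing unless overridden.


def clean_word_html(html: str) -> str:
    parser = WordHtmlCleaner()
    parser.feed(html)
    parser.close()
    return "".join(parser.out)


messy = '<p class="MsoNormal" style="margin:0cm">Hello <b>world</b><o:p></o:p></p>'
print(clean_word_html(messy))  # <p>Hello <b>world</b></p>
```

Even this toy version shows why clean-up is fiddly: every rule about what counts as junk is a judgment call that depends on the source document.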

Manual conversion is not too bad a strategy, as long as it doesn’t take you too long, and it is often more efficient and faster than those convoluted hand-holding technical systems which promise to do it for you in one step. Despite the utopian promises made by automation… you often get better results doing the conversion manually.

I sometimes hear people in Book Sprints, for example, complain something to the tune of “why can’t I just click a button and import part of this paragraph from Wikipedia into the chapter, and then if the entry is updated in Wikipedia, I can just click the button again and it will be updated here”…