A few weeks ago Dave Cramer and I started a new website – Paged Media. The website’s purpose is to promote the use of HTML, CSS and JS to make books, whether the books are displayed in the browser, or in e-readers, on mobile devices, or in print. The site is coming along nicely with blog posts and links to valuable resources to do with the production of books for reading or display on screen. Soon we will introduce podcasts to the site, some weekly how-to posts, and items about the future and past of this very important approach to making books.

Projects

Some of my projects. As you can see, partially complete. Will add more and then make this a static page.

Publishing-related

Collaborative Knowledge Foundation

Current

The Collaborative Knowledge Foundation’s mission is to evolve how scholarship is created, produced and reported. CKF is building open source solutions in scholarly knowledge production that foster collaboration, integrity and speed.

CKF envisions a new research communication ecosystem that gives rise to wholly unique channels for research output.

CKF was founded in October 2015 with support from the Shuttleworth Foundation.

Shuttleworth Foundation

Current

I have just been awarded a Shuttleworth Foundation Fellowship. I’m deeply honoured to have been selected. I was awarded a second year of Shuttleworth Fellowship for my work on reformulating how knowledge is produced.

Recent Presentations

The Future of Text

Google Headquarters in Silicon Valley, Aug 2016

http://www.thefutureoftext.org/

The event was organised by friends and followers of Douglas Engelbart. Adam was invited to present on collaboration and book production.

Association of Learned and Professional Society Publishers

London, Sept 2016

http://www.alpsp.org/2016-Programme

Adam was invited to present at the annual ALPSP conference about ways that Open Source could change publishing.

International Society of Managing & Technical Editors

Brussels, Nov 2016

http://www.ismte.org/page/2016EuroConference

http://www.slides.com/eset/ismte

Adam was invited to present on Open Source tools for publishers at the Brussels edition of the 2016 ISMTE series of conferences.

Open Fields

Riga, Latvia, Oct 2016

http://rixc.org/en/festival/Open%20Fields%20Konference/

I was invited to speak about the intersection of art, science, and publishing at the cutting-edge Open Fields festival.

Unlearning Collaboration

Berlin, Oct 2016

http://www.supermarkt-berlin.net/event/un-learning-networked-collaboration/

Adam was invited to facilitate a one-day conference on collaboration and facilitation.

Development projects

PagedMedia

2016 – present

http://www.pagedmedia.org

Founded the blog about paged media.

Substance Consortium

2016 – present

http://substance.io/consortium/

Foundational member of the Substance Consortium.

Book Sprints

2008 – present, New Zealand

http://www.booksprints.net/

Book Sprints is a methodology and a company I founded to rapidly produce books.

Nov 2016: transitioned from founder and CEO to the board. I appointed Barbara Rühling as CEO.

A Book Sprint is a collaborative process where a book is produced from the ground up in just five days. But even more important, this collaborative process captures the knowledge of a group of subject-matter experts in a manner that would be nearly impossible using traditional methods. The result at the end of the Book Sprint is a high-quality finished book in digital and print-ready formats, ready for distribution.

Book Sprints Ltd is a team of facilitators, book-production professionals, and illustrators specialised in Book Sprint facilitation and rapid book production. Our organisation developed the original methodology and has refined it since 2008 through the facilitation of more than 100 Book Sprints. Topics have ranged from corporate documentation to industry guides, government policies, technical documentation, white papers, academic research papers, and activist manuals.

Book Sprints clients include Cisco, PLOS, F5, the World Bank, USAID, African Development Bank, Open Oil, Liturgical Press, Augsburg Fortress, Cryptoparty, OpenStack, European Commission, JISC CETIS, UNECA, Mozilla, IDEA, Engine Room, Heidy Collective, Transmediale, Google… to name a few.

"If Book Sprints did not exist, we would be forced to invent them, so powerful is the knowledge production paradigm." --Allen Gunn, Aspiration

"Book Sprints get more brilliant work out of bright people in 1 week than most project can evoke across many months." --Loy Evans, Cisco

"Writing a book through a Book Sprint turned out to be efficient, thorough and enjoyable; I can’t imagine a better outcome." --Phil Barker, JISC CETIS

Aperta

2013 – July 2015. Public Library of Science, San Francisco

In 2013, I designed a platform for the Public Library of Science (PLOS), originally called Tahi but later renamed Aperta. In 2014 I was asked to lead a team to build the platform. I led the 15-strong team through to the production-ready 1.0 release of this multi-million dollar project, on time and under budget, in June 2015.

Aperta is an entire submission and peer review platform for multiple scientific journals housed within a single instance. The entire system is designed to be highly collaborative and concurrent. The platform includes a manuscript production interface, HTML and LaTeX document editing support, Word ingestion, a workflow management system, task management interfaces, admin interfaces, reports, and user dashboards. The platform was built with Ember-CLI and Rails, implements a highly customised version of the Wikimedia Foundation’s Visual Editor, and uses Slanger for concurrency. It is an HTML-first system, takes many innovative new approaches to journal systems, and solves many long-standing problems in this space. The project also involved a separate codebase named iHat that provides Aperta with an API service for queue-managed file conversions.

"At its core, this new PLOS editorial environment brings simplicity to the submission and peer review process by providing advanced task-management technology and a vastly improved user interface, which will enhance the publishing experience for our community of authors, editors, and reviewers." http://blogs.plos.org/plos/2015/07/publishing-initiatives-at-plos-a-look-back-and-a-look-ahead/

NB: I only work on Open Source systems. The sources are not yet available for this project.

PubSweet

2012 – present

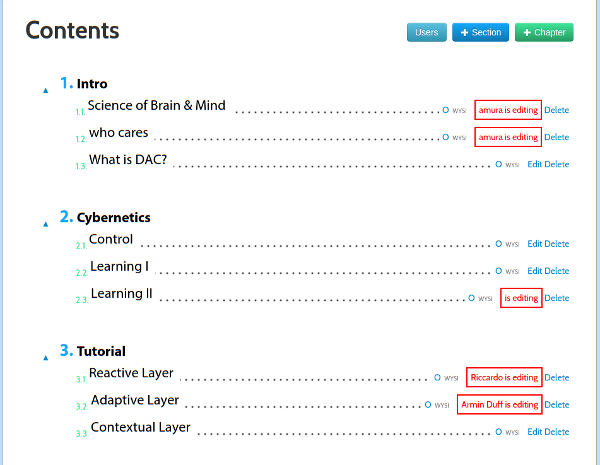

PubSweet is a platform designed to assist the rapid production of books in Book Sprints. The platform is very simple to use, with very little overhead for new users. The system provides dashboards, publishing consoles, card-based workflow management (task manager), discussions, data visualisations of contributions, dynamic table of contents management, and support for multiple chapter types. PubSweet can produce EPUB and leverages book.js (see below) to produce print-ready PDF (paginated in the browser). PubSweet is written in PHP, using Node on the backend, and CKEditor as the content editor.

Lexicon

2012

Lexicon is a platform produced for the United Nations Development Programme to collaboratively produce a tri-lingual (Arabic, French, English) lexicon of electoral terms for distribution in Arabic regions. Lexicon provided concurrent editing for chapters with multiple terms, sorting by language, discussion forums and voting. Lexicon was written quickly in PHP with Node.

"The Lexicon was created with the aid of an innovative collaborative writing tool customized to suit the needs of this project. This web-based software allowed the authors, reviewers, translators and editors to simultaneously input their contributions to successive drafts from their various countries." http://www.undp.org/content/undp/en/home/presscenter/pressreleases/2014/11/19/undp-launches-first-lexicon-of-electoral-terminology-in-three-languages.html

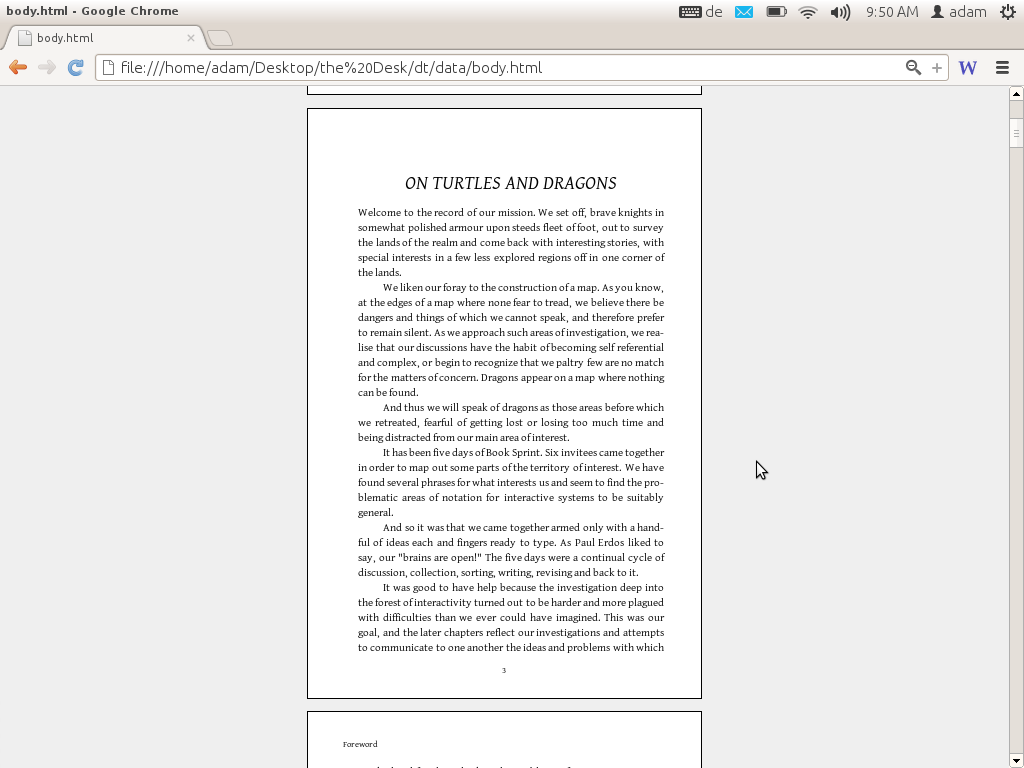

book.js

2012

book.js is a JavaScript library that you can use to turn a web page into a PDF formatted for printing as a book. Take a web page, add the JavaScript, and you will see the page transformed into a paginated book complete with page breaks, margins, page numbers, table of contents, front matter, headers etc. When you print that page you have a book-formatted PDF ready to print.

book.js has given inspiration to a number of other JS pagination engines. See Vivliostyle, bookJS Polyfil, Pagination.js, simplePagination.js, and CaSSiuS.

Booktype

2010 / 2012

Booktype is a book production platform. I brought this platform to Sourcefabric (Berlin) as ‘Booki’ in 2012. Booki was started in 2010. Booktype is written in Python (Django).

“Booktype resolves challenging issues in collaborative knowledge production resulting in high quality print and ebooks.” – Erik Möller, deputy director, Wikimedia Foundation

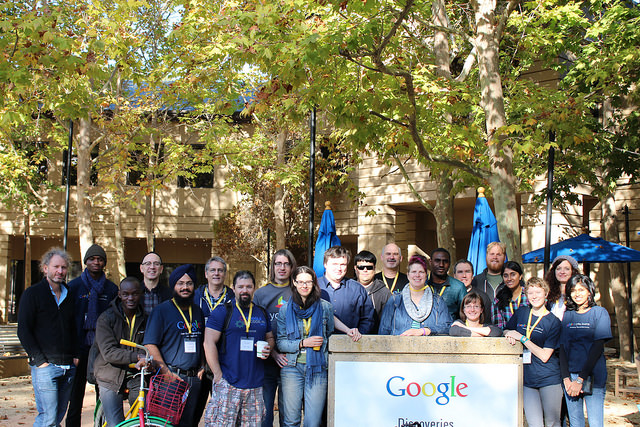

Google Summer of Docs

2011, 2012, 2013

The GSoC Doc Camp was an annual event over three years. It was a place for documenters to meet, work on documentation, and share their documentation experiences. The camp improved free documentation materials and skills in GSoC projects and helped form the identity of the emergent free-documentation sector.

The Doc Camp consisted of 2 major components – an unconference and 3-5 short form Book Sprints to produce ‘Quick Start’ guides for specific GSoC projects.

Each Quick Start Sprint brought together 5-8 individuals to produce a book on a specific GSoC project. The Quick Start books were launched at the opening party for the GSoC Mentors’ Summit immediately following the event.

Bookimobile

2011

The Bookimobile was a mobile print lab in a van – essentially a van that contained all the equipment necessary to create perfect-bound books. It was designed to take the ideas of Booki to people and make real books that had been created in Booki. The first Bookimobile was based on the Internet Archive’s Book Mobile and we took it to several book fairs and events throughout Europe. It was sponsored by Mozilla, CiviCRM, Archive.org, Francophonie.org, Google Summer of Code, and iCommons.

Objavi

2008

Objavi is an API-based software service originally written for TWiki Book (see below) that also served Booki and, later, Booktype. Objavi converts books from their native HTML into PDF for printing. It also handled other file conversions (e.g. HTML to ODT, HTML to EPUB). I later produced a similar API-based conversion service for PLOS known as iHat. Objavi is written in Python.

TWiki Book

2006

TWiki Book didn’t have a real project name at the time. The project was the first publishing system I built. TWiki Book was created solely to meet the needs of FLOSS Manuals (see below) and it was built on top of TWiki, a Perl-based wiki. TWiki Book included book remixing features, side-by-side translation, table of contents building, publishing interfaces (I actually wrote a separate PHP-based system to manage this), edit notifications, versioning, diffs, live chats and many other features. It was a good system but reasonably difficult to extend and maintain since it re-purposed existing wiki software (hence my approach of building purpose-built book production systems after this point).

FLOSS Manuals

2006

FLOSS Manuals was the project I founded in 2006 that got me started on this whole publishing thing. FM was, and is still, an active community of volunteers that creates free manuals about free software. There is now a foundation and several language communities (notably French and English). The contributors include designers, readers, writers, illustrators, free software fans, editors, artists, software developers, activists, and many others. Anyone can contribute to a manual – to fix a spelling mistake, add a more detailed explanation, write a new chapter, or start a whole new manual on a topic. The aim was to produce high-quality free works and we succeeded – creating many fantastic manuals in over 30 languages (and still growing).

“‘Introduction to the Command Line’ is at least as clear, complete, and accurate as any I’ve read or written. But while there are countless correct reference works on the subject, FLOSS’s book speaks to an audience of absolute beginners more effectively, and is ultimately more useful, than any other I have seen.” -- Benjamin Mako Hill, Wikimedia Foundation Advisory Board, Free Software Foundation Board

Presentations about publishing

I am asked to talk about publishing from time to time. The following are some links to some of those presentations.

Choosing a document network

August 2015, Vancouver, Public Knowledge Project

Open Access and Open Standards

Oct 2014, San Francisco, Books in Browsers

May 2014, Rotterdam, Off the Press

May 2014, San Francisco, I Annotate

May 2013, San Francisco, I Annotate

Oct 2013, San Francisco, Books in Browsers

May 2012, Berlin, re:publica

Writings

I have been writing about publishing here and there. Since last year these efforts have been focused on this site. The following are some links to some of my other works:

Fantasies of the Library : After the Proprietary Model

Interview with me about the future models for publishing, published by k-verlag (Berlin).

Radar O’Reilly posts

When Paper Fails

What happens when books, ownership, authority and authors are all challenged by a network.

Visualizing Book Production

Why is no one visualising data on how we make books?

Zero to Book in 3 Days

A little bit about Book Sprints.

Forking the Book

When books are forked.

Over Thinking EPUB

Commentary on why EPUB might be confusing the issue.

Changing the Culture of Production

How to change the way we produce knowledge.

WYSIWYG vs WYSI

The evolution of the editor.

Math Typesetting

The sorry state of math typesetting.

InDesign vs CSS

Why HTML wins versus Desktop Apps.

The Blanche Dubois Economy

Why ‘Open API’ is really a silly idea.

Gutenberg Regions

Paginating in the browser.

Browser as Typesetting Engine

More commentary on the browser as a typesetting engine.

The New New Typography

The evolution of Javascript Typesetting.

Other Projects

Seaweed

2008, Quarantine Island, New Zealand

This project was actually called ‘Intertidal’ but I like the name Seaweed better. Douglas Bagnall and I created a one-day community project to discover a new species of seaweed. We hosted this on Quarantine Island in the Dunedin Harbour and invited anyone to come work with us and a marine biologist from the local research center to search for a new species. The project was a community project and a reflection on the notion of species as an outmoded idea, and on taxonomy as a dying art. About 50 or 60 people – individuals, groups, and families – came out on the free boat (provided by the local sea scouts) and hiked across the island on a coldish Dunedin day to search for and document seaweed. We possibly discovered a new species.

throwing into focus the ever-present potential for new knowledge. Drawing upon 19th century methods of species discovery, involving collecting, looking and drawing, their work formed questions around what we don't know.

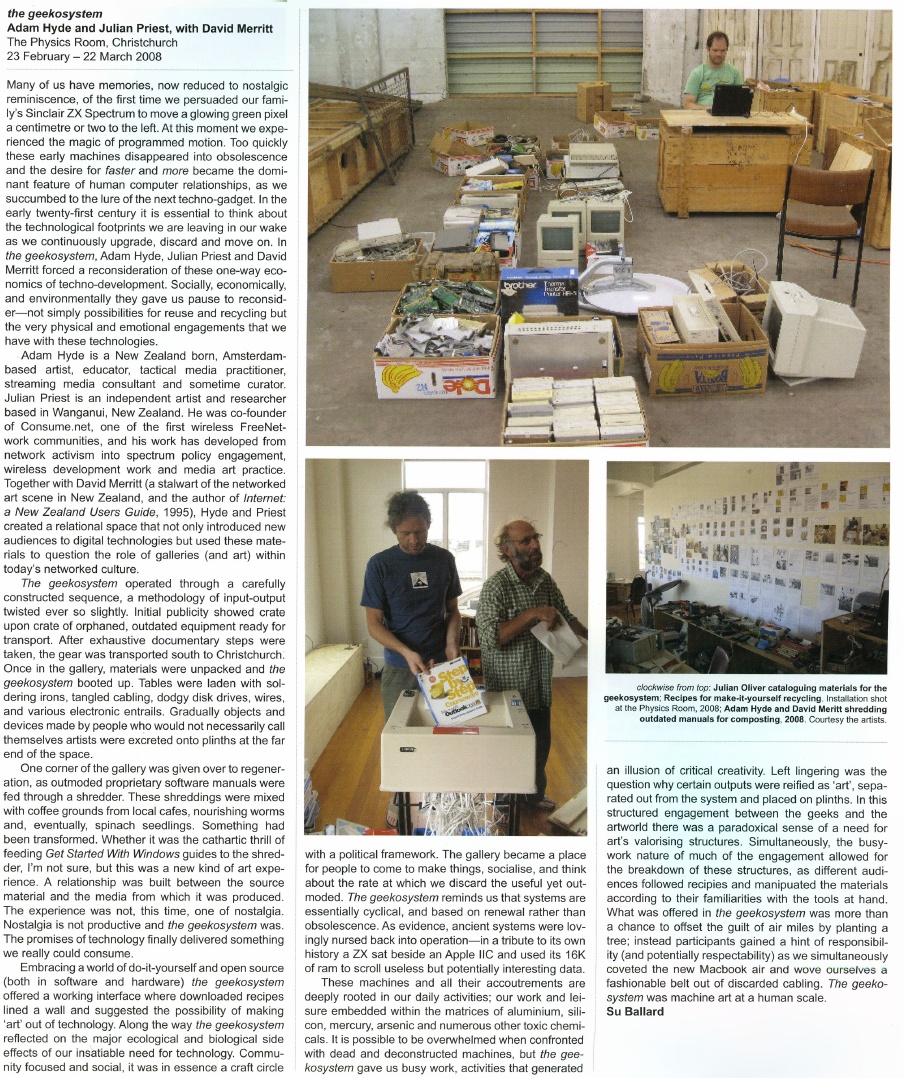

Geek-o-system

2008, Christchurch, New Zealand

Julian Priest, Dave Merritt and I drove about a tonne of old electronics 700km or so in an old Land Rover (top speed 35km/hr) from Dave’s warehouse in Wanganui to an art gallery in Christchurch, New Zealand. In the gallery, we served up the old electronics as a participatory art project and invited anyone to come and build new objects from the old. It took 3 days to get there. It was an adventure.

A Geekosystem was a participatory workshop based on a redundant technology collection created by David Merritt. Items were selected from the collection, packed into a Land Rover and driven to The Physics Room in Christchurch. A call for participation was issued by The Physics Room and a group of geeks gathered to re-configure the technology into artworks. A workshop space was created and plinths were placed at one end of the gallery and populated with artworks made from the e-waste. The workshop was open to the public and continually added to for the duration of the show. Old technology books were formed into a library. Proprietary software manuals were shredded and mixed with coffee grounds. This was mulched into soil and silver beet seedlings were successfully germinated in floppy disk trays.

A Geekosystem was shown first at the Physics Room in Christchurch in 2008 and then at The Green Bench during the Whanganui Open studio week in 2008. The Geekosystem garden was transferred to a permanent location and produced vegetables for a number of years.

Paper Cup Telephone Network

2006, Exhibited at the Exploratorium in San Francisco, Zero One Festival in San Jose, and many other venues.

This was a project I made with Matthew Biederman and Lotte Meijer. The Paper Cup Telephone Network (PCTN) was a free communication system and a comment on how ‘simplicity’ in technical systems is trickery. It also problematised the corporatisation and ever-increasing individualisation of modern communications.

The PCTN was a network of paper cup telephones. Just like the games played by children, anyone could put a PCTN cup to their ear to listen, or to their mouth to speak. However, the difference between the PCTN and the original game is that the “string” is connected to the World Wide Web, where your voice is streamed to all the cups on the network, carrying it blocks, miles, or even a continent away. We built the entire system from free software telephony systems (Asterisk and SIP phones), open and standards-based telephony protocols, cups, and string.

As simple as it was, it remains the most difficult technical project I have ever undertaken.

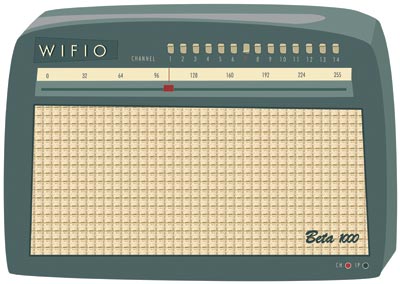

Wifio

2006, exhibited at the Waves exhibition in Riga, Latvia

Wifio was a project I did with Lotte Meijer and Aleksandar Erkalovic. Wifio was a comment on the naivety with which we broadcast our personal information. It was a hardware UI and software that allowed anyone to tune into the World Wide Web wifi traffic. If someone near you was browsing the web on a wifi network, you could simply tune in with Wifio by selecting the right channel and tuning into their IP address.

…but don’t worry, you don’t need to know what their “IP Address” is, in fact you don’t even need to know what an IP address is! Just move the dial until you hear their emails or what they are saying in chatrooms.

I was proud when Julian Oliver (an old buddy from NZ) referenced this project as inspiration for one of his works.

Sound Elevator

2007

r a d i o q u a l i a (see below) were commissioned to make a new work for Forte di Bard in Valle d’Aosta in Italy for “Cima alle stelle (Stars)”, a large exhibition showing historical works by major masters like Dürer, Tintoretto and Guercino; contemporary artists such as Pierre Huyghe, Olafur Eliasson and others; and astronomical instruments and writings by Copernicus, Galileo, Kepler, Newton and Einstein.

We made a new site-specific sound installation inside two of the glass elevators which take visitors from the arrival area of Forte di Bard to the gallery levels. Much of the elevator travel is external to the Forte (pictured below). Sound Elevator consisted of two linked sound environments inside the elevators. As the elevators ascended to the exhibition halls, visitors experienced an auditory journey from the local celestial environment to the edges of the Universe. In the first elevator, visitors sonically travelled through the Earth’s ionosphere and magnetosphere, hearing our closest star, the Sun, interacting with our planetary atmosphere. The upward and outward journey continued in the second elevator, with sounds from our planetary neighbours, the sonic echo of distant stars, and finally the sound of the Big Bang itself.

I-TASC

2006/2007 Antarctica

I did a 2-month residency in Antarctica at SANAE (South African Research Base) as part of I-TASC, ultimately a failed network of individuals and organisations working collaboratively in the fields of art, engineering, science and technology on the interdisciplinary development and tactical deployment of renewable energy, waste recycling systems, sustainable architecture and open-format, open-source media. But it was still a great experience.

The coolest thing about it was the 2 weeks each way on the beautiful icebreaker the SA Agulhas (now decommissioned). I kept some diary pages on the Interpolar site. I also did a few other projects while there including Polar Radio (see below).

The worst thing about it was that we shouldn’t have been there. There is no need for anyone to be in Antarctica. Most of the ‘science’ projects are strategic positioning for a land grab when the time comes. Some science might be justifiable… but arts projects?

Leaving Antarctica I cried my eyes out. It was just too much for me to deal with. Too amazing. After Antarctica, I gave up the art world. I couldn’t think of anything else the art world could do for me.

It was a conflicted but beautiful experience.

Polar Radio

2006/2007, Antarctica

Polar Radio was a community radio project initiated by I-TASC and r a d i o q u a l i a. The first prototype station began FM broadcasts on 29 December 2006 in the Dronning Maud Land sector of Antarctica, where South Africa maintains their base, SANAE IV. It was Antarctica’s first artist-run radio station. It was the first step towards establishing a permanent polar radio presence in Antarctica, which may eventually broadcast in between geographically dispersed Antarctic bases.

But y’know…I wish I hadn’t done it. When I first got to Antarctica I turned on a radio and went through many many frequencies… and I heard nothing… that was amazing. Where else in the world can you not hear anything on your radio? I then went ahead and polluted the spectrum. Darn. I regret it.

Polar Radio was part of a series of projects run by I-TASC – the Interpolar Transnational Art Science Constellation.

Mobicast

2005, Trans-Siberian Express

Capturing the Moving Mind was a conference on board the Trans-Siberian train. It was about new forms of movement and control, war and economy, in the current situation. 50 international researchers, artists and activists participating in the mobile conference formed a mobile production unit aboard the train. For the audiovisual streams, Luka Princic and I developed a free software ‘mobicasting’ platform which enabled mobile transmission of material on the web from mobile phones on the train. Mobicast was initially developed during a residency I had at MAMA Media Lab (Zagreb, Croatia).

It was a great project but really really fragile. The tech of the time was not up to it. Mostly it ran on Puredata and some obscure bits of code from here and there. Still it worked. Best moments were hanging out on the train laughing at people trying to be ‘artists’ in real time… huh? …and getting sardonic with Dr Gillian Fuller – the world’s best queue hacker. Watching the train wind around the Gobi desert… also kinda cool.

Mobicast was initially developed to overcome the problem of delivering live video from a moving train to the internet. Traditionally this is the domain of OB (Outside Broadcast) technologies or expensive vehicular satellite uplink hardware. However, mobile phones are now very capable remote broadcast environments. Many modern phones record images, video and audio, and allow the editing and transfer of these media through wireless data networks (e.g. GPRS) with almost global coverage. The quality of these recorded media has generally been considered 'low-fi' but fidelity is increasing and, importantly, the expectations of networked media are becoming more appropriate. Once upon a time there was a mythic "broadcast quality" threshold all media had to pass before being accepted by broadcast organisations and (theoretically) audiences. However, large-scale media organisations now actively call for content generated by "on the spot" accidental observers. The tide and scale of remote media is changing. The nature of experimental media on this type of platform is the intentional playground of mobicasting. With this emerging new type of media witness, cultural forms are also emerging. Multiple networked media phones are in themselves a platform for collaborative cultural development and open interesting doors for experimental media.

MiniFM and SilentTV

2001-2004, International

For many years I had a wonderful mentor – Tetsuo Kogawa. He is the father of MiniFM. I saw Tetsuo build a mini FM transmitter at the Next Five Minutes festival in Amsterdam. Sometime after that, I asked him if he would teach me how to make them too, and he very generously spent a good deal of time making sure I understood the ins-and-outs of the process. Together we designed a workshop and Tetsuo worked out even simpler ways to build the transmitters. For many years I travelled the world leading transmitter-building workshops and often Tetsuo would stream in from his studio in Tokyo to talk about the idea and give a quick demonstration before we started building.

Later Tetsuo and I created a project called SilentTV which was the same idea but using simple elements to broadcast TV.

I’m forever grateful to Tetsuo for his kindness and mentorship.

re:Play

November 2003, South Africa

re:Play explored the world of the computer game. It featured an exhibition of artists’ computer games by Andy Deck, Josh On + Futurefarmers, Mongrel, Natalie Bookchin, the escapefromwoomera collective and Max Barry, and a programme of workshops and lectures. re:Play was a collaboration between the Institute for Contemporary Art, Cape Town and r a d i o q u a l i a. It launched at L/B’s – The Lounge at Jo’Burg Bar in central Cape Town, South Africa, and went on to be exhibited at Artspace and the Physics Room in New Zealand.

The games in the exhibition were not typical computer games. While all of them encouraged play, and involved a gaming objective, unlike regular computer games, they had a strong political dimension and explored how play, interaction and competition can be utilised in an artistic context.

The re:Play education programme included talks and workshops led by Graham Harwood of Mongrel and r a d i o q u a l i a at Cape Town High School; Fezeka Senior Secondary School in Gugulethu; the Alexandra Renewal Project, Johannesburg; and Wits School of Arts, University of the Witwatersrand, Johannesburg.

Free Radio Linux

2002, The World

Another project that got a lot of attention for r a d i o q u a l i a, most notably through being exhibited at the New Museum in NYC, but also at Banff and other places. I loved this project because it brought together several threads.

Formally, Free Radio Linux was an online and on-air radio station. The sound transmission was a computerised reading of the entire source code used to create the Linux Kernel, the basis of all distributions of Linux.

Each line of code was read by an automated computer voice – a speech.bot utility I built for the work. The speech.bot’s output was encoded into an audio stream, using the early open source audio codec, Ogg Vorbis, and was broadcast live on the internet. FM, AM and Shortwave radio stations from around the world also relayed the audio stream on various occasions.

The Linux kernel at that time had 4,141,432 lines of code. Reading the entire kernel took an estimated 14253.43 hours, or 593.89 days.
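As a quick sanity check on those figures, here is a minimal Python sketch. It takes the line count as 4,141,432 and the duration as 14253.43 hours, both from the text above; the function name and the derived seconds-per-line pace are my own illustration, not something the speech.bot reported.

```python
# Figures quoted in the text above.
LINES_OF_CODE = 4_141_432
TOTAL_HOURS = 14253.43


def reading_stats(lines: int, hours: float) -> dict:
    """Convert the estimated reading time to days and an average pace per line."""
    days = hours / 24                        # 24 hours of continuous broadcast per day
    seconds_per_line = hours * 3600 / lines  # implied average reading time per line
    return {"days": days, "seconds_per_line": seconds_per_line}


stats = reading_stats(LINES_OF_CODE, TOTAL_HOURS)
print(f"{stats['days']:.2f} days")                          # 593.89 days
print(f"{stats['seconds_per_line']:.2f} seconds per line")  # 12.39 seconds per line
```

So the hours and days agree with each other, and together they imply the voice spent a little over twelve seconds on an average line.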

Listeners tracked the progress of Free Radio Linux by listening to the audio stream, or checking the text-based progress field in the ./listen section of the website (which is no longer up)…

Essentially this was all about how free radio and free software were weirdly the same. If you ever worked with free radio geeks, you will know they are nerdy technophiles who believe in the purity of what they are doing. Very much the same as Open Source geeks at the time. Most were interested in the tech and the political ideal of the respective mediums (radio and software). So, FRL was a comment on this. It was also a comment on how free radio was, ironically, very difficult to achieve on the internet unless serious attention was paid to developing free codecs.

But also FRL had two other elements going for it. The first was to (again) poke fun at the ridiculous hyperbole that surrounded the open source movement. People were expounding this ‘amazing new phenomenon’ and extrapolating how it would change the world (much as they did about wikis a short time after) when they had never come into contact with code or geeks. So this was an attempt to expose those people to the code…or what it sounded like. But also, at the time there was a lot of early talk about how to preserve digital media (a problem still not solved) and radio waves apparently never die… so by broadcasting the Linux kernel into space we were preserving it on the oldest medium ever, forever. Hehe…

I did, however, feel very sorry for the attendants at the New Museum who had to work 8-hour shifts listening to “one dollar sign dollar sign comma hatch four new line seven two dollar sign dollar sign…”

Thing FM

2002, New York City

This was, in theory, a radio network but in reality, just a few transmitters got installed. Still, it was fun. Thing FM was based in NYC and built during a residency I did at the Thing in NYC. The same week that the Yes Men came into the office to film their ‘shit burger’ stunt. They came into the Thing and asked who wanted to go and I didn’t go! doh! Anyway, we built the network using internet audio (via wireless and wired connections) and miniFM. Each of the transmitters was about 0.1 W output and sourced their audio live from the internet using the Frequency Clock scheduling system I had built earlier.

This partly adopts the ethic of micro-radio as founded by Tetsuo Kogawa, where many low powered FM transmitters are coupled to create an effective broadcasting entity that ‘falls beneath the radar’ of the communication authorities. fm.thing.net combined this ethic with that of net.radio which was a relatively new phenomenon focusing on the use of the internet as a carrier signal, best illustrated by the practices of the Xchange network. By combining the net.radio and micro-radio we hoped to build an efficient radio network in New York that used the internet as a primary carrier of the audio for re-broadcasting on legal or almost legal microFM broadcasts.

Hanging with Ted Byfield and Jan Gerber was a highlight of this experience. Wolfgang Strauss was also pretty fun but I was so intimidated by him. He was just so cool. Also sharing a tenement apartment in Ludlow Street with 3 people (bath in the kitchen) was pretty fun.

Radio Astronomy

2001

Radio Astronomy was an art and science project which broadcast sounds intercepted from space, live on the internet and on the airwaves. The project was a collaboration between r a d i o q u a l i a and radio telescopes located throughout the world. Together we were creating ‘radio astronomy’ in the literal sense – a radio station devoted to broadcasting audio from our cosmos.

Radio Astronomy had three parts:

- a sound installation

- a live on-air radio transmission

- a live online radio broadcast

Listeners heard the acoustic output of radio telescopes live. The content of the live transmission depended on the objects being observed by partner telescopes. On any given occasion, listeners may have heard the planet Jupiter and its interaction with its moons, radiation from the Sun, activity from far-off pulsars or other astronomical phenomena. Honor from rQ later made a TED Talk about it.

Dino, drummer from HDU, did the website design…that’s gotta rate…

RT32

2001, Latvia

In 2001 I had the good fortune to be part of the Acoustic.Space.Lab project which started a long love affair with the RT32 radio telescope. Formerly a cold war device, this telescope was liberated when the Russian Army pulled out of Latvia. I worked with this telescope as an artistic device and with the generous scientists for many years after. The doco clip below introduces the explorations of the international Acoustic Space Lab Symposium which took place on the site of RT-32 in 2001.

Highlights of this period in Latvia included being evicted by Russian builders, getting a hernia, and being amazed that Marc Tuters survived eating so many dodgy-looking mushrooms he found in the forest.

Open Sauces

May 2001, Scotland and also later…

I have come to realise there is just too much stuff I have tinkered with to comment on. Open Sauces falls into that bucket. Google tells me this was 2001. Essentially I got sick of all the Open Source blah blah of the time.. everything was suffixed by OPEN and it got very tiring (Open Gov, Open Hardware, Open Society…). No critical reflection on the fact that geek methods are geek methods and they are not transportable – AND – OPEN processes, methods etc existed well before geeks came along and inherited the word. No geek invented openness. I’m still tired of this I have to say…still…. I created Open Sauces which was an open database of recipes… anyone that did a residency could add their favourite recipe and you could just tick all the ingredients you have in your fridge and get a recipe to suit… doesn’t sound too revolutionary but at the time this sort of thing didn’t exist. It was a comment on this abuse of the use of the word ‘open’ and how cooking way preceded sharing of ‘code’ / ‘instructions’ etc… and also how food is probably the most important part of any collaborative project, whereas unsocial nerdy talk is optional. Later Fo.am in Brussels were inspired by the idea and started an Open Sauces theme.

net.congestion

2000, Amsterdam

I’m particularly proud of this project. It came about when I was a very naive newly arrived resident of Amsterdam. I suggested to Geert Lovink this idea for a festival and he said to speak to Eric Kluitenberg. Both huge legends in my mind you understand… I mustered up the courage to suggest it to Eric, who was a cultural curator at De Balie at the time. He said he would think about it and I thought I wasn’t very convincing. A week later he called me up and said let’s do it! Whoot!

The festival was held in Amsterdam in October 2000. Net.congestion was an intensive three-day celebration and critique of the new cultures that have arisen from all forms of micro-, narrow- and broad- casting via the internet, now collectively known as streaming media.

The event covered most of the interesting ground of the time for streaming media, from the transformation of issues surrounding intellectual property to the uses of streaming as a mobilisation tool for global resistance through to the more rarefied questions of aesthetics and how narratives are transformed when embedded in networks. The overwhelming experience of many visitors to Net.congestion was a sense of tools, networks and sensibilities being re-purposed, returning us, again and again, to a primary experience of the net as a social space.

Net.congestion occurred just months before the dot.com bubble burst, exploding the ‘new economy’ and ‘the long boom’ with its fantasies of a world in which the economic laws of gravity had been repealed. There is no doubt that if the same event were to be held now, the atmosphere would be markedly different. It is not that Net.congestion was an industry event which depended on the hype for its existence, as the very title indicates that we mixed a healthy dose of skepticism with our festivities. But none of us, however critical, can entirely escape the zeitgeist and there is no doubt that in those brief heady days Warhol’s aphorism was re-written; we could all dream of becoming billionaires, if only for 15 minutes. A strange historical phase when (particularly for anyone involved in streaming media) the normally fixed boundaries between business, art, technology, science fantasy and just plain bullshit temporarily blurred to create a moment of unique cultural hysteria. In that sense our timing was perfect.

The Theory Machine

2000

In an attempt to make theorists a little more funky, I made a piece of software they could use to put their brainy thoughts to glitchy syncopation. It was mainly used by Eric Kluitenberg, including one memorable performance at Club Otok in Dubrovnik.

HelpB92

1999, Amsterdam

I helped found an organisation during the Nato bombing of Serbia and Kosovo in 1999. HelpB92 was the international support campaign for independent media in Yugoslavia, including the famous Radio B92 media centre, and was in operation between March and July 1999. We did some pretty cool things but mostly I was very happy to be involved in what must have been one of the web’s early large-scale activist campaigns. It was also the start of my longish relationship with Amsterdam as XS4ALL offered me a job and I stayed for a few years. I still have a bike there somewhere.

What was tricky, though, is that I agreed to go to Skopje to assist an Albanian refugee radio station (Radio 21). It was kinda nerve-wracking. There were literally bombs set to explode to take out as many Albanians as possible. Some kids lost their legs across the street from where I was working. I was a milk and cookies boy from NZ… what was I doing here? Still, I stuck it out and we managed to set up quite an innovative way of getting radio transmissions out of the refugee camps to Radio Netherlands Shortwave… I’ll write that up when I get time.

The Frequency Clock

1998 – 2004 or so, The World

This was one of the earliest r a d i o q u a l i a projects and how I learned to program. To understand this project you have to understand the dark ages of the internet when video and audio hardly existed. Essentially we built a media scheduling system that allowed you to build archives of live and pre-recorded content, tag them, and then schedule them. All built in JavaScript… remembering these were the days when JavaScript was very rudimentary.

The Frequency Clock was originally conceived as a mechanism to control FM transmitters over the internet. In essence it was a networked timetabling system, connecting globally dispersed FM transmitters so they could broadcast the same internet audio simultaneously. The original player was a popup window but we also built desktop apps to do the same thing using VisualBasic (Win) and RealBasic (Mac). All open source.
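The timetabling idea can be sketched in a few lines. This is an illustrative Python reconstruction only, not the original JavaScript: the `Slot` structure, the `current_slot` function and the example URLs are all made up for the sketch. The point is that every transmitter site checks the same timetable against the same clock (UTC here, as an assumption) and so picks the same stream.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Slot:
    """One timetable entry: play `stream_url` from `start` up to (not including) `end`."""
    start: datetime
    end: datetime
    stream_url: str
    tags: tuple = ()


def current_slot(timetable, now: datetime) -> Optional[Slot]:
    """Return the slot that should be on air at `now`, or None if nothing is scheduled."""
    for slot in timetable:
        if slot.start <= now < slot.end:
            return slot
    return None


# Hypothetical timetable; the URLs are placeholders, not real streams.
TIMETABLE = [
    Slot(datetime(2000, 10, 6, 20, 0, tzinfo=timezone.utc),
         datetime(2000, 10, 6, 21, 0, tzinfo=timezone.utc),
         "http://example.org/live.mp3", ("live",)),
    Slot(datetime(2000, 10, 6, 21, 0, tzinfo=timezone.utc),
         datetime(2000, 10, 6, 22, 0, tzinfo=timezone.utc),
         "http://example.org/archive/show-12.mp3", ("archive", "space")),
]
```

A player at each site would call `current_slot(TIMETABLE, datetime.now(timezone.utc))` on a timer and switch streams whenever the answer changes.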

However… then we realised that video could also work… and we used it to control community TV channels in Amsterdam and Linz and we also controlled giant video billboards in Estonia and a whole lot of other things. It was exhibited a lot, most notably at the Walker when Steve Dietz was still there. We even installed a transmitter in the roof of De Waag! It was a remarkable experiment for its time. Yes, yes, pre-Napster and pre-YouTube and all those other toys… while writing this I found some kind of prototype online.

Sound Performances

1996 – 2008, the world.

Performing solo as ‘eset’ and with Honor Harger as r a d i o q u a l i a I did a lot of sound performances, most using sounds from space and either live performances in real space or on radio. Some stuff still exists online:

https://soundcloud.com/radioqualia

r a d i o q u a l i a

This was the project that liberated me from the south and the reason I moved to Europe with no money and no return ticket. My plan was to make coffee and do some arty stuff in London. Thankfully, Nato bombed Serbia (hoho) and everything changed.

What I really loved about this time was that I felt part of a lovely international community of artists. We used to travel around and bump into each other in various crazy places. This group included people like Marko Peljhan, Heath Bunting, Rachel Baker, James Stevens, Luka Frelih, the Mama crew, Lev Manovich, Steven Kovats, Matthew Biederman, Giovanni D’Angelo, Zita Joyce, Adam Willetts, Rasa Smite, Raitis Smits and so many many others…it was an awesome time.

r a d i o q u a l i a was an artist project that consisted of myself and Honor Harger. I have described some of our exhibitions and performance projects above, and listed some below. There were many more.

In August 2004, r a d i o q u a l i a was awarded a UNESCO Digital Art Prize for the project Radio Astronomy. In September 2003, we were awarded the Leonardo-@rt Outsiders 2003 New Horizons Prize together with the participants of the Open Sky installation at the @rt Outsiders exhibition at the Museum of European Photography in Paris.

Selected r a d i o q u a l i a exhibitions and performances:

Lecture & performance at the Centre Pompidou, Paris, France

Work: Sonifying Space, as part of the Space Art conference

Exhibition at the New Museum of Contemporary Art, New York, USA

Work: Free Radio Linux, as part of the OpenSourceArt_Hack exhibition

Exhibition at the NTT InterCommunication Centre, Tokyo, Japan

Work: Radio Astronomy, as part of open nature, a show curated by Yukiko Shikata

Online exhibition / commission / installation at Gallery 9, Walker Art Centre, USA

Work: Free Radio Linux

Exhibition at Arsenals Exhibition Hall, Riga, Latvia

Work: solar listening_stations, part of WAVES

Exhibition at HMKV, Dortmund, Germany

Work: solar listening_stations, part of Solar Radio Station

Exhibition at the Walter Philips Gallery, Banff, Canada

Work: Free Radio Linux, as part of The Art Formerly Known As New Media

Exhibition at Centre d’Art Santa Monica, Barcelona, Spain

Work: Radio Astronomy, as part of Sonar 2005

Performance at Tesla, Berlin, Germany

Work: from polar radio to solar wind

Performance, La Batie Festival, Geneva, Switzerland

Work: signals as part of signal-sever

Exhibition at Ars Electronica, Linz

Work: Radio Astronomy

Exhibition at ISEA 2004, Helsinki, Finland

Work: Radio Astronomy

Symposium & Performance, Ventspils International Radio Astronomy Centre, Latvia

Work: Acoustic Space: RT32: Orchestrating the Solar System

Broadcast on Radio New Zealand

Work: Revolutions Per Minute 1: Frequency Shifting Paradigms in Broadcast Audio

Broadcast on Radio New Zealand

Work: Revolutions Per Minute 2: Little Star

Exhibition at Small Gallery, Los Angeles, USA

Work: comma.data.space: 11 Ghz

Performance at the Moving Image Centre in Auckland, New Zealand

Work: comma.data.return :: 56:30 – 21:1

Performance at Version festival, Auckland, New Zealand

Work: listening_stations v0.3: langmuir waves

Exhibition at the Physics Room, Christchurch, New Zealand

Work: re:Play

Exhibition at Artspace in Auckland, New Zealand

Work: re:Play

Exhibition & education programme, South Africa

Work: re:Play

Exhibition at the Reg Vardy Gallery, Sunderland, UK

Work: Free Radio Linux, as part of the Art for Networks exhibition

Exhibition at Museum of European Photography/ Maison Europeenne de la Photographie, Paris, France

Work: listening_stations, as part of @rt Outsiders exhibition

Exhibition at the Physics Room, Christchurch, New Zealand

Work: data.spac.ereturn, as part of the Audible New Frontiers exhibition

Locative media Residency at K2, Karosta, Latvia

Work: Locative Media

Exhibition at Turnpike Galleries, Leigh, UK

Work: Free Radio Linux, as part of the Art for Networks exhibition

Exhibition at Fruitmarket Galleries, Edinburgh, UK

Work: Free Radio Linux, as part of the Art for Networks exhibition

Radio show on Resonance 104.4FM, London, UK

Work: r a d i o q u a l i a on resonanceFM

Exhibition at the Generali Foundation, Vienna, Austria

Work: listening_stations as part of the Geography and the Politics of Mobility exhibition

Exhibition at Chapter, Cardiff, UK

Work: Free Radio Linux, as part of the Art for Networks exhibition

Performance & Broadcast, Ars Electronica, Linz Austria

Work: Radiotopia @ Ars Electronica

Radio Broadcasts on Austrian National Radio, Vienna, Austria

Work: i s o l

Exhibition at CCCB, Barcelona, Spain

Work: frequency clock – gallery installation – [sNr v.0.1]

Sonar 2001, Barcelona, Spain

Action & Broadcast, Ars Electronica, Linz, Austria

Work: Take Over Cultural Channel

Performance, Residency & Symposium at Ventspils International Radio Astronomy Centre, Latvia

Work: Acoustic Space-Lab

Exhibition at Video Positive, Liverpool, UK

Work: Frequency Clock – gallery installation – [ vp00 v.0.0.3 ]

Workshop & Performance, Adelaide Festival of the Arts, Adelaide, Australia

Work: Closing the Loop 2000

Seminar & Performance at Lux Centre, London, UK

Work: Tuning the Net

Performance at the Stockton Festival, Stockton, UK

Work: transitions & undercurrents part of live-stock

Exhibition & Performance at OK Centrum, Ars Electronica, Linz, Austria

Work: pso.Net, as part of Sound Drifting

Exhibition at Experimental Art Foundation, Adelaide, Australia

Work: Frequency Clock – gallery installation – [ eaf v.0.0.2 ]

Exhibition at Experimental Art Foundation, Adelaide, Australia

Work: Illata

Exhibition at Contemporary Art Centre of South Australia, Adelaide, Australia

Work: e Q

Performance at LADA98 Festival, Rimini, Italy

Work: we are alive and well but terribly uncommunicative

Exhibition, Ars Electronica, Linz, Austria

Work: Frequency Clock – gallery installation – [ aec98 v.0.0.1 beta ]

Performance, Ars Electronica, Linz, Austria

Work: 56h LIVE!: Acoustic Space

Exhibition at Bregenz Festival, Bregenz, Austria

Work: gl^tch.bot

Performance and presentation at net.radio.days 98, Berlin, Germany

Work: self.e x t r a c t i n g.radio (.ser)

Online Project

Work: self.e x t r a c t i n g.radio (.ser)

Exhibition at the Machida City Museum of Graphic Arts, Tokyo, Japan

Work: The Qualia Dial

Exhibition at Fabrica New Media Art Institution, Italy

Work: Balance

more to do….

Streaming Suitcase

PliegOS

Re:mote

Low Res

Skint Stream

Open Source Streaming Alliance

Open Channels for Kosovo

self.extracting.radio

ovalmaschina

Gema

simpel

Building Book Production Platforms p3

The editor

This series is based on HTML as a source file format for book production platforms. I have looked at many HTML editors over the years and can remember when the first in-browser editors appeared…it was a shock. Prior to that, all HTML creation was done by writing HTML code directly, then came fully-featured environments like FrontPage and Dreamweaver which allowed you to create HTML in a desktop app, then came wiki mark-up to liberate us all from the tedium of writing HTML, and then finally…the browser-based WYSIWYG editor…

It’s worth noting that the Wiki markup and WYSIWYG solutions were a different category to the previous solutions in that they weren’t designed for creating web pages, rather they were designed to enable the production of wikis and content management systems.

What-You-See-Is-What-You-Get at that time was a refreshing and liberating idea, a newcomer to this scene (although WYSIWYG as a concept and approach to document creation predates the web: the first true WYSIWYG editor was a word processing program called Bravo, created by Butler Lampson, Charles Simonyi and colleagues at the Xerox Palo Alto Research Center in the 1970s, and Simonyi later led the development of MS Word and Excel at Microsoft). Many WYSIWYG strategies have been explored, and many weaknesses unearthed, including the very important critique that What-You-See-Isn’t-What-You-Get, because the HTML created by these editors is unreliable, but more on this later…

As far as I can tell, the first HTML-based WYSIWYG editor was Amaya World, first released in 1996. I don’t know what WYSIWYG editor was the first to be embedded in a browser (if you know, please email me). However, I remember TinyMCE like it was a revelation. According to the Sourceforge page, they started building it around 2004 to solve the need to produce HTML in content management systems. It was, and is, a great product. The strategy at the time was pretty much to emulate rendered HTML within an HTML text field. TinyMCE (and the others that followed until contenteditable came along) used a heap of JavaScript to turn a simple editable text field into a window onto the browser’s layout engine.

alt.typesetting

From this point, a number of plugins were developed for use with WYSIWYG editors like TinyMCE to extend the functionality.

Some of these plugins ventured into the ever-important area of typesetting. TinyMCE even tried at times to make up for the lack of browser functionality in this area – for example, there were some early and workable attempts to bring equation editing into TinyMCE. I can’t remember exactly when it was, but it was surely around 2006/2007 that IMathAs had an experimental jab at this. I thought it was pure genius at the time as there was no other solution (I searched! a lot!). As far as I can remember they used a very clever round-tripping to achieve the result… essentially, since browsers didn’t then support Math, IMathAs supported inline equation writing using ASCIIMath syntax. When the user clicked out of the field, the editor sent the equation markup to the server, and the server returned the rendered equation as either a bitmap (PNG, JPG etc) or as vector graphics (SVG). It was genius and I built it into the workflow for FLOSS Manuals around 2010 because we wanted to write books with equations for software like CSound (produced in 2010/11). It worked great – the equations always looked a bit ‘bit-mappy’ but we could write and print books with equations using in-browser editors and HTML as source (the HTML produced included equations as images so we could render PDF direct from the HTML). Awesome.

It’s also worth noting that these days math typesetting has largely moved to the client side with the evolution of fantastic libraries like MathJax and KaTeX. These are JavaScript typesetting libraries designed to be included in web pages and render math from markup on the client side. There are one or two tools that still use server-side rendering, notably Mathoid, and this is often used to reduce the burden on the client’s browser. However, server-side rendering has possible additional bandwidth costs, as the client and server must remain in communication with each other, otherwise nothing will be rendered or displayed.

Mature solutions for math and other typesetting issues are only just starting to come online – no surprise to historians who inform us that notations such as math and music were the last to come online for the printing press as well. The first book to contain music notation post-Gutenberg, the Mainz Psalter, was printed with moveable type, and the music notation was added manually by a scribe. It seems the first thing to get right is the printing of text; all other notations come later in print systems. These solutions are slowly evolving – even music notation has its champions. However, what is really surprising is that Google, a company priding itself on being built from the ground up by math-heads, seems to struggle to bring native math typesetting to their own browser. I would say that is embarrassing.

Contenteditable

Moving on from typesetting… The initial WYSIWYG editors proved an admirable solution for many content management systems. The name persisted but the background technology fundamentally changed when the first implementations of the W3C contenteditable specification for HTML5 were brought to the browser. Contenteditable is an attribute that you can add to a number of HTML container elements (like ‘p’ or ‘div’) that makes their contents editable. So, in essence, you are directly editing the content in the browser rather than through some JavaScript text field trickery. This strategy might be called WYSI (What-you-see-IS). This strategy also spawned a whole new generation of editors leveraging this new native browser functionality. Aloha Editor was one of the first to grab the spotlight but there were many many others to follow. Additionally, the big legacy WYSIWYG editors such as TinyMCE and CKEditor added support for contenteditable although they were a little slow to the party.

Contenteditable at first promised a lot… native editing of the browser … phew … that certainly lowers the technology burden and opens the door to innovation and experimentation. Additionally, the idea that this is a read-write web suddenly comes more keenly into focus when you can just edit the web page right there and inherit all the same JavaScript and CSS that operates on the element you are editing. It’s good stuff.

Inevitably, though, some problems soon emerged. At first there were some wobbly things, such as not being able to place a caret (your text cursor) between block elements (eg between two divs), which was really a problem. Later a more serious issue was identified – contenteditable does not produce stable results across different browsers, such that if you edit a page in browser A, the resulting HTML could look different from what you would get by editing the same page in browser B. That might not affect many people – if you just want some text with bold and italics and simple things, then it doesn’t really matter… the HTML created will render results that will look pretty much the same across any browser. However, there are use cases where this is a problem.

In the world I work in at the moment – scholarly publishing – we don’t want a manuscript that contains inconsistent HTML depending on the browser it was edited in … it hurts us down the road when we want to translate that HTML into different formats (eg JATS) or if we want to render that HTML directly to PDF and get consistent results.

So, unfortunately, editors like CKEditor (used by many book production platforms including Atlas), and TinyMCE (used by Press Books AKA WordPress), or Aloha (used by Booktype 2) have to use a lot of JS magic to produce consistent HTML to overcome the problems with contenteditable, and this doesn’t always succeed. I would recommend reading this article from the Guardian tech team about these issues. You also may wish to look at this video from the Wikimedia Foundation Visual Editor core devs for the comments on contenteditable (audio is lousy, jump to 1.14.00) (readable subtitles can be found here).

A better way

So…what can you do? The answer is kind of threefold.

First choice: decide not to care – an entirely legitimate approach. You can still do huge amounts with these editors, and if you need to tweak the HTML now and then, so what? I can clean up the HTML by hand for a 300-page book in an hour, not too tough really and it enables me to cash in on all the other enormous gains to be had from a single-source HTML environment.

Second choice: provide client-side and server-side cleanup tools. Most editors have these built in, but it’s also good to implement backend clean up tools to ‘consistify’ the HTML at save-time (or at least at pre-render time).
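As a rough idea of what a backend ‘consistify’ pass might look like, here is a minimal Python sketch using only the standard library. The rules in it (mapping `b`/`i` to `strong`/`em`, keeping only a few attributes) are assumptions chosen for illustration, and the `consistify` function is hypothetical rather than taken from any of the editors mentioned above. A real cleanup tool would need far more rules and a proper HTML5 parser.

```python
from html import escape
from html.parser import HTMLParser

# Assumed house rules for illustration only.
TAG_MAP = {"b": "strong", "i": "em"}     # presentational tag -> semantic tag
KEEP_ATTRS = {"href", "id", "class"}     # every other attribute is dropped
VOID_TAGS = {"br", "hr", "img"}          # elements that never get a closing tag


class Consistifier(HTMLParser):
    """Re-serialises HTML with normalised tag names and filtered attributes."""

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self.out = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser already lower-cases tag and attribute names.
        tag = TAG_MAP.get(tag, tag)
        kept = sorted((k, v or "") for k, v in attrs if k in KEEP_ATTRS)
        attr_text = "".join(f' {k}="{escape(v)}"' for k, v in kept)
        self.out.append(f"<{tag}{attr_text}>")

    def handle_endtag(self, tag):
        if tag not in VOID_TAGS:
            self.out.append(f"</{TAG_MAP.get(tag, tag)}>")

    def handle_data(self, data):
        self.out.append(escape(data, quote=False))


def consistify(html: str) -> str:
    """Return a normalised copy of `html`; running it twice gives the same result."""
    parser = Consistifier()
    parser.feed(html)
    parser.close()
    return "".join(parser.out)
```

Run at save-time, something like this means `<B STYLE="font-weight:bold">Hi</B>` from one browser and `<strong>Hi</strong>` from another end up stored as the same markup.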

Third choice: find an editor that is designed to produce consistent HTML.

In my opinion, the third choice is the best long term option and the ‘right way’ to do things. Being able to produce reliable results with ease, and without having to do things twice, will make everyone’s life easier.

Thankfully there is a new editor on the scene that is designed to do just this – the Wikimedia Foundation’s Visual Editor. This editor was developed to help the Wikimedia Foundation solve an uptake problem … essentially there are not enough people these days prepared to sit around learning Wiki markup (which is pretty much a complicated scripting language these days). The resulting need to drop the threshold on the foundation’s contributions environment has resulted in the development of the Visual Editor (VE). New contributors can use an easy WYSIWYG-like environment instead of having to learn markup.

Obviously, the entire Wikimedia universe is already stored as wiki markup, so the editor needs to be able to translate between HTML and wiki markup on-the-fly (interestingly, it is actually part of a much larger plan to store all Wikimedia Foundation content in HTML). To do this there is a back end called Parsoid that converts markup to HTML and vice versa. Also, the HTML produced by the editor obviously needs to be tightly controlled, otherwise the results are going to be a mess when converting back to wiki markup. VE does this by replicating the content in its own internal (JSON) model and displaying the results in a contenteditable region. When the content is edited, the edits are strictly controlled by the VE internal rules, and then rendered to display. The result… consistent HTML is produced across any edit session regardless of the browser used…
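The general principle of editing a model and then rendering HTML from it can be shown with a toy example. This Python sketch is not VE’s actual data model or API: the node types, the mark names and the `make_run` and `render` functions are all invented here to illustrate why a controlled model gives the same HTML every time.

```python
from html import escape

# Hypothetical schema: the only block types and inline marks the model accepts.
BLOCK_TAGS = {"paragraph": "p", "heading": "h2"}
MARK_TAGS = {"bold": "strong", "italic": "em"}


def make_run(text, marks=()):
    """Create a text run; marks outside the schema are rejected, not passed through."""
    unknown = set(marks) - set(MARK_TAGS)
    if unknown:
        raise ValueError(f"marks not allowed by the model: {sorted(unknown)}")
    # Marks are stored sorted by name, so the nesting order never varies.
    return {"text": text, "marks": sorted(marks)}


def render(document):
    """Serialise the model to HTML. The same model always yields the same string."""
    parts = []
    for block in document:
        tag = BLOCK_TAGS[block["type"]]
        inner = ""
        for run in block["runs"]:
            html = escape(run["text"], quote=False)
            # Wrap from the last mark inwards so the first mark ends up outermost.
            for mark in reversed(run["marks"]):
                html = f"<{MARK_TAGS[mark]}>{html}</{MARK_TAGS[mark]}>"
            inner += html
        parts.append(f"<{tag}>{inner}</{tag}>")
    return "".join(parts)
```

Whether the user applied italic then bold, or bold then italic, the stored run is identical, so the output is `<strong><em>…</em></strong>` either way. The browser only ever displays what the model renders; it never gets to invent markup of its own.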

That’s pretty good news. This is one reason amongst many that the platform I am working on for the Public Library of Science has adopted VE software (we were the first to use it outside of the Wikimedia Foundation) and we are extending it considerably and contributing the results upstream to the VE repos. So far we have added table, equation, and citation plugins – all of which are in an early alpha state. If you want a peek, you can see some of the work here.

I highly recommend to anyone building a book platform, or any other kind of knowledge production platform, that you examine VE more closely. It is sophisticated software and has been carefully thought through. It is still relatively immature, and development is happening at an incredible pace, which can make testing new plugins against an unstable API a little arduous … still, it is a great solution. VE also approaches content editing in a way that will open the door to concurrent editing via operational transformations in HTML, which is a hard problem and currently only solved by Google and Wikidocs (recently acquired by Atlassian).

If you are in the process of choosing an editor, choose VE and contribute to the effort to make it not just the best Open Source solution to editing in the browser, but the best solution, full stop.

Many thanks to Raewyn Whyte for improving this article.

Building Book Production Platforms p2.

Amongst the core requirements for a book production platform are the source file format and the editor, and of course, these are intimately linked. The development team is usually faced with choosing the format first, then the editor.

Choosing a format

The choice is pretty much HTML? or not HTML?

Currently, HTML is the ruling choice of format for a web-based book production platform. HTML is native to the browser and has associated standards-compliant support, such as CSS and JavaScript. Conversely, not choosing HTML puts you in a bit of a hole and can create a lot of overhead.

It might be interesting to look back a little and learn from some others since there have already been projects in this space that started down non-HTML roads and then gave it up for HTML. Kathi Fletcher, originally the project manager and technical director for Connexions (now OpenStax) which built a custom XML editing environment for academic materials, later researched in-browser XML vs HTML editing environments for her Shuttleworth Foundation-funded OERPUB project. Kathi became convinced HTML was the way to go and did some great work on HTML editor usability with the Aloha HTML editor.

We have chosen to use HTML5 as the canonical format for open textbooks, because developers and tools are more plentiful for web technologies than XML technologies.

http://www.w3.org/2012/12/global-publisher/statements-of-interest/29-oerpub.html

The (closed source) O’Reilly Atlas platform also started with the complex AsciiDoc format (a lightweight markup language in the same family as markdown) and eventually awoke to the power of HTML in 2012.

HTML5-based authoring offers a streamlined production workflow for producing both print and digital outputs, facilitates “digital first” content development, and is a perfect fit for creating a WYSIWYG, web-based writing experience.

http://radar.oreilly.com/2013/09/html5-is-the-future-of-book-authorship.html

They then got an extra dose of religion and started a project called HTMLBook, which is a suggested ‘spec’ for a subset of HTML elements to be used in books.

So far I have not seen a book production platform travel the reverse direction, from HTML to something else. Instead, we are seeing more and more platforms start with, or change to, HTML as a source file format.

Markdown

Markdown is sometimes put forward as the way to go but I’m not going to go into that in too much detail here. I have talked about this elsewhere. The only additional thing I will say is that markdown causes even more issues for book production platforms than those included in that article. Namely, in an in-browser markdown environment, the markdown will most likely be displayed as rendered HTML next to the authoring pane. That is a huge amount of lost screen space and extra UI junk for no apparent gain. Think of the UX cost. If you don’t have that rendered display then you will most likely only see pure markdown in a text field with no rendered display. The user won’t really know if their document looks right until it is rendered somewhere down the line, which is also a tremendous cost to the user for no apparent gain. Markdown: all pain, no gain.

NB: There is a possible good use case for markdown as a helpful add-on for HTML WYSI editors but I will cover that later.

LaTeX

There is a more valid use case for LaTeX in the browser since some scientists and academics will never use anything else, and you’ll never convince them to adopt HTML regardless of the benefits. You are up against the great Church of Knuth and I don’t fancy your chances. If your audience is comprised of LaTeX addicts, then I think you have no choice other than to support that.

Many times I have talked about remedies for unstructured MS Word documents (for scientific manuscripts) only to have someone earnestly comment that if everyone just learned LaTeX we would be in a much better position… They might be right, but I’m pretty sure it’s never going to happen.

The preference for LaTeX is a legacy issue, and problematic, but needs to be dealt with. (Unfortunately, today’s Markdown heroes are growing legacy issues like this with each passing day, and that is going to cost us down the road).

Recently there has been some interesting work on in-browser LaTeX editing including the (closed source) Authorea platform and, most notably, the (open source) ShareLaTeX platform. ShareLaTeX round trips the LaTeX syntax displayed and edited in a text area (in the browser), renders that to a bitmap on the server, and returns it to the browser for a side-by-side ‘WYSIWYG’. The effect is that you can see a just-in-time rendered view of the LaTeX as you type. It’s a neat trick and effective if you insist on LaTeX in a web-based platform. Then you just have to live with the UI costs. However, you only need this approach if you wish to support the full LaTeX syntax. If you wish to just support LaTeX equations, you can use an HTML editor with a LaTeX plugin based on MathJax or the Khan Academy’s KaTeX (and there are some other solutions such as Mathoid).

Incidentally, if you need to support full LaTeX I highly recommend checking out ShareLaTeX over WriteLaTeX. They both have the same approach but WriteLaTeX is proprietary whereas you can pick up the ShareLaTeX code and integrate it straight away. You could even build your own ShareLaTeX-like interface, it’s not too tricky – a colleague, Rizwan Reza, and I (Riz did all the hard work) managed to develop a workable prototype in about 2 days, but there are many gotchas setting up the LaTeX compiler correctly.

Not many book projects need LaTeX, so I will leave this as an interesting edge case. There are solutions if you need it, but not many people need it.

XML

I think I will just leave it to the words of the brilliant Dave Cramer (Hachette Book Group):

So we’ve chosen to describe our content with HTML, and build our production system around HTML.

When I tell people that, they smile condescendingly, and chuckle a bit. “That’s cute. Why don’t you use real XML?”

I then ask them what you can do in Docbook (or TEI, or NLM) that you can’t do in XHTML? I haven’t heard a good answer to that question yet. XHTML is XML, by definition. Calling something “para” rather than “p” doesn’t get you anything, except carpal tunnel syndrome and invoices from consultants

The problem with non-HTML XML is that it is essentially just XML the browser can’t use. Hence you lose all that other good stuff like WYSI editors, CSS design tools, cool tricks with JavaScript, and all the cool tools that are being developed for HTML. XML just can’t compete, plus you are going to need to convert the XML into HTML anyway. So don’t make life more complicated than it already is – continue your love affair with XML as long as it’s XHTML!

HTML

HTML is king in the browser and it gives you all you need to make books. I don’t want to spend a lot of time arguing the merits of HTML in this post as there is a lot to say and I want to bring that in at other points of the conversation. But in brief:

- HTML is supported by JS and CSS.

- The DOM is known natively by the browser.

- HTML is standards-based.

- It is straightforward.

- HTML is easy to read and easy to clean.

- HTML is the most popular file format on the planet.

- You can use HTML to build structure in documents with assigned class and id values, or microdata formats.

- HTML is the native file format for EPUB.

- PDF can be rendered directly from HTML in the browser (more on this later).

- HTML can be paginated in the browser.

- CSS is moving towards supporting more and more page based elements.

- The browser can act as a design environment.

- You can create real what-you-see-is (WYSI) production environments.

- Basic editing is built into the format itself.

- HTML is supported by an enormous number of tools for conversion (in and out).

- HTML is supported by an enormous repository of examples (the web).

- HTML is cheap to develop with.

- Even book designers are getting used to it.

- Some schools teach it.

- It has a million free tutorials online to help you use it.

- A lot of people know HTML.

- HTML is supported by a rapidly proliferating body of JavaScript libraries for typography, graph production, animation, interactions, dynamic rendering, etc.
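As a rough illustration of the point in the list about building structure with class and id values, the sketch below pulls a chapter outline out of a small HTML fragment. The class names (`chapter`, `chapter-title`), the ids and the chapter titles are all hypothetical; a real production system would pick its own conventions.

```python
from html.parser import HTMLParser

# Invented sample markup; class and id values carry the book structure.
HTML = """
<section class="chapter" id="ch1">
  <h1 class="chapter-title">Beginnings</h1>
  <p>First paragraph.</p>
</section>
<section class="chapter" id="ch2">
  <h1 class="chapter-title">Endings</h1>
  <p>Last paragraph.</p>
</section>
"""


class OutlineParser(HTMLParser):
    """Collect (chapter id, title) pairs using class and id attributes."""

    def __init__(self):
        super().__init__()
        self.outline = []
        self._current_id = None
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        classes = (attrs.get("class") or "").split()
        if tag == "section" and "chapter" in classes:
            self._current_id = attrs.get("id")
        elif tag == "h1" and "chapter-title" in classes:
            self._in_title = True

    def handle_endtag(self, tag):
        if tag == "h1":
            self._in_title = False

    def handle_data(self, data):
        # Only text inside a chapter title heading is recorded.
        if self._in_title and data.strip():
            self.outline.append((self._current_id, data.strip()))


parser = OutlineParser()
parser.feed(HTML)
print(parser.outline)  # [('ch1', 'Beginnings'), ('ch2', 'Endings')]
```

Nothing here is special tooling: the structure is just attributes on ordinary elements, so a table of contents, an EPUB manifest or a print stylesheet can all hang off the same markup.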

The basic idea really comes down to this.

- HTML is the cheapest format of our time.

- HTML is the most popular format of our time.

- HTML is the networked document format of our time.

Increasingly HTML is the way stories are told, whether that is in books or on the web. It’s a trite analogy perhaps, but HTML is the paper of our time. As Dave Cramer says:

why start with something other than HTML, when you have to turn it into HTML anyway?

It should be noted that Cramer also turns HTML into paper, and the Hachette Book Group have produced many beautiful paper books using HTML as the source format. Many of these books you will now find in the best-selling sections of your local brick and mortar bookstore.

Other print producers are also using HTML as the source. Print-on-demand services, used to producing very ugly books by ingesting MS Word and dealing with all that ugly conversion, are also adopting HTML production environments. Books on Demand, Germany’s largest Print on Demand service, adopted Booktype so their customers could have an easy in-browser book production environment. The source format is HTML but the users don’t know that, and the books look better. That’s the beauty of HTML.

Finally, helped a lot by the efforts of Dave Cramer and the Hachette Book Group, Sourcefabric, the people at O’Reilly, and others adopting HTML, we might be starting to see the very beginning of the changing of the guard.

HTML is the way to go for Book Production Platforms. If you choose another format you will find you inherit a lot of costs and additional overheads and, sadly, you will soon be left behind. There is just no format going forward at the same speed as HTML. Not even close. So, my advice is to first ask the question – can HTML do what you need? Push your team to answer that question. Will format X give you anything HTML can’t? As an exercise ask your team to prove HTML is a bad choice, and if the answer is not-HTML, then contact me and let me try and talk you into it!

What’s Wrong with Markdown?

Markdown (.MD) is a text format that lazy people use to write HTML. Unfortunately once those same lazies are used to the format, their eyes glaze over and they start to believe .MD is the solution for all the world’s problems. They have a lot in common with githubbies who think github is the solution for book production, open source, bad democracies etc.

.MD files are common in the geek world. Programmers love them. The design of .MD is simple and efficient. If you know the syntax, you can write basic text documents with headers and bullet lists, blockquotes, bold & emphasis etc. pretty quick. That makes it a handy tool for the elite of text workers – programmers – to develop simple text documents, quickly. So it’s a popular format for writing, for example, human-readable README files that tell you a little bit about the software you are about to install. However, that is where the use case ends. .MD is not a useful format for many other cases unless you want to prove to the programmers that you too can do tricky stuff in plain text. For the rest of us, it has little value.

Markdown was originally developed by John Gruber in 2004, and he has published some of his reasons for developing it. The original purpose of .MD is that it can be read without converting it to another format like HTML. Presumably, John Gruber wanted a format that could be read easily by the eye, allowing the user to quickly understand which part of a text is a heading, which part is a list, which part is a paragraph, etc. .MD is not designed to be rendered for display; it is meant to be the display.

For example, a list written in .MD would look something like this:

* item 1

* item 2

* item 3

That actually looks like a list. In HTML we would do something similar and it would look like this:

- item 1

- item 2

- item 3

An asterisk looks like a bullet, so there is little cognitive drag here. Pretty readable.

However, things start to fall apart pretty fast. Can we really parse at speed, for example, nested bold and emphasis in .MD like this:

The quick *brown fox* **jumps** *over* the lazy **dog**

Is that or the following easier to read?

The quick brown fox jumps over the lazy dog

I’m going to say the second is easier to read. Way easier to read. So, that is just the start of the problems; from here on in it goes downhill pretty quick for use cases beyond simple READMEs.
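For reference, the markup that the asterisk-laden sentence stands in for can be produced with a few lines of code. This is a toy sketch, not a real Markdown implementation: it only knows the two asterisk rules used in the example and ignores escaping, nesting and underscores.

```python
import re


def render_emphasis(text: str) -> str:
    """Convert **bold** and *emphasis* markers to HTML tags (toy rules only)."""
    # Handle the double asterisks first so the single-asterisk
    # rule does not swallow them.
    text = re.sub(r"\*\*(.+?)\*\*", r"<strong>\1</strong>", text)
    text = re.sub(r"\*(.+?)\*", r"<em>\1</em>", text)
    return text


source = "The quick *brown fox* **jumps** *over* the lazy **dog**"
print(render_emphasis(source))
# The quick <em>brown fox</em> <strong>jumps</strong> <em>over</em> the lazy <strong>dog</strong>
```

Either way a machine has to sit between the writer and the reader before the sentence becomes pleasant to read, which rather undermines the claim that the raw text is the display.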

Markdown isn’t designed for creating HTML

So, let’s assume we can agree Markdown is readable in a very limited number of scenarios – and move on to rationalise (grasp at straws) other needs for the format. That is pretty much where we are today, with the big selling point being that Markdown is an easy way to create HTML. But let’s face it, even programmers don’t like reading raw Markdown and even in the most popular .MD repository of them all – github – the Markdown files are rendered in the browser as nicely formatted HTML. Great! A use case we can stand behind – use Markdown to create HTML.

However, .MD is really a pretty bad way to create HTML. Firstly, you need something to convert the .MD into HTML. So if you use just a plain text editor to create a .MD file and load it into the browser you will see just plain, boring Markdown. No nicely formatted documents for you. There are tools that programmers like, and so the rest of us are also expected to like them, for converting .MD to .html. After all, according to the technically gifted, converting a .MD file to HTML is “really really easy.”

One of the most common tools for doing this is Pandoc. Pandoc is great software and extremely useful. However, having to install and learn how to run Pandoc, a complex tool at best, to convert a text file to something readable sounds a little like the long way home. And that’s not where the rot ends. Far from it: the rot has only just set in and the worst is to come. If everyone were to use Pandoc to convert .MD to HTML we would have consistent conversion results. Unfortunately, that’s not what happens. Each to their own, and we have a lot of different tools with which to do these conversions, and hence we have different results created from the same source. Ugh. That is a file format nightmare right there.
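To see how the same source can end up as different HTML, consider two made-up converters that disagree on a single rule: what a lone line break means. Neither function models any real tool; both are hypothetical and exist only to show the shape of the problem.

```python
import html


def convert_soft_breaks(md: str) -> str:
    """Hypothetical converter A: a single newline is just whitespace."""
    paragraphs = md.strip().split("\n\n")
    return "\n".join(
        f"<p>{html.escape(' '.join(p.split()))}</p>" for p in paragraphs
    )


def convert_hard_breaks(md: str) -> str:
    """Hypothetical converter B: a single newline becomes a <br>."""
    paragraphs = md.strip().split("\n\n")
    return "\n".join(
        "<p>"
        + "<br>".join(html.escape(line.strip()) for line in p.split("\n"))
        + "</p>"
        for p in paragraphs
    )


source = "Roses are red\nViolets are blue\n\nThe end"
a = convert_soft_breaks(source)
b = convert_hard_breaks(source)

print(a)
print(b)
print(a == b)  # False
```

One rule, one tiny document, two different results. Multiply that by every rule a converter has to decide on and the nightmare comes into focus.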

And let’s say you want to add a little colour to your text. Perhaps a highlight? Forget about it. Markdown lacks the tools to enable you to do it. Pandoc might help, however – let’s add some colour highlights to a code block with this easy to remember command from the Pandoc manual:

pandoc code.text -s --highlight-style pygments -o example18a.html

So, first of all, do you know what a command is? Do you know what a terminal is? Happy using one? Oh… that’s actually not OK for you? No problem, there are plenty of online introductory courses on the command line. So before you write that funding document, “about” page on your website or scholarly research document, just whip through a quick course on the command line and you’ll be all set! (Don’t forget to read the sections about installing software from the command line; you’ll need them to get Pandoc working.)

Problems with conversion tools aside – Markdown struggles to find a nice way to represent HTML. It’s just a bad fit. Use Markdown for creating HTML and you will find all sorts of little formatting gotchas that will cause you frustration. It is why many markdown environments/conversion tools also support HTML tags.

All HTML is valid Markdown. If you’re stuck, not able to format your content as you would like (for example using tables), you can always use plain HTML instead of Markdown. http://support.ghost.org/markdown-guide/

So if you want to really write HTML with Markdown you have to, well, write HTML. Klaro.

Markdown was never intended for writing HTML. It wasn’t designed that way and for good reason – it doesn’t do it well.

Codified text

As mentioned above, by design, the original Markdown has a very small subset of elements that can be converted to HTML. As John Gruber says in his philosophy:

Markdown is not a replacement for HTML, or even close to it…The idea for Markdown is to make it easy to read, write, and edit prose.

So, Markdown is not actually designed to be a good format for creating HTML. And it lives up to its design. It is for this reason that some Markdown formats ‘extend’ Markdown to include HTML code, and there are also other forks of Markdown that do some really weird stuff that I can hardly explain. For example, Ghost Markdown, the version of Markdown used for the (Open Source) Ghost blogging platform, tries to wrangle image formatting into Markdown. To place an image you have to write the following:

![]()

Intuitive, right? Nope.

The above is really a leap from ‘readable’ to ‘codified’. It is codified text and in order to be able to work with it, you need to know how to de-code the text… I’m sorry, but I just don’t get it. Markdown adds another level of codified complexity which I then need to de-code first (according to non-standardised rules written in some help file somewhere, if I’m lucky), so that I can then sally forth and read the content? No thanks.
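For the curious, the de-coding step looks something like the sketch below: an exclamation mark, then the alternative text in square brackets, then the image source in parentheses. The regular expression is a simplification (it ignores optional titles and escaped brackets) and the file name `fox.png` is invented.

```python
import html
import re

# Simplified pattern: ![alt text](source) with no title and no escapes.
IMAGE = re.compile(r"!\[(?P<alt>[^\]]*)\]\((?P<src>[^)\s]*)\)")


def render_images(text: str) -> str:
    """Replace image shorthand with the <img> tag it is code for."""

    def to_img(match: re.Match) -> str:
        alt = html.escape(match.group("alt"), quote=True)
        src = html.escape(match.group("src"), quote=True)
        return f'<img src="{src}" alt="{alt}">'

    return IMAGE.sub(to_img, text)


print(render_images("![A red fox](fox.png)"))  # <img src="fox.png" alt="A red fox">
print(render_images("![]()"))                  # <img src="" alt="">
```

Which of the two brackets holds the file and which holds the description is something you simply have to know; nothing in the punctuation tells you.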

Say no to codified text.

Non-standardised formats suck

Efforts to take Markdown and extend it to meet a wider variety of formatting needs are actually where the big trouble starts. Markdown has gone off in a hundred-and-one different directions, each with its own syntax.

That means, if I want to write a Ghost blog (I love Ghost by the way, no disrespect to them) in Markdown (their required format) then it is not enough to learn Markdown. I must learn Ghost Markdown, their particular reading of what a good Markdown format is. So, that leads us to one of the really big problems. Markdown is not standardised.

Can any of us think of another non-standardised text format and where that leads us? Do MS Word and ‘world of pain’ ring any bells? Yes, Markdown is non-standardised and that is a very big no-no. It is, in fact, quite shocking that programmers, big on standards, do not quite see that by advocating Markdown they advocate dropping some central best practices. Can’t say anything more about that really.

But Markdown is structured!

I often hear the term “structured text” when referring to Markdown. For example, the opening line from the CommonMark preamble:

Markdown is a plain text format for writing structured documents

Sounds good doesn’t it? Sounds very techy and convincing. But what is structured text? Structured text means basically that we can see if something is a heading or something is a bulleted list, or something is a paragraph. Huh? But that describes just about any text document. Structured text is the basic requirement of any text you create – without it, you just have a flat plain-text document with no headings, no bullet lists etc. So… we might as well start every sentence about documents designed to be read as being ‘structured’. I think tomorrow I will go and buy a structured book. Or perhaps I will write a structured narrative on my text-structuring word processor. Excuse me word processor sales person, do you have structured text word processors? Ugh. Meaningless.

What is left?

Markdown is good for limited use cases. Use it for README files on github.