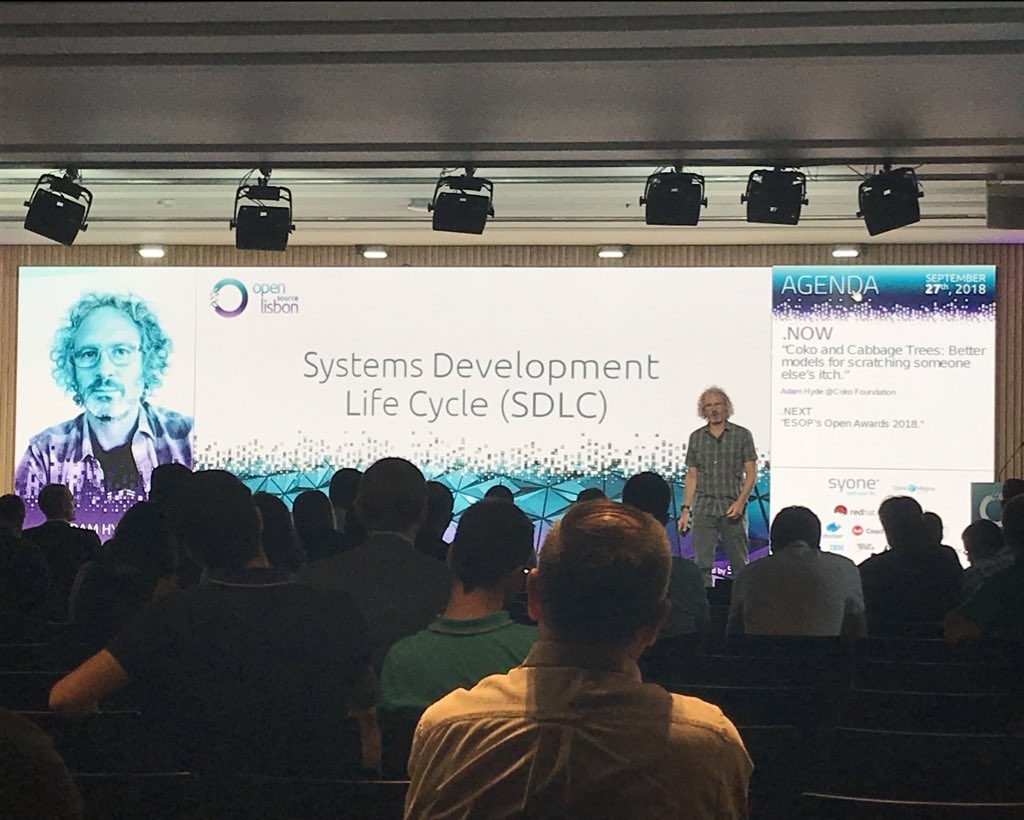

Just presented at Open Source Lisbon.

And these are the slides : lisboa_final_2

I’ve been pondering some stuff in preparation for a presentation at Open Source Lisbon this week. In essence, I’m trying to understand Open Source and how it works… not to say I don’t know how Open Source works, we do it well at Coko… I mean to zoom out a level and really understand the theory and not just the mechanics. It is one thing to facilitate a bunch of people to meld into a community, it is quite another to understand why that is important, and what the upsides and downsides are on a meta level. If you take the ideals out and look purely at the mechanics from a bird’s eye view, then what, ultimately, makes Open Source a better endeavor than proprietary software? What exactly is going on?

I have some clues… some threads… but while each thread makes sense when you consider it on its own, when you combine them all it doesn’t exactly make a nice neat little montage. Or if it does, I am currently not at the right zoom level to see it clearly. Instead I see lots of different threads criss-crossing each other.

Ok… so enough rambling… what is it I’m trying to understand? Well, I think when you embark on making software there is this meta category of methods known as Systems Development Life Cycles (SDLC). It’s a broad grouping that describes the path from conception of the idea, through to design, build, implementation, maintenance and back again etc…

Under this broad umbrella are a whole lot of methods. Agile is one which you may have heard of. As is Lean. Then there are things like Joint Application Design (JAD), Spiral, Extreme Programming, and a whole lot more. Each has its own philosophy and if you know them you can sort of see them like a bookshelf of offerings… you browse it and intentionally choose the one you want. Except these days people don’t really choose, they go with the fashion. Agile and Lean being the most fashionable right now.

The point is, these are explicit, well documented, methods. You can even get trained and certified in many of them.

But… Open Source doesn’t have that. There isn’t a bookshelf of open source software development methods. There are a few books, with a few clues, but these are largely written to explain the mechanics of things and they seldom acknowledge context. I say that because the books I have read like this make a whole lot of assumptions and those assumptions are largely based on the ‘first wave’ of Open Source – the story of the lone programmer starting off and writing some code then finding out it’s a good idea to then build community instead of purely code therefore magnifying the effect. A la Linus Torvalds.

But it’s very down-on-the-ground stuff. I’m thinking of Producing Open Source by Karl Fogel, and The Art of Community by Jono Bacon. Both are very well known texts and I have found both very useful in the past. But they don’t provide a framework for understanding open source. I’ve also read some research articles on the matter that weren’t very good. They also tend to regurgitate first generation myths, as if open source is this magic thing, and they struggle to understand ‘the magic’. In other words, I miss a ‘unified theory’, a framework, for open source…

I think it is particularly important these days as we are beyond the first generation and yet our imaginations are lagging behind us. There are many more models of open source now than when Eric Raymond described a kind of cultural method which he referred to as ‘the bazaar’ in his The Cathedral and the Bazaar. We now have a multitude of ways to make open source, so the license no longer prescribes a first generation approach. Producing open source is much richer than that these days.

As it happens, Raymond’s text does attempt to provide some kind of coherent theory about why things work although it often mixes ‘the mechanical’ (do this) with an attempt to explain why these processes work. It doesn’t do a bad job, there is some good stuff in there, but it varies in level of description and explanation in a way that is uneven and sometimes unsatisfying. Also, as per above, it only addresses the first generation ‘bazaar’ model. While this model is still common today in open source circles, it needs a more thorough examination and updating to include the last 15 years of other emergent models for open source. There are, for example (and to stretch the metaphors to breaking point), many cathedral models in open source these days that seem to work, and some that look rather like bazaar-cathedral hybrids.

Recently Mozilla attempted to make some sense of these ‘new’ (-ish) models with their paper on ‘archetypes’:

https://blog.mozilla.org/wp-content/uploads/2018/05/MZOTS_OS_Archetypes_report_ext_scr.pdf

Here they kind of describe what reads as Systems Development Life Cycle methods… indeed they even refer to them as methods:

The report provides a set of open source project archetypes as a common starting point for discussions and decision-making: a conceptual framework that Mozillians can use to talk about the goals and methods appropriate for a given project.

They have even given them names such as ‘Trusted Vendor’ and ‘Bathwater’ and the descriptions of each of these ‘types’ of open source project sound to me like they are trying to make a first stab at a taxonomy of open source cultural practices, so you can choose one, just like a proprietary project would choose, or self identify as, Agile or Lean. In fact, the video on the blog promoting this study pretty much says as much. It’s Mozilla’s attempt at constructing a kind of SDLC based on project type (which is like choosing a ‘culture’ instead of a method).

However it doesn’t quite work. The paper compacts a whole lot of stuff into several categories and it is so dense that, while it is obvious a lot of thought has gone into it, it is pretty hard to parse. I couldn’t extract much value of what one model meant vs the other, or how I would identify if a project was one or the other. It was just too dense.

Mozilla has effectively written a text that describes a number of different types of bazaars, and also some cathedrals, without actually explaining why they work – except in a few pages that sort of off-handedly comment on some reasons why Open Source works. I’m referring to the section that provides some light assertions as to why Open Source is good to:

But this is the important stuff… if these things, and the other items listed in that section, are true (I believe they are), then why are they true? Why do they work? Under what conditions do they work and when do they fail?

In other words, I think the Mozilla doc is interesting, but it is cross cutting at the wrong angle. I think a definition of archetypes is probably going to yield as many archetypes as there are open source projects, so choosing one archetype is a hopeful thought. Also the boundaries seem a little arbitrary. While the doc is interesting, I think it is the characteristics listed in the ‘Benefits of Open Source’ section of the Moz doc that are the important things to understand. This is where a framework could be built that would describe the elements that make open source work… allowing us to understand in our own contexts what things we may be doing well at, what we could improve, what we should avoid, useful tools etc.

The sort of thing I’m asking for is a structured piece of knowledge that can take each of the pieces of the puzzle and put them together with an explanation of why they work…not just that they exist and, at times, do work, or are sometimes/often grouped together in certain ways. An explanation of why things work would provide a useful framework for understanding what we are doing so we can improvise, improve our game, and avoid repeating errors that many have made before us.

With this a project could understand why open source works, and then drill down to design the operational mechanics for their context. They could design / choose how to implement an open source framework to meet their needs.

Such texts do exist in other sectors. Some of these actually could contribute to such a model. I think, for example, Diffusion of Innovations by Everett Rogers is such a text, as is Open Innovation by Henry Chesbrough. These texts, while focused on other sectors, do explain some crucial reasons why open source works. Rogers explains why ‘open source can spread so quickly’ (as referenced in one line in the Moz doc), and Chesbrough provides substantial insights into why innovation can flourish in a healthy open source culture, and how system architecture might play a role in that.

Also the work of John Abele is important to look at and his ideas of collaborative leadership. As well as Eric Raymond’s text…but it all needs to be tied together in a cohesive framework…

This post isn’t meant to be a review of the Moz article. It reflects the enjoyment I have gained from understanding elements of open source by reading comprehensive analysis and explanation of phenomena like diffusion and open innovation. These texts are compelling and I have learned a lot from them, which has helped when developing the model for Coko because, at the end of the day, there is no archetype that exactly fits. It is better to construct your own framework, your own theory of open source, to guide how you put things together, than to try to second guess and copy another project from a distance. It’s for this reason that I would love to have a unified framework for open source that takes a stab at explaining why all these benefits of open source work, so I can decide for myself which ones fit, or how they fit, with the projects I am involved with.

In addition, I have the following goals:

The goals are as important as the ingredients. After all, if you don’t have lemons, you shouldn’t be trying to make lemonade.

I am thinking this exploration could have a working title ‘Collaborative Product Development’.

My first step is to look at historical methods for software product development and see what their approaches have been. The intent being to learn more about how software development processes are targeted at specific objectives and operational contexts. I think this will help develop an understanding of why certain methods are effective for this specific case, which in turn will help me understand which bits can be discarded.

I’ll keep this particular page in my Ghost blog as the notepad for my evolving thoughts.

I think I need to cover at least the following questions for each:

In addition, I need enough information to succinctly describe the methodology and to provide some praise and critique.

“I think what the waterfall description did for us was make us realize that we were doing something else, something unnamed except for ‘software development.’” – Gerald M Weinberg

The history of Software Development Lifecycles is a history of experiment, description, and rejection.

Systems Development Life Cycle is what I refer to here as ‘method’ or ‘methodology’. It describes any methodology used as a framework for developing software. While all the methods described below can be used in a pure form, often SDLCs are combined and hybridised. Sometimes for better, sometimes for worse. However, it is interesting to note that the basic framework addresses the following needs one way or another:

Some SDLCs are cyclical, some incremental, some strict, others loose, linear etc

The interesting question to me is what can we learn from these if we want to develop a methodology (SDLC) for developing products with free licences and the principles of open source.

Also interesting is that there have been attempts to define OSS as a SDLC. See http://www.sersc.org/journals/IJSEIA/vol8no32014/38.pdf

http://docplayer.net/9016010-A-review-of-open-source-software-development-life-cycle-models.html

Some things that I notice:

So, I think part of the framing is that OSS ‘SDLCs’ are about creating the right culture and employing tools to shape that. SDLCs (in the traditional form) are about employing a structured method and making sure everyone adheres to it. So, I would prefer to think of the kind of methodology I am looking for as a ‘Product Development Culture’, not a Software Development Life Cycle. The former is a culture, the latter a structured process. The former can be shaped, the latter employed. Best to pick the right conditions for success from the beginning 😉 To improve OSS PDC we need the right tools to shape culture. This is the culture-method I am after.

The interesting thing here is that the history of SDLC highlights the problem with history. It is easy to tell a story of a timeline and certain events happening in sequence, almost like building blocks in time. However that isn’t how things happen. We can, for example, set up a nice story about how ‘Waterfall’ came from a pre-software engineering era, and it was simply applied to software development, and then in the 1980s, people started rebelling against this legacy process and developed more iterative methods. However, while there is some level of truth in that story, it also hides the complex, parallel, unfolding, emergent processes and histories that are the ‘full telling of this story’. For example, iterative development in software is recorded as happening as early as 1957 (and certainly in the 60s for Project Mercury), but the process evolved out of non-software projects as early as the 1930s. As Craig Larman and Victor Basili noted, “We are mindful that the idea of IID [Iterative and Incremental Development] came independently from countless unnamed projects and the contributions of thousands and that this list is merely representative. We do not mean this article to diminish the unsung importance of other IID contributors.”

http://www.craiglarman.com/wiki/downloads/misc/history-of-iterative-larman-and-basili-ieee-computer.pdf

Waterfall, meanwhile, is often said to have been born in 1970 (Royce, Winston (1970), “Managing the Development of Large Software Systems”). So before we even had waterfall for programming, we had iterative development, as this article so nicely highlights: http://www.craiglarman.com/wiki/downloads/misc/history-of-iterative-larman-and-basili-ieee-computer.pdf

What this highlights is that these ideas and methods have evolved in a many faceted, evolutionary manner. There is no simple Star Wars narrative here of good vs evil, although that is often how the history of SDLC is told.

“In my experience, the simpler method has never worked on large software development efforts…” – Winston Royce

Waterfall in software dev was actually developed in the 1970s, which is late for computing. It was first described by Winston Royce in 1970 (“Managing the Development of Large Software Systems”).

http://ig.obsglobal.com/2013/01/software-history-waterfall-the-process-that-wasnt-meant-to-be/

The steps included:

1. Design comes first

2. Document the design

3. Do it twice

4. Plan, control and monitor testing

5. Involve the customer

Interestingly, it has nothing to do with how open source generally works 🙂 Except! ‘Involve the customer’ is often also at the end of OSS product development.

Even more interesting… Waterfall as we know it now is really what Royce was criticising when he described the model, i.e. a very strict, sequential process: conception, initiation, analysis, design, construction, testing, implementation, and maintenance. It is a one-way process, with no going back to an earlier step at any stage. This is known as ‘single pass’ or ‘single step’ Waterfall and differentiates it from Royce’s method that advocated building twice (2 pass).

A foundation of Waterfall, as we know it now, is the argument that the more work done gathering requirements and designing, the more time you save and risks you avoid when it comes to building.

Mid 80s, with origins outside of software, namely the 1986 article “The New New Product Development Game”, which used rugby as a metaphor for product development dynamics and specifically called out the term ‘Scrum’ as a description of small mobile teams.

Targeted at the first stage of the SDLC (requirements gathering and design). Before the development of JAD, business requirements were gathered by interviewing stakeholders. JAD suggested the solution could be jointly designed. It is a very prescriptive, rather strict methodology developed by Chuck Morris and Tony Crawford (IBM) in 1977. The goal of the methodology is to develop business requirements, i.e. to accelerate the design phase and to increase project ownership. JAD has very specific roles:

All participants have equal say.

There are 8 key steps in JAD:

The workshop is the centerpoint for this methodology. JAD turns meetings into production orientated workshops (common also in agile etc). The basic idea being that by combining the users and IT staff a better product is designed.

Development lifecycle includes:

The method started with everyone in one room but is now more commonly used with remote collaboration software. It puts emphasis on a diverse team, representing all stakeholders.

Earlier version of “design thinking” or Theory (Japanese)?

“It is critical that the facilitator be impartial and have no vested interest in the outcome of the session.”

“the focus of attention should always be on the JAD process itself, not the individual facilitator.”

“There is a school of thought that trained facilitators can successfully facilitate meetings regardless of the subject matter or their familiarity with it. This does not apply, however, to facilitating meetings to build information systems. A successful IS facilitator needs to know how and when to ask the right questions, and be able to identify when something does not sound right.”

http://www.umsl.edu/~sauterv/analysis/488f01papers/rottman.htm

…

“Many of the principles originating from the JAD process have been incorporated into the more recent Agile development process. ”

“Where JAD emphasises producing a requirements specification and optionally a prototype, Agile focusses on iterative development, where both requirements and the end software product evolve through regular collaboration between self-organizing cross-functional teams.”

http://www.chambers.com.au/glossary/jointapplicationdesign.php

Initially developed by Barry Boehm as a response to Waterfall. RAD was a general approach (a category of methods) offered as an alternative to Waterfall. In 1986, Boehm articulated the Spiral methodology, which is considered the first RAD method. Confusingly, another RAD method (which also subscribes to the general RAD approach) was later developed by James Martin and named RAD by him.

Waterfall was developed out of pure engineering where strict requirements for a known problem lead to an inflexible design and build. Software however, could radically alter the landscape and hence, in a sense, change the problem space. So the process had to be more flexible, reflective etc. Hence RAD recognised that information gathered during the life of a project had to be fed back into the process to inform the solution.

RAD is generally used for the development of user interfaces and placed a lot of focus on the development of prototypes over rigorous and rigid specifications. Prototypes were considered a method to reduce risk as they could be developed at any time to test out certain parts of the application. The emphasis was on finding problems sooner rather than later. Additionally, users are better at giving feedback when using a tool rather than thinking about using the tool, and prototypes can evolve into working code and be used in the final product. The latter could lead to users getting useful functionality in the product sooner.

RAD is considered a ‘risk driven process’ ie. it identifies risk in the hopes to reduce it.

The addition of functionality incrementally is considered an advantage in user facing products as they can access certain functionality sooner. This iterative approach also produces some of the software earlier and hence there is something to show sooner.

Some disadvantages could be an ‘ad-hoc’ design, and the continual involvement of users might actually be difficult to achieve and may either slow down production or alienate stakeholders.

This method had four critical steps which were used repeatedly. Hence the project progressed in increments.

The spiral notion refers to the cyclical process PLUS as the project goes forward more knowledge is gained about the project. For a good description of this dynamic see https://www.youtube.com/watch?v=mp22SDTnsQQ

In 1991 James Martin took these ideas and turned RAD processes into another methodology, confusingly called RAD.

There are four phases in the James Martin-defined RAD methodology:

Developed out of the RAD group.

Agile manifesto – 2001

“The basic idea behind iterative enhancement is to develop a software system incrementally, allowing the developer to take advantage of what was being learned during the development of earlier, incremental, deliverable versions of the system. Learning comes from both the development and use of the system, where possible. Key steps in the process were to start with a simple implementation of a subset of the software requirements and iteratively enhance the evolving sequence of versions until the full system is implemented. At each iteration, design modifications are made along with adding new functional capabilities. ” Vic Basili and Joe Turner, 1975

http://www.craiglarman.com/wiki/downloads/misc/history-of-iterative-larman-and-basili-ieee-computer.pdf

Agile is interesting because it is not a method in itself, it is a proposal for a certain kind of development culture, under which various methodologies have come to find a home. Agile, in other words, is a set of values or principles.

Dave Thomas has made a good presentation on the problems with turning Agile into a complex method when actually it was intended as a set of values. https://www.youtube.com/watch?v=a-BOSpxYJ9M

…and his blog post: http://pragdave.me/blog/2014/03/04/time-to-kill-agile/

Many of the famous methodologies for Agile (XP, Crystal, Adaptive Software Development, and SCRUM) all point to specific parts of the SDLC and all existed before the term agile was applied to them. These early ‘Agile Methodologies’ were originally referred to as ‘light’ or ‘lightweight’ methodologies, which was really a way to label them in contrast to much more strict, linear and formal methodologies like Waterfall.

http://agilemanifesto.org/history.html

In the first edition of “Extreme Programming Explained”, for example, lightweight is used to describe this kind of SDLC:

“XP is a lightweight methodology for small-to-medium-sized teams developing software in the face of vague or rapidly changing requirements.” (Kent Beck)

It’s also interesting to note that this ‘Agile’ shift in approach to development came around the first .com bubble and reflects the shifting business environment where speed to market was seen as a factor in business success. Hence the need for SDLC methods that supported faster product development life cycles.

So, proving once again that one of the most difficult things for programmers to do is to name things, a small group gathered in 2001 to replace this informal naming with something a little more descriptive and positive (programmers didn’t like saying they employed ‘light’ or ‘lightweight’ methods as this implied a lack of concern or rigour over the quality of their work and ability to do it). The group came up with the term Agile to retro-name an existing category and also identified the core characteristics of those methodologies that would conform to the Agile way. This became known as the Agile manifesto and it is as follows:

We are uncovering better ways of developing software by doing it and helping others do it.

Through this work we have come to value:

Individuals and interactions over processes and tools

Working software over comprehensive documentation

Customer collaboration over contract negotiation

Responding to change over following a plan.

http://www.agilemanifesto.org/

So, in a sense, Agile is a framework, not a methodology. Methodologies conformed to it or, later, evolved from it. It is also interesting as the manifesto not only describes what it is, but what it is not. While this helps understand each value, it also shows that the manifesto very much defined itself as a reaction or remedy to the dominant culture. In some sense Agile represents a determined cultural fork within SDLC.

This is pretty interesting as:

Wikipedia, perhaps wrongly, perhaps not, lists Open Source as a SDLC

https://en.wikipedia.org/wiki/Systems_development_life_cycle

Open source as a culture-method is good for developing infrastructure / backend solutions.

Open-source software has tended to be slanted towards the infrastructural/back-end side of the software spectrum represented here. There are several reasons for this:

- End-user applications are hard to write, not only because a programmer has to deal with a graphical, windowed environment which is constantly changing, nonstandard, and buggy simply because of its complexity, but also because most programmers are not good graphical interface designers, with notable exceptions.

- Culturally, open-source software has been conducted in the networking code and operating system space for years.

- Open-source tends to thrive where incremental change is rewarded, and historically that has meant back-end systems more than front-ends.

- Much open-source software was written by engineers to solve a task they had to do while developing commercial software or services; so the primary audience was, early on, other engineers.

This is why we see solid open-source offerings in the operating system and network services space, but very few offerings in the desktop application space.

http://www.oreilly.com/openbook/opensources/book/brian.html

“The real challenge for open-source software is not whether it will replace Microsoft in dominating the desktop, but rather whether it can craft a business model that will help it to become the “Intel Inside” of the next generation of computer applications.”

http://www.oreilly.com/openbook/opensources/book/tim.html

Stages (Vixie)

http://www.oreilly.com/openbook/opensources/book/vixie.html

1. inception (developer) – “The far more common case of an open-source project is one where the people involved are having fun and want their work to be as widely used as possible ”

2. requirements assertion (developer) – “Open Source folks tend to build the tools they need or wish they had.”

3. no design phase 1 – “There usually just is no system-level design”

4. no design phase 2 – “Another casualty of being unfunded and wanting to have fun is a detailed design…Detailed design ends up being a side effect of the implementation. ”

5. implementation (developer) – “This is the fun part. Implementation is what programmers love most; it’s what keeps them up late hacking when they could be sleeping. ”

6. integration (developer) – writing manpages and READMEs

7. field testing – forums, issues in trackers, lists, irc, chat

My thoughts:

1. requirements assertion (itch) – 1 or more developers have an idea and assert the requirements

2. build (scratch) – building begins, might be preceded by some simple system design but more likely to proceed towards a proof of concept

3. use – users, might be developers only, use or try the software

4. interaction – interaction between initial devs and users and/or developers. May lead to use or contributions. This is the iterative growth of ‘the community’. Contributions might be code, documentation, marketing etc…

Part 4 is all about developing culture.

The process might terminate at step 2, or cycle steps 1 & 2 only, or cycle 1, 2, 3 only. Successful projects generally repeat items 1-4 in a parallel and cyclical fashion.
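To make the termination and cycling cases above a bit more concrete for myself, here is a minimal sketch in Python. Everything in it is my own illustration: the names `Stage`, `run_cycles` and `reaches_community` are hypothetical, and it walks the steps one after another, so it deliberately ignores the parallel nature of real projects.

```python
from enum import Enum


class Stage(Enum):
    """The four steps from my list above (names are illustrative only)."""
    ASSERT_REQUIREMENTS = 1  # the "itch"
    BUILD = 2                # the "scratch"
    USE = 3
    INTERACTION = 4


def run_cycles(last_stage_reached, cycles):
    """Return the sequence of stages a project passes through.

    last_stage_reached: the furthest step the project gets to in each cycle
    cycles: how many times the project starts again from step 1
    """
    path = []
    for _ in range(cycles):
        for stage in Stage:  # Enum members iterate in definition order
            path.append(stage)
            if stage is last_stage_reached:
                break
    return path


def reaches_community(path):
    """Assumption: community (culture) only starts to form at step 4."""
    return Stage.INTERACTION in path


# A project that only ever cycles steps 1 and 2 never gets to culture building.
solo = run_cycles(Stage.BUILD, cycles=3)
# A project that repeats all four steps does.
full = run_cycles(Stage.INTERACTION, cycles=2)
print(reaches_community(solo), reaches_community(full))
```

The only point of the sketch is that the difference between the cases is which step the loop turns back at, not the steps themselves.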

So… as to why this model is good for adoption… I think it is the openness of the process that does this. That is, it is the inclusiveness that makes wide scale adoption possible. Companies that have product teams do not necessarily achieve this via this means since the ‘ownership’ of the product is contained within its own walls. Abele broke this model, extending the team into customer space.

Notable issues:

Founded by Kent Beck in the mid 90s. Interestingly, pre .com there was a general need to accelerate the design and production of products. Speed to market was becoming an increasingly competitive factor. Extreme Programming was born from these conditions.

Seems Extreme Programming is really the canonical start to agile values. Many practices are outlined in the book but the most common is ‘trust yourself’. In a way you could narrate the evolution as being a move of early computer science as a practice of researchers and gov agencies, to corporate dev and markets in the 60s, then a reclaiming of programmer power in the 80s… Kent Beck’s writing is almost like a reclaiming of rights and power… like a workers movement… much of the language is centered around trusting yourself as a programmer.

The Extreme Programming tools and tricks are all about optimising quality of code and programmer satisfaction.

Everett Rogers’ book. An apparent classic about how innovations are adopted.

Rogers lists the following components as critical to diffusion processes:

1. the nature of the innovation

2. communication

3. time

4. social system

Important things to consider regarding the nature of the innovation:

1. Relative Advantage of the innovation

2. Compatibility of the innovation with existing values and experiences

3. Complexity – the simpler the better

4. Trialability – the more an innovation can be experimented with the better

5. Observability – the degree to which the results of the innovation are visible to others.

In addition – Rogers believes an innovation diffuses more rapidly when “it can be re-invented”…this re-invention also helps ensure that “its adoption is more likely to be sustained”.

On top of this, communication is vital. Rogers states that “the heart of the diffusion process consists of the modelling and imitation by potential adopters of their network partners who have previously adopted”.

Interestingly, Rogers also states that while communication is easiest amongst those that have similar beliefs (homophilous), “one of the most distinctive problems in the diffusion of innovations is that the participants are usually heterophilous”… the nature of diffusion requires that “at least some degree of heterophily be present”… “ideally the individuals would be homophilous on all other variables […] even though they are heterophilous regarding the innovation. Usually, however, the two individuals are heterophilous on all of these variables”… hence… facilitation!

Steps that one is likely to go through when adopting an innovation:

1. knowledge (of the innovation) eg. comms through website etc

2. persuasion (usually interpersonal comm)

3. decision

4. implementation (and re-invention)

5. confirmation

Interesting to understand how long it takes people to go through this process.

Those likely to adopt include:

1. innovators

2. early adopters

3. early majority

4. late majority

5. laggards

“the measure of innovativeness and the classification of a systems members into adopter categories are based upon the relative time at which an innovation is adopted”.

What is the expected and actual s-curve adoption rate for your innovation?
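To get a feel for that question, here is a small Python sketch. It makes two assumptions that are mine rather than anything I have measured: the cut-offs use Rogers’ standard scheme of classifying adopters by how far their adoption time sits from the mean, in standard deviations, and the expected S-curve is modelled as a simple logistic function. The function names and the `midpoint` and `rate` parameters are hypothetical.

```python
import math
from statistics import mean, pstdev


def adopter_category(t, mu, sigma):
    """Classify one adopter by adoption time t, given the mean (mu) and
    standard deviation (sigma) of adoption times in the social system."""
    if t < mu - 2 * sigma:
        return "innovator"
    if t < mu - sigma:
        return "early adopter"
    if t < mu:
        return "early majority"
    if t < mu + sigma:
        return "late majority"
    return "laggard"


def classify(adoption_times):
    """Classify every member of a system from the observed adoption times."""
    mu = mean(adoption_times)
    sigma = pstdev(adoption_times)
    return [adopter_category(t, mu, sigma) for t in adoption_times]


def logistic_adoption(t, midpoint, rate):
    """Expected fraction of the system that has adopted by time t.

    Assumes a logistic S-curve; midpoint is the time at which half the
    system has adopted, rate controls how steep the curve is.
    """
    return 1.0 / (1.0 + math.exp(-rate * (t - midpoint)))


# Compare the expected curve with whatever adoption dates you actually have.
for month in range(0, 13, 3):
    print(month, round(logistic_adoption(month, midpoint=6, rate=0.8), 3))
```

Plotting the actual cumulative adoption of a project against something like `logistic_adoption` would at least show whether it is still in the flat early part of the curve or has passed the midpoint.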

Diffusion occurs within a social system. The social system defines the boundaries.