Strange days… We use Vivliostyle at Coko, but it has been a fraught experience. Vivliostyle is an open source javascript library for automating typesetting of HTML/CSS content. We use it in Editoria for automating the production of book-formatted PDF from an HTML source. You can read a little about how this works here.

The source code is open source, but it was apparent from the beginning that Vivliostyle-the-company were not into collaborating unless we could pay. We tried very hard to get inside their bubble and contribute, but were pretty well cold-shouldered. We offered meeting resources for community building, and developer and designer time, to help move it all on. Coko went to quite some effort to promote what they were doing (eg https://www.adamhyde.net/why-vivliostyle-is-important/ + the posts on PagedMedia.org by Coko’s Julien Taquet. But they wanted none of it. I was also pretty sure they were being paid to extend Vivliostyle but not putting those changes back into the common pool. Fauxpen, as they say.

We weren’t the only ones to feel things were odd – I spoke to many people who felt the same way and who had had similar experiences. As a result, to de-risk ourselves I founded another initiative – pagedmedia.org – so we would not be reliant on Vivliostyle. My thoughts on this were very similar to what I recently wrote about editors ie. if an open source community is not open to building community, something is wrong and you should carefully consider whether you should be involved with them. They could at any time change direction, and if there was no community at play, then you could be left high and dry. Sometimes you have to make hard decisions and we made it…

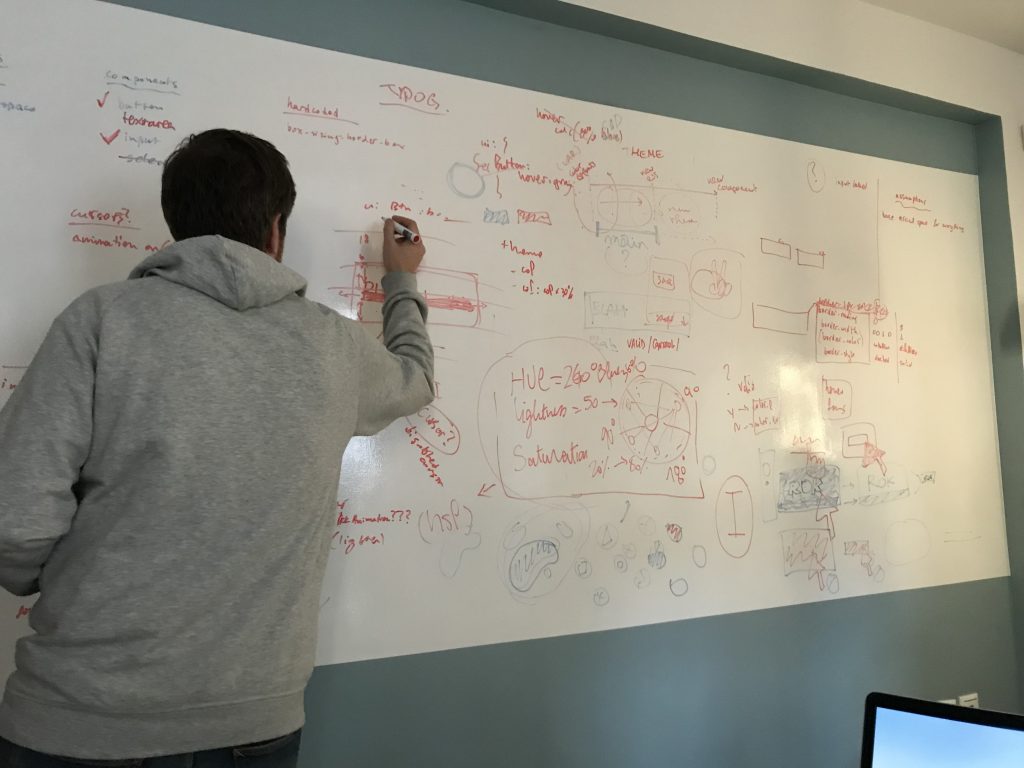

No one really wants to build an automated typesetting solution from zero unless they have to. After trying to solve this problem for the last 10 years or so (with open source) I wanted to see it done…so… sigh… if it (unfortunately) wasn’t going to be Vivliostyle after all, then what choice did I have? So I committed to this new endeavor (which is going very well! some cool updates soon) and we launched at MIT Press earlier this year with a community-first approach. We are, similarly, currently considering our choice of editor libraries because of similar concerns.

So, as it happens, I guessed it right and moved at the right time… as that is exactly what has happened with Vivliostyle. A few weeks ago they mysteriously posted a new company name (Trim-Marks Inc) and site under the old URL and pointed vivliostyle.com to vivliostyle.org. No further information was forthcoming. Now there is an announcement about it all on vivliostyle.org… you can read it here:

https://vivliostyle.org/blog/2018/03/26/a-new-beginning/

Essentially Vivliostyle-the-company went off to form trim-marks.com.

I have no idea what they do, but it isn’t open source. Vivliostyle, the open source project, is apparently continuing under the new .org site, led by Shinyu Murakami and Florian Rivoal. I know them and like them both. Very talented and committed people. I met Shinyu when he was thinking about how to tackle this problem – we met at Books in Browsers a long time ago and talked about strategies for solving this problem. So, I wish them all the best, but I retain some initial skepticism – until I see them actively building community, I won’t be terribly interested in going down that path again… They are good folks, so who knows… fingers crossed (the more projects active in this space the better).